Sidekick is a personal AI assistant designed to enhance productivity by integrating advanced language model capabilities into everyday workflows. It helps users organize knowledge, automate tasks, and interact with information through structured, persistent conversations.

The project is built as a practical, extensible system that combines Large Language Models (LLMs), Retrieval-Augmented Generation (RAG), and agent-based workflows.

- Each Knowledge Base folder has its own dedicated chat.

- Every indexed folder represents an independent context and conversation.

- Chats are isolated per folder, preventing context mixing.

- This design allows users to work on multiple topics or projects in parallel.

- Chats and folders are persistent and can be resumed at any time.

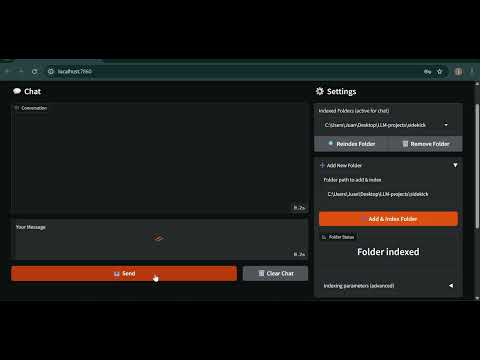

Click the image below to watch a full demo of SidekickAI on YouTube.

- Chat Model: `gpt-4o-mini`. Chosen to reduce costs while maintaining strong reasoning and tool usage.

- Embedding Model: `text-embedding-ada-002` via OpenAI API. Used for semantic indexing and retrieval inside knowledge base folders.

- Frameworks:

  - LangGraph for agent orchestration and workflow control.

  - LangChain ecosystem tools for retrieval, tools, and integrations.

- Contextual understanding using LLMs.

- Knowledge Base per folder, each with its own chat history.

- Persistent chats saved per user and per folder.

- User authentication with private settings and conversations.

- Customizable indexing with adjustable chunk size and overlap.

- Configurable retrieval count per query.

- Modular tool-based agent system.

- Clean and extensible LangGraph-based architecture.

- Supported file types for indexing:

  - PDF (.pdf)

  - Markdown (.md)

  - Plain text (.txt)

  - Python source files (.py)

- The indexing pipeline is designed to be easily extensible to support additional file formats.
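One way an extensible indexing pipeline can be organized is a registry that maps file extensions to loader functions. The sketch below is illustrative only: `LOADERS`, `register_loader`, `is_supported`, and `load_document` are hypothetical names, not Sidekick's actual code, and PDF is omitted because it needs a third-party parser.

```python
from pathlib import Path
from typing import Callable, Dict

# Hypothetical loader type: takes a path and returns the file's text.
Loader = Callable[[Path], str]


def load_plain_text(path: Path) -> str:
    """Read a UTF-8 text file (covers .md, .txt and .py in this sketch)."""
    return path.read_text(encoding="utf-8")


# Assumed registry of extension -> loader. A PDF loader would be registered
# the same way once a PDF parsing library is available.
LOADERS: Dict[str, Loader] = {
    ".md": load_plain_text,
    ".txt": load_plain_text,
    ".py": load_plain_text,
}


def register_loader(extension: str, loader: Loader) -> None:
    """Add support for a new file format by registering a loader."""
    LOADERS[extension.lower()] = loader


def is_supported(path: Path) -> bool:
    """Check whether a file's extension has a registered loader."""
    return path.suffix.lower() in LOADERS


def load_document(path: Path) -> str:
    """Dispatch to the loader registered for the file's extension."""
    loader = LOADERS.get(path.suffix.lower())
    if loader is None:
        raise ValueError(f"Unsupported file type: {path.suffix}")
    return loader(path)
```

With this layout, supporting a new format means one `register_loader` call rather than a change to the indexing code itself.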

RAG (Retrieval-Augmented Generation) is implemented as one of Sidekick’s tools, not a mandatory step.

- RAG is only used when it is enabled and when the agent decides it is useful/necessary for a given prompt.

- If RAG is disabled (via tool settings) or if no folder/file context is selected, Sidekick will answer using the LLM and any other enabled tools.

- This keeps the agent flexible: it can work as a general assistant, or as a knowledge-base assistant, depending on the session configuration.
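The gating described above can be sketched as a small function that decides which tools are offered to the agent for a session. This is a minimal illustration under assumed names (`select_tools`, the `enabled` settings dict, and the `"rag"` key are hypothetical); the agent still decides per prompt whether to call an offered tool.

```python
from typing import Dict, List, Optional


def select_tools(enabled: Dict[str, bool], folder: Optional[str]) -> List[str]:
    """Return the names of tools the agent may call in this session."""
    # Every enabled non-RAG tool is always available.
    tools = [name for name, on in enabled.items() if on and name != "rag"]
    # RAG is offered only when it is enabled AND a folder context is selected.
    if enabled.get("rag", False) and folder is not None:
        tools.append("rag")
    return tools
```

For example, `select_tools({"rag": True, "web_search": True}, None)` leaves RAG out because no folder is selected, so the session behaves as a general assistant.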

git clone https://github.com/Jsrodrigue/sidekickAI.git

cd sidekickAI

python -m venv .venv

source .venv/bin/activate

pip install -r requirements.txt

(On Windows, activate with `.venv\Scripts\activate`)

This project supports uv for fast dependency management.

uv venv

uv sync

- Create an account.

- Log in to your workspace.

- Create or select a Knowledge Base folder.

- Chat with Sidekick inside the selected folder.

After installing dependencies, start the application using one of the following options:

With pip (make sure your virtual environment is activated):

python app.py

With uv:

uv run app.py

The application will start locally, and you can open it at the URL shown in the terminal.

Each Knowledge Base can be configured independently:

- Chunk Size: controls how documents are split during indexing.

- Chunk Overlap: improves context continuity across chunks.

- Retrieval Count: number of documents retrieved per query.
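To make the three settings concrete, here is a minimal character-based sketch. It is not Sidekick's splitter (the project relies on LangChain tooling, whose splitters work differently); `chunk_text` and `top_k` are hypothetical helpers that only show how chunk size, overlap, and retrieval count interact.

```python
from typing import List, Tuple


def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> List[str]:
    """Split text into fixed-size chunks that share `chunk_overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap  # how far the window advances each time
    chunks: List[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


def top_k(scored_chunks: List[Tuple[str, float]], k: int) -> List[str]:
    """Keep the k highest-scoring chunks (the retrieval count)."""
    ranked = sorted(scored_chunks, key=lambda pair: pair[1], reverse=True)
    return [chunk for chunk, _ in ranked[:k]]
```

With `chunk_text("abcdefghij", 4, 1)` the chunks are `"abcd"`, `"defg"`, and `"ghij"`: each chunk repeats the last character of the previous one, which is the continuity that overlap provides.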

OPENAI_API_KEY=your_openai_api_key_here

SERPER_API_KEY=your_serper_api_key_here
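A quick way to check that these variables are visible to the process before starting the app is sketched below. How Sidekick itself loads them (for example from a `.env` file) is not stated here, so `EXPECTED_KEYS` and `missing_keys` are hypothetical helpers.

```python
import os
from typing import List, Mapping

# The two variables named above; whether both are strictly required
# depends on which tools are enabled (assumption).
EXPECTED_KEYS = ("OPENAI_API_KEY", "SERPER_API_KEY")


def missing_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the expected variables that are unset or empty."""
    return [key for key in EXPECTED_KEYS if not env.get(key)]


if __name__ == "__main__":
    missing = missing_keys()
    if missing:
        print("Missing environment variables:", ", ".join(missing))
```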

Sidekick uses a LangGraph-based agent loop with a single worker node and a tool execution node.

The graph is designed to be simple, deterministic, and extensible.

- Worker node

  - Main LLM node with tools bound

  - Builds a dynamic system prompt using:

    - Success criteria (if provided)

    - Current timestamp

    - Tool usage constraints

  - Decides whether tools are needed based on the model output

- Tool node

  - Executes tool calls emitted by the worker

  - Supports multiple tool invocations per turn (with safety limits)

- START → Worker

- Worker decides:

  - If tool calls are present → Tools

  - If not → END

- Tools → Worker (loop continues)

- Worker → END when no further tool calls are needed
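The control flow above can be sketched in plain Python without LangGraph, which makes the loop easy to follow. This is an illustration, not the project's graph definition: the message dictionaries, `route`, `run_graph`, and the `max_steps` limit are all assumptions.

```python
from typing import Any, Callable, Dict, List

# Hypothetical shared state: a dict holding the running message list.
State = Dict[str, List[Dict[str, Any]]]


def route(state: State) -> str:
    """Conditional edge: go to tools if the last message requests any."""
    last = state["messages"][-1]
    return "tools" if last.get("tool_calls") else "end"


def run_graph(
    worker: Callable[[State], Dict[str, Any]],
    tools: Dict[str, Callable[..., Any]],
    state: State,
    max_steps: int = 10,  # assumed safety limit on worker/tool round trips
) -> State:
    for _ in range(max_steps):
        # START -> Worker, or Tools -> Worker on later iterations.
        state["messages"].append(worker(state))
        if route(state) == "end":
            return state  # Worker -> END
        # Worker -> Tools: run every tool call the worker emitted.
        for call in state["messages"][-1]["tool_calls"]:
            result = tools[call["name"]](**call["args"])
            state["messages"].append(
                {"role": "tool", "name": call["name"], "content": result}
            )
    raise RuntimeError("Step limit reached before the worker finished")
```

In LangGraph the same shape is typically expressed with a conditional edge from the worker node and a plain edge from the tool node back to the worker.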

- Tool usage is conditional and bounded per turn.

- Disabled tools are respected at runtime.

- The worker node is stateless beyond the shared graph state.

- Memory is injected via a checkpointer to persist conversations per folder.

- RAG is treated as a tool: it can be enabled/disabled and is not always invoked.
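Per-folder persistence can be pictured as a store keyed by conversation. LangGraph checkpointers are keyed by a thread identifier; how Sidekick derives that identifier is not stated, so the `(user_id, folder)` key and the `FolderMemory` class below are assumptions used only to illustrate the isolation.

```python
from typing import Dict, List, Tuple


class FolderMemory:
    """In-memory stand-in for a checkpointer, one history per (user, folder)."""

    def __init__(self) -> None:
        self._threads: Dict[Tuple[str, str], List[dict]] = {}

    def load(self, user_id: str, folder: str) -> List[dict]:
        """Return a copy of the saved history, or an empty list if none exists."""
        return list(self._threads.get((user_id, folder), []))

    def append(self, user_id: str, folder: str, message: dict) -> None:
        """Persist one message to the conversation for this user and folder."""
        self._threads.setdefault((user_id, folder), []).append(message)


memory = FolderMemory()
memory.append("alice", "research", {"role": "user", "content": "Summarize paper A"})
memory.append("alice", "recipes", {"role": "user", "content": "Find a soup recipe"})
# Each folder resumes with only its own history.
research_history = memory.load("alice", "research")
```

Because the worker reads only the state loaded for the current key, it can stay stateless while conversations in different folders never mix.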

This design makes the graph easy to extend with:

- Additional decision nodes

- Specialized workers

- Validation or guardrail steps

- Multi-agent routing in the future

- The user sends a message in a selected Knowledge Base folder (or without any folder).

- The LangGraph worker node processes the input.

- If RAG is enabled and applicable, relevant documents are retrieved.

- Tools are invoked only when necessary (based on model decisions and enabled tool settings).

- The response is generated and saved to the folder-specific chat.

Sidekick exposes multiple tool groups. Any tool can be enabled/disabled at runtime, and the agent only uses tools when needed:

- RAG (Retrieval-Augmented Generation) for document-based answering (optional tool, not always invoked).

- File tools for reading, writing, and listing files.

- Web search for external information.

- Python execution for dynamic tasks.

- Wikipedia integration for quick factual lookups.

- LangGraph for coordinating agent workflows.

This project was inspired by the Udemy courses "AI Engineer Core Track: LLM Engineering, RAG, QLoRA, Agents" and "AI Engineer Agentic Track: The Complete Agent & MCP Course" by Ed Donner.

It was developed as a portfolio project to demonstrate practical skills in:

- LLM-based automation

- Agent design

- Retrieval systems

- Tool orchestration

This project is licensed under the MIT License. See the LICENSE file for details.