- Berkeley Function Calling Leaderboard (BFCL)

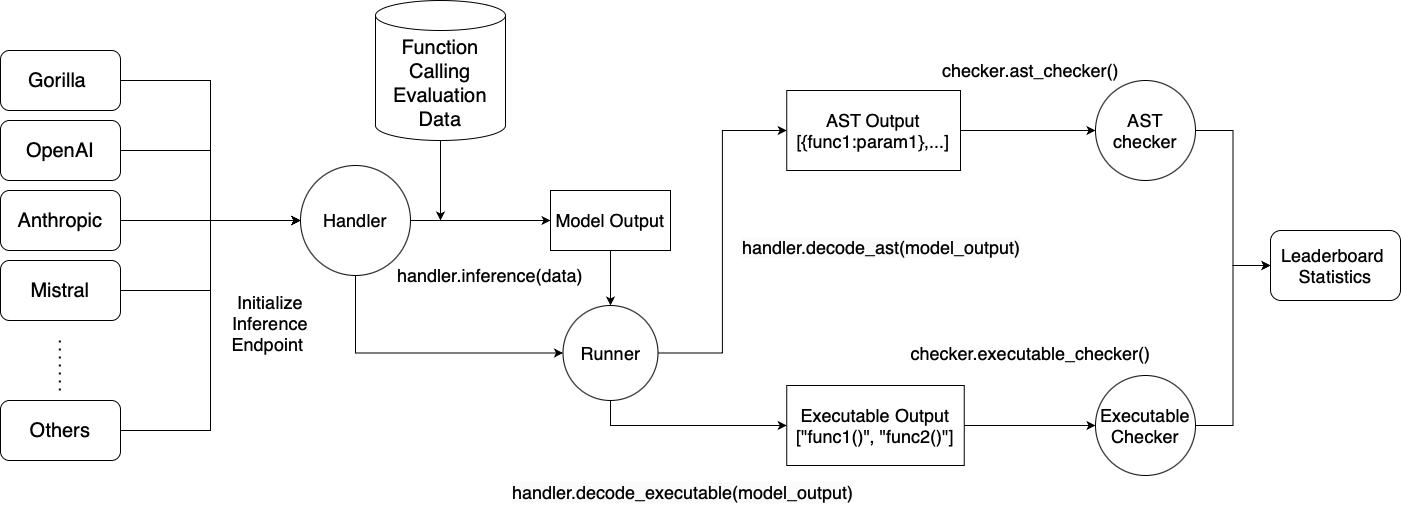

We introduce the Berkeley Function Calling Leaderboard (BFCL), the first comprehensive and executable function call evaluation dedicated to assessing Large Language Models' (LLMs) ability to invoke functions. Unlike previous evaluations, BFCL accounts for various forms of function calls, diverse scenarios, and executability.

💡 Read more in our blog posts:

- BFCL v1: Simple, Parallel, and Multiple Function Call eval with AST

- BFCL v2: Enterprise and OSS-contributed Live Data

- BFCL v3: Multi-Turn & Multi-Step Function Call Evaluation

- BFCL V4 Part 1: Agentic Web Search

- BFCL V4 Part 2: Agentic Memory Management

- BFCL V4 Part 3: Agentic Format Sensitivity

🦍 See the live leaderboard at Berkeley Function Calling Leaderboard

# Create a new Conda environment with Python 3.10

conda create -n BFCL python=3.10

conda activate BFCL

# Clone the Gorilla repository

git clone https://github.com/ShishirPatil/gorilla.git

# Change directory to the `berkeley-function-call-leaderboard`

cd gorilla/berkeley-function-call-leaderboard

# Install the package in editable mode

pip install -e .If you simply want to run the evaluation without making code changes, you can

install the prebuilt wheel instead. Be careful not to confuse our package with

the unrelated bfcl project on PyPI—make sure you install bfcl-eval:

pip install bfcl-eval # Be careful not to confuse with the unrelated `bfcl` project on PyPI!For locally hosted models, choose one of the following backends, ensuring you have the right GPU and OS setup:

sglang is much faster than vllm in our specific multi-turn use case, but it only supports newer GPUs with SM 80+ (Ampere etc).

If you are using an older GPU (T4/V100), you should use vllm instead as it supports a much wider range of GPUs.

Using vllm:

pip install -e .[oss_eval_vllm]Using sglang:

pip install -e .[oss_eval_sglang]Optional: If using sglang, we recommend installing flashinfer for speedups. Find instructions here.

Important: If you installed the package from PyPI (using pip install bfcl-eval), you must set the BFCL_PROJECT_ROOT environment variable to specify where the evaluation results and score files should be stored.

Otherwise, you'll need to navigate deep into the Python package's source code folder to access the evaluation results and configuration files.

For editable installations (using pip install -e .), setting BFCL_PROJECT_ROOT is optional--it defaults to the berkeley-function-call-leaderboard directory.

Set BFCL_PROJECT_ROOT as an environment variable in your shell environment:

# In your shell environment

export BFCL_PROJECT_ROOT=/path/to/your/desired/project/directoryWhen BFCL_PROJECT_ROOT is set:

- The

result/folder (containing model responses) will be created at$BFCL_PROJECT_ROOT/result/ - The

score/folder (containing evaluation results) will be created at$BFCL_PROJECT_ROOT/score/ - The library will look for the

.envconfiguration file at$BFCL_PROJECT_ROOT/.env(see Setting up Environment Variables)

We store API keys and other configuration variables (separate from the BFCL_PROJECT_ROOT variable mentioned above) in a .env file. A sample .env.example file is distributed with the package.

For editable installations:

cp bfcl_eval/.env.example .env

# Fill in necessary values in `.env`For PyPI installations (using pip install bfcl-eval):

cp $(python -c "import bfcl_eval; print(bfcl_eval.__path__[0])")/.env.example $BFCL_PROJECT_ROOT/.env

# Fill in necessary values in `.env`If you are running any proprietary models, make sure the model API keys are included in your .env file. Models like GPT, Claude, Mistral, Gemini, Nova, will require them.

The library looks for the .env file in the project root, i.e. $BFCL_PROJECT_ROOT/.env.

For the web_search test category, we use the SerpAPI service to perform web search. You need to sign up for an API key and add it to your .env file. You can also switch to other web search APIs by changing the search_engine_query function in bfcl_eval/eval_checker/multi_turn_eval/func_source_code/web_search.py.

MODEL_NAME: For available models, please refer to SUPPORTED_MODELS.md. If not specified, the default modelgorilla-openfunctions-v2is used.TEST_CATEGORY: For available test categories, please refer to TEST_CATEGORIES.md. If not specified, all categories are included by default.

You can provide multiple models or test categories by separating them with commas. For example:

bfcl generate --model claude-3-5-sonnet-20241022-FC,gpt-4o-2024-11-20-FC --test-category simple_python,parallel,live_multiple,multi_turnSometimes you may only need to regenerate a handful of test entries—for instance when iterating on a new model or after fixing an inference bug. Passing the --run-ids flag lets you target exact test IDs rather than an entire category:

bfcl generate --model MODEL_NAME --run-ids # --test-category will be ignoredWhen this flag is set the generation pipeline reads a JSON file named

test_case_ids_to_generate.json located in the project root (the same

place where .env lives). The file should map each test category to a list of

IDs to run:

{

"simple_python": ["simple_python_102", "simple_python_103"],

"multi_turn_base": ["multi_turn_base_15"]

}Note: When using

--run-ids, the--test-categoryflag is ignored.

A sample file is provided at bfcl_eval/test_case_ids_to_generate.json.example; copy it to your project root so the CLI can pick it up regardless of your working directory:

For editable installations:

cp bfcl_eval/test_case_ids_to_generate.json.example ./test_case_ids_to_generate.jsonFor PyPI installations:

cp $(python -c "import bfcl_eval, pathlib; print(pathlib.Path(bfcl_eval.__path__[0]) / 'test_case_ids_to_generate.json.example')") $BFCL_PROJECT_ROOT/test_case_ids_to_generate.jsonOnce --run-ids is provided only the IDs listed in the JSON will be evaluated.

- By default, generated model responses are stored in a

result/folder under the project root (which defaults to the package directory):result/MODEL_NAME/BFCL_v3_TEST_CATEGORY_result.json. - You can customise the location by setting the

BFCL_PROJECT_ROOTenvironment variable or passing the--result-diroption.

An inference log is included with the model responses to help analyze/debug the model's performance, and to better understand the model behavior. For more verbose logging, use the --include-input-log flag. Refer to LOG_GUIDE.md for details on how to interpret the inference logs.

bfcl generate --model MODEL_NAME --test-category TEST_CATEGORY --num-threads 1- Use

--num-threadsto control the level of parallel inference. The default (1) means no parallelization. - The maximum allowable threads depends on your API's rate limits.

bfcl generate \

--model MODEL_NAME \

--test-category TEST_CATEGORY \

--backend {sglang|vllm} \

--num-gpus 1 \

--gpu-memory-utilization 0.9 \

--local-model-path /path/to/local/model # ← optional- Choose your backend using

--backend sglangor--backend vllm. The default backend issglang. - Control GPU usage by adjusting

--num-gpus(default1, relevant for multi-GPU tensor parallelism) and--gpu-memory-utilization(default0.9), which can help avoid out-of-memory errors. --local-model-path(optional): Point this flag at a directory that already contains the model's files (config.json, tokenizer, weights, etc.). Use it only when you've pre‑downloaded the model and the weights live somewhere other than the default$HF_HOMEcache.

If you have a server already running (e.g., vLLM in a SLURM cluster), you can bypass the vLLM/sglang setup phase and directly generate responses by using the --skip-server-setup flag:

bfcl generate --model MODEL_NAME --test-category TEST_CATEGORY --skip-server-setupIn addition, you should specify the endpoint and port used by the local server. By default, the endpoint is localhost and the port is 1053. These can be overridden by the LOCAL_SERVER_ENDPOINT and LOCAL_SERVER_PORT environment variables in the .env file:

LOCAL_SERVER_ENDPOINT=localhost

LOCAL_SERVER_PORT=1053For those who prefer using script execution instead of the CLI, you can run the following command:

python -m bfcl_eval.openfunctions_evaluation --model MODEL_NAME --test-category TEST_CATEGORYWhen specifying multiple models or test categories, separate them with spaces, not commas. All other flags mentioned earlier are compatible with the script execution method as well.

Important: You must have generated the model responses before running the evaluation.

Once you have the results, run:

bfcl evaluate --model MODEL_NAME --test-category TEST_CATEGORYIf you only generated a subset of benchmark entries (e.g. by using --run-ids during the generation step or by manually editing the result files) and you wish to evaluate just those entries, add the --partial-eval flag:

bfcl evaluate --model MODEL_NAME --test-category TEST_CATEGORY --partial-evalWhen --partial-eval is set, the evaluator silently skips IDs that are not present in the model result file and computes accuracy on the remaining subset. Please note that the score may differ from a full-set evaluation and therefore might not match the official leaderboard numbers.

The MODEL_NAME and TEST_CATEGORY options are the same as those used in the Generating LLM Responses section. For details, refer to SUPPORTED_MODELS.md and TEST_CATEGORIES.md.

If in the previous step you stored the model responses in a custom directory, specify it using the --result-dir flag or set BFCL_PROJECT_ROOT so the evaluator can locate the files.

Note: For unevaluated test categories, they will be marked as

N/Ain the evaluation result csv files. For summary columns (e.g.,Overall Acc,Non_Live Overall Acc,Live Overall Acc, andMulti Turn Overall Acc), the score reported will treat all unevaluated categories as 0 during calculation.

Evaluation scores are stored in a score/ directory under the project root (defaults to the package directory), mirroring the structure of result/: score/MODEL_NAME/BFCL_v3_TEST_CATEGORY_score.json.

- To use a custom directory for the score file, set the

BFCL_PROJECT_ROOTenvironment variable or specify--score-dir.

Additionally, four CSV files are generated in ./score/:

data_overall.csv– Overall scores for each model. This is used for updating the leaderboard.data_live.csv– Detailed breakdown of scores for each Live (single-turn) test category.data_non_live.csv– Detailed breakdown of scores for each Non-Live (single-turn) test category.data_multi_turn.csv– Detailed breakdown of scores for each Multi-Turn test category.

If you'd like to log evaluation results to WandB artifacts:

pip install -e.[wandb]Mkae sure you also set WANDB_BFCL_PROJECT=ENTITY:PROJECT in .env.

For those who prefer using script execution instead of the CLI, you can run the following command:

python -m bfcl_eval.eval_checker.eval_runner --model MODEL_NAME --test-category TEST_CATEGORYWhen specifying multiple models or test categories, separate them with spaces, not commas. All other flags mentioned earlier are compatible with the script execution method as well.

We welcome contributions! To add a new model:

- Review

bfcl_eval/model_handler/base_handler.pyand/orbfcl_eval/model_handler/local_inference/base_oss_handler.py(if your model is hosted locally). - Implement a new handler class for your model.

- Update

bfcl_eval/constants/model_config.py. - Submit a Pull Request.

For detailed steps, please see the Contributing Guide.

- Discord (

#leaderboardchannel) - Project Website

All the leaderboard statistics, and data used to train the models are released under Apache 2.0. BFCL is an open source effort from UC Berkeley and we welcome contributors. For any comments, criticisms, or questions, please feel free to raise an issue or a PR. You can also reach us via email.