# Project Structure and Function Definitions

This file contains the project directory structure and function definitions to help LLMs understand the codebase.

## Directory Structure

```
mcp-agent/
├── .vscode/
│   ├── extensions.json
│   ├── settings.json
├── schema/
│   ├── mcp-agent.config.schema.json
├── src/
│   ├── mcp_agent/
│   │   ├── agents/
│   │   │   ├── __init__.py
│   │   │   ├── agent.py
│   │   ├── cli/
│   │   │   ├── commands/
│   │   │   │   ├── config.py
│   │   │   ├── __init__.py
│   │   │   ├── __main__.py
│   │   │   ├── main.py
│   │   │   ├── terminal.py
│   │   ├── core/
│   │   │   ├── context.py
│   │   │   ├── context_dependent.py
│   │   │   ├── decorator_app.py
│   │   │   ├── exceptions.py
│   │   ├── data/
│   │   │   ├── artificial_analysis_llm_benchmarks.json
│   │   ├── eval/
│   │   │   ├── __init__.py
│   │   ├── executor/
│   │   │   ├── temporal/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── workflow_registry.py
│   │   │   │   ├── workflow_signal.py
│   │   │   ├── __init__.py
│   │   │   ├── decorator_registry.py
│   │   │   ├── executor.py
│   │   │   ├── signal_registry.py
│   │   │   ├── task_registry.py
│   │   │   ├── workflow.py
│   │   │   ├── workflow_registry.py
│   │   │   ├── workflow_signal.py
│   │   │   ├── workflow_task.py
│   │   ├── human_input/
│   │   │   ├── __init__.py
│   │   │   ├── handler.py
│   │   │   ├── types.py
│   │   ├── logging/
│   │   │   ├── __init__.py
│   │   │   ├── event_progress.py
│   │   │   ├── events.py
│   │   │   ├── json_serializer.py
│   │   │   ├── listeners.py
│   │   │   ├── logger.py
│   │   │   ├── progress_display.py
│   │   │   ├── rich_progress.py
│   │   │   ├── tracing.py
│   │   │   ├── transport.py
│   │   ├── mcp/
│   │   │   ├── __init__.py
│   │   │   ├── gen_client.py
│   │   │   ├── mcp_agent_client_session.py
│   │   │   ├── mcp_aggregator.py
│   │   │   ├── mcp_connection_manager.py
│   │   │   ├── mcp_server_registry.py
│   │   ├── server/
│   │   │   ├── app_server.py
│   │   │   ├── app_server_types.py
│   │   ├── telemetry/
│   │   │   ├── __init__.py
│   │   │   ├── usage_tracking.py
│   │   ├── utils/
│   │   │   ├── common.py
│   │   │   ├── pydantic_type_serializer.py
│   │   ├── workflows/
│   │   │   ├── embedding/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── embedding_base.py
│   │   │   │   ├── embedding_cohere.py
│   │   │   │   ├── embedding_openai.py
│   │   │   ├── evaluator_optimizer/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── evaluator_optimizer.py
│   │   │   ├── intent_classifier/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── intent_classifier_base.py
│   │   │   │   ├── intent_classifier_embedding.py
│   │   │   │   ├── intent_classifier_embedding_cohere.py
│   │   │   │   ├── intent_classifier_embedding_openai.py
│   │   │   │   ├── intent_classifier_llm.py
│   │   │   │   ├── intent_classifier_llm_anthropic.py
│   │   │   │   ├── intent_classifier_llm_openai.py
│   │   │   ├── llm/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── augmented_llm.py
│   │   │   │   ├── augmented_llm_anthropic.py
│   │   │   │   ├── augmented_llm_azure.py
│   │   │   │   ├── augmented_llm_bedrock.py
│   │   │   │   ├── augmented_llm_google.py
│   │   │   │   ├── augmented_llm_ollama.py
│   │   │   │   ├── augmented_llm_openai.py
│   │   │   │   ├── llm_selector.py
│   │   │   ├── orchestrator/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── orchestrator.py
│   │   │   │   ├── orchestrator_models.py
│   │   │   │   ├── orchestrator_prompts.py
│   │   │   ├── parallel/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── fan_in.py
│   │   │   │   ├── fan_out.py
│   │   │   │   ├── parallel_llm.py
│   │   │   ├── router/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── router_base.py
│   │   │   │   ├── router_embedding.py
│   │   │   │   ├── router_embedding_cohere.py
│   │   │   │   ├── router_embedding_openai.py
│   │   │   │   ├── router_llm.py
│   │   │   │   ├── router_llm_anthropic.py
│   │   │   │   ├── router_llm_openai.py
│   │   │   ├── swarm/
│   │   │   │   ├── __init__.py
│   │   │   │   ├── swarm.py
│   │   │   │   ├── swarm_anthropic.py
│   │   │   │   ├── swarm_openai.py
│   │   │   ├── __init__.py
│   │   ├── __init__.py
│   │   ├── app.py
│   │   ├── config.py
│   │   ├── console.py
│   │   ├── py.typed
├── LLMS.md
├── logs.txt
├── test_output.txt
```

## Project README

<p align="center">

<img src="https://github.com/user-attachments/assets/6f4e40c4-dc88-47b6-b965-5856b69416d2" alt="Logo" width="300" />

</p>

<p align="center">

<em>Build effective agents with Model Context Protocol using simple, composable patterns.</em>

</p>

<p align="center">

<a href="https://github.com/lastmile-ai/mcp-agent/tree/main/examples" target="_blank"><strong>Examples</strong></a>

|

<a href="https://www.anthropic.com/research/building-effective-agents" target="_blank"><strong>Building Effective Agents</strong></a>

|

<a href="https://modelcontextprotocol.io/introduction" target="_blank"><strong>MCP</strong></a>

</p>

<p align="center">

<a href="https://pypi.org/project/mcp-agent/"><img src="https://img.shields.io/pypi/v/mcp-agent?color=%2334D058&label=pypi" /></a>

<a href="https://github.com/lastmile-ai/mcp-agent/issues"><img src="https://img.shields.io/github/issues-raw/lastmile-ai/mcp-agent" /></a>

<a href="https://lmai.link/discord/mcp-agent"><img src="https://shields.io/discord/1089284610329952357" alt="discord" /></a>

<img alt="Pepy Total Downloads" src="https://img.shields.io/pepy/dt/mcp-agent?label=pypi%20%7C%20downloads"/>

<a href="https://github.com/lastmile-ai/mcp-agent/blob/main/LICENSE"><img src="https://img.shields.io/pypi/l/mcp-agent" /></a>

</p>

## Overview

**`mcp-agent`** is a simple, composable framework to build agents using [Model Context Protocol](https://modelcontextprotocol.io/introduction).

**Inspiration**: Anthropic announced 2 foundational updates for AI application developers:

1. [Model Context Protocol](https://www.anthropic.com/news/model-context-protocol) - a standardized interface to let any software be accessible to AI assistants via MCP servers.

2. [Building Effective Agents](https://www.anthropic.com/research/building-effective-agents) - a seminal writeup on simple, composable patterns for building production-ready AI agents.

`mcp-agent` puts these two foundational pieces into an AI application framework:

1. It handles the pesky business of managing the lifecycle of MCP server connections so you don't have to.

2. It implements every pattern described in Building Effective Agents, and does so in a _composable_ way, allowing you to chain these patterns together.

3. **Bonus**: It implements [OpenAI's Swarm](https://github.com/openai/swarm) pattern for multi-agent orchestration, but in a model-agnostic way.

Altogether, this is the simplest and easiest way to build robust agent applications. Much like MCP, this project is in early development.

We welcome all kinds of [contributions](/CONTRIBUTING.md), feedback and your help in growing this to become a new standard.

## Get Started

We recommend using [uv](https://docs.astral.sh/uv/) to manage your Python projects:

```bash

uv add "mcp-agent"

```

Alternatively:

```bash

pip install mcp-agent

```

### Quickstart

> [!TIP]
> The [`examples`](/examples) directory has several example applications to get started with.
> To run an example, clone this repo, then:
>
> ```bash
> cd examples/basic/mcp_basic_agent # Or any other example
> cp mcp_agent.secrets.yaml.example mcp_agent.secrets.yaml # Update API keys
> uv run main.py
> ```

Here is a basic "finder" agent that uses the fetch and filesystem servers to look up a file, read a blog and write a tweet. [Example link](./examples/basic/mcp_basic_agent/):

<details open>

<summary>finder_agent.py</summary>

```python
import asyncio
import os

from mcp_agent.app import MCPApp
from mcp_agent.agents.agent import Agent
from mcp_agent.workflows.llm.augmented_llm_openai import OpenAIAugmentedLLM

app = MCPApp(name="hello_world_agent")

async def example_usage():
    async with app.run() as mcp_agent_app:
        logger = mcp_agent_app.logger
        # This agent can read the filesystem or fetch URLs
        finder_agent = Agent(
            name="finder",
            instruction="""You can read local files or fetch URLs.
                Return the requested information when asked.""",
            server_names=["fetch", "filesystem"], # MCP servers this Agent can use
        )

        async with finder_agent:
            # Automatically initializes the MCP servers and adds their tools for LLM use
            tools = await finder_agent.list_tools()
            logger.info("Tools available:", data=tools)

            # Attach an OpenAI LLM to the agent (defaults to GPT-4o)
            llm = await finder_agent.attach_llm(OpenAIAugmentedLLM)

            # This will perform a file lookup and read using the filesystem server
            result = await llm.generate_str(
                message="Show me what's in README.md verbatim"
            )
            logger.info(f"README.md contents: {result}")

            # Uses the fetch server to fetch the content from URL
            result = await llm.generate_str(
                message="Print the first two paragraphs from https://www.anthropic.com/research/building-effective-agents"
            )
            logger.info(f"Blog intro: {result}")

            # Multi-turn interactions by default
            result = await llm.generate_str("Summarize that in a 128-char tweet")
            logger.info(f"Tweet: {result}")

if __name__ == "__main__":
    asyncio.run(example_usage())
```

</details>

<details>

<summary>mcp_agent.config.yaml</summary>

```yaml
execution_engine: asyncio
logger:
  transports: [console] # You can use [file, console] for both
  level: debug
  path: "logs/mcp-agent.jsonl" # Used for file transport
  # For dynamic log filenames:
  # path_settings:
  #   path_pattern: "logs/mcp-agent-{unique_id}.jsonl"
  #   unique_id: "timestamp" # Or "session_id"
  #   timestamp_format: "%Y%m%d_%H%M%S"

mcp:
  servers:
    fetch:
      command: "uvx"
      args: ["mcp-server-fetch"]
    filesystem:
      command: "npx"
      args:
        [
          "-y",
          "@modelcontextprotocol/server-filesystem",
          "<add_your_directories>",
        ]

openai:
  # Secrets (API keys, etc.) are stored in an mcp_agent.secrets.yaml file which can be gitignored
  default_model: gpt-4o
```

</details>

<details>

<summary>Agent output</summary>

<img width="2398" alt="Image" src="https://github.com/user-attachments/assets/eaa60fdf-bcc6-460b-926e-6fa8534e9089" />

</details>

## Table of Contents

- [Why use mcp-agent?](#why-use-mcp-agent)
- [Example Applications](#examples)
  - [Claude Desktop](#claude-desktop)
  - [Streamlit](#streamlit)
    - [Gmail Agent](#gmail-agent)
    - [RAG](#simple-rag-chatbot)
  - [Marimo](#marimo)
  - [Python](#python)
    - [Swarm (CLI)](#swarm)
- [Core Concepts](#core-components)
- [Workflow Patterns](#workflows)
  - [Augmented LLM](#augmentedllm)
  - [Parallel](#parallel)
  - [Router](#router)
  - [Intent-Classifier](#intentclassifier)
  - [Orchestrator-Workers](#orchestrator-workers)
  - [Evaluator-Optimizer](#evaluator-optimizer)
  - [OpenAI Swarm](#swarm-1)
- [Advanced](#advanced)
  - [Composing multiple workflows](#composability)
  - [Signaling and Human input](#signaling-and-human-input)
  - [App Config](#app-config)
  - [MCP Server Management](#mcp-server-management)
- [Contributing](#contributing)
- [Roadmap](#roadmap)
- [FAQs](#faqs)

## Why use `mcp-agent`?

There are too many AI frameworks out there already. But `mcp-agent` is the only one that is purpose-built for a shared protocol - [MCP](https://modelcontextprotocol.io/introduction). It is also the most lightweight, and is closer to an agent pattern library than a framework.

As [more services become MCP-aware](https://github.com/punkpeye/awesome-mcp-servers), you can use mcp-agent to build robust and controllable AI agents that can leverage those services out-of-the-box.

## Examples

Before we go into the core concepts of mcp-agent, let's show what you can build with it.

In short, you can build any kind of AI application with mcp-agent: multi-agent collaborative workflows, human-in-the-loop workflows, RAG pipelines and more.

### Claude Desktop

You can integrate mcp-agent apps into MCP clients like Claude Desktop.

#### mcp-agent server

This app wraps an mcp-agent application inside an MCP server, and exposes that server to Claude Desktop.

The app exposes agents and workflows that Claude Desktop can invoke to service the user's request.

https://github.com/user-attachments/assets/7807cffd-dba7-4f0c-9c70-9482fd7e0699

This demo shows a multi-agent evaluation task where each agent evaluates aspects of an input poem, and

then an aggregator summarizes their findings into a final response.

**Details**: Starting from a user's request over text, the application:

- dynamically defines agents to do the job

- uses the appropriate workflow to orchestrate those agents (in this case the Parallel workflow)

**Link to code**: [examples/basic/mcp_agent_server](./examples/basic/mcp_agent_server)

> [!NOTE]
> Huge thanks to [Jerron Lim (@StreetLamb)](https://github.com/StreetLamb)
> for developing and contributing this example!

### Streamlit

You can deploy mcp-agent apps using Streamlit.

#### Gmail agent

This app can perform read and write actions on Gmail using text prompts: reading, deleting, and sending emails, marking them as read/unread, and so on.

It uses an MCP server for Gmail.

https://github.com/user-attachments/assets/54899cac-de24-4102-bd7e-4b2022c956e3

**Link to code**: [gmail-mcp-server](https://github.com/jasonsum/gmail-mcp-server/blob/add-mcp-agent-streamlit/streamlit_app.py)

> [!NOTE]
> Huge thanks to [Jason Summer (@jasonsum)](https://github.com/jasonsum)
> for developing and contributing this example!

#### Simple RAG Chatbot

This app uses a Qdrant vector database (via an MCP server) to do Q&A over a corpus of text.

https://github.com/user-attachments/assets/f4dcd227-cae9-4a59-aa9e-0eceeb4acaf4

**Link to code**: [examples/usecases/streamlit_mcp_rag_agent](./examples/usecases/streamlit_mcp_rag_agent/)

> [!NOTE]
> Huge thanks to [Jerron Lim (@StreetLamb)](https://github.com/StreetLamb)
> for developing and contributing this example!

### Marimo

[Marimo](https://github.com/marimo-team/marimo) is a reactive Python notebook that replaces Jupyter and Streamlit.

Here's the "file finder" agent from [Quickstart](#quickstart) implemented in Marimo:

<img src="https://github.com/user-attachments/assets/139a95a5-e3ac-4ea7-9c8f-bad6577e8597" width="400"/>

**Link to code**: [examples/usecases/marimo_mcp_basic_agent](./examples/usecases/marimo_mcp_basic_agent/)

> [!NOTE]
> Huge thanks to [Akshay Agrawal (@akshayka)](https://github.com/akshayka)
> for developing and contributing this example!

### Python

You can write mcp-agent apps as Python scripts or Jupyter notebooks.

#### Swarm

This example demonstrates a multi-agent setup for handling different customer service requests in an airline context using the Swarm workflow pattern. The agents can triage requests, handle flight modifications, cancellations, and lost baggage cases.

https://github.com/user-attachments/assets/b314d75d-7945-4de6-965b-7f21eb14a8bd

**Link to code**: [examples/workflows/workflow_swarm](./examples/workflows/workflow_swarm/)

## Core Components

The following are the building blocks of the mcp-agent framework:

- **[MCPApp](./src/mcp_agent/app.py)**: global state and app configuration

- **MCP server management**: [`gen_client`](./src/mcp_agent/mcp/gen_client.py) and [`MCPConnectionManager`](./src/mcp_agent/mcp/mcp_connection_manager.py) to easily connect to MCP servers.

- **[Agent](./src/mcp_agent/agents/agent.py)**: An Agent is an entity that has access to a set of MCP servers and exposes them to an LLM as tool calls. It has a name and purpose (instruction).

- **[AugmentedLLM](./src/mcp_agent/workflows/llm/augmented_llm.py)**: An LLM that is enhanced with tools provided from a collection of MCP servers. Every Workflow pattern described below is an `AugmentedLLM` itself, allowing you to compose and chain them together.

Everything in the framework is a derivative of these core capabilities.

## Workflows

mcp-agent provides implementations for every pattern in Anthropic’s [Building Effective Agents](https://www.anthropic.com/research/building-effective-agents), as well as the OpenAI [Swarm](https://github.com/openai/swarm) pattern.

Each pattern is model-agnostic, and exposed as an `AugmentedLLM`, making everything very composable.

### AugmentedLLM

[AugmentedLLM](./src/mcp_agent/workflows/llm/augmented_llm.py) is an LLM that has access to MCP servers and functions via Agents.

LLM providers implement the AugmentedLLM interface to expose 3 functions:

- `generate`: Generate message(s) given a prompt, possibly over multiple iterations and making tool calls as needed.

- `generate_str`: Calls `generate` and returns result as a string output.

- `generate_structured`: Uses [Instructor](https://github.com/instructor-ai/instructor) to return the generated result as a Pydantic model.

Additionally, `AugmentedLLM` has memory, to keep track of long or short-term history.

<details>

<summary>Example</summary>

```python
from mcp_agent.agents.agent import Agent
from mcp_agent.workflows.llm.augmented_llm_anthropic import AnthropicAugmentedLLM

finder_agent = Agent(
    name="finder",
    instruction="You are an agent with filesystem + fetch access. Return the requested file or URL contents.",
    server_names=["fetch", "filesystem"],
)

# `logger` is assumed to come from a running MCPApp, as in the Quickstart
async with finder_agent:
    llm = await finder_agent.attach_llm(AnthropicAugmentedLLM)

    result = await llm.generate_str(
        message="Print the first 2 paragraphs of https://www.anthropic.com/research/building-effective-agents",
        # Can override model, tokens and other defaults
    )
    logger.info(f"Result: {result}")

    # Multi-turn conversation
    result = await llm.generate_str(
        message="Summarize those paragraphs in a 128 character tweet",
    )
    logger.info(f"Result: {result}")
```

</details>
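
To make the shape of the three-method interface concrete, here is a minimal, framework-free sketch. `EchoLLM` and `Tweet` are hypothetical stand-ins invented for illustration (a dataclass takes the place of a Pydantic model, and upper-casing takes the place of a model call); they are not mcp-agent classes.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Tweet:
    # Stand-in for a Pydantic response model (assumption for this sketch).
    text: str


@dataclass
class EchoLLM:
    """Hypothetical toy provider: 'generates' by upper-casing the prompt."""

    history: list = field(default_factory=list)  # simple conversation memory

    async def generate(self, message: str) -> list:
        # A real provider would call a model here and may loop over tool calls.
        self.history.append(message)
        return [message.upper()]

    async def generate_str(self, message: str) -> str:
        # Delegates to generate() and joins the messages into one string.
        return "\n".join(await self.generate(message))

    async def generate_structured(self, message: str, response_model: type):
        # Wraps the string output in the requested model type.
        return response_model(await self.generate_str(message))


async def demo_llm():
    llm = EchoLLM()
    text = await llm.generate_str("hello")
    tweet = await llm.generate_structured("short post", Tweet)
    return text, tweet, llm.history
```

Because `generate_str` and `generate_structured` are both built on `generate`, a provider in this sketch only has to supply one model-specific method.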

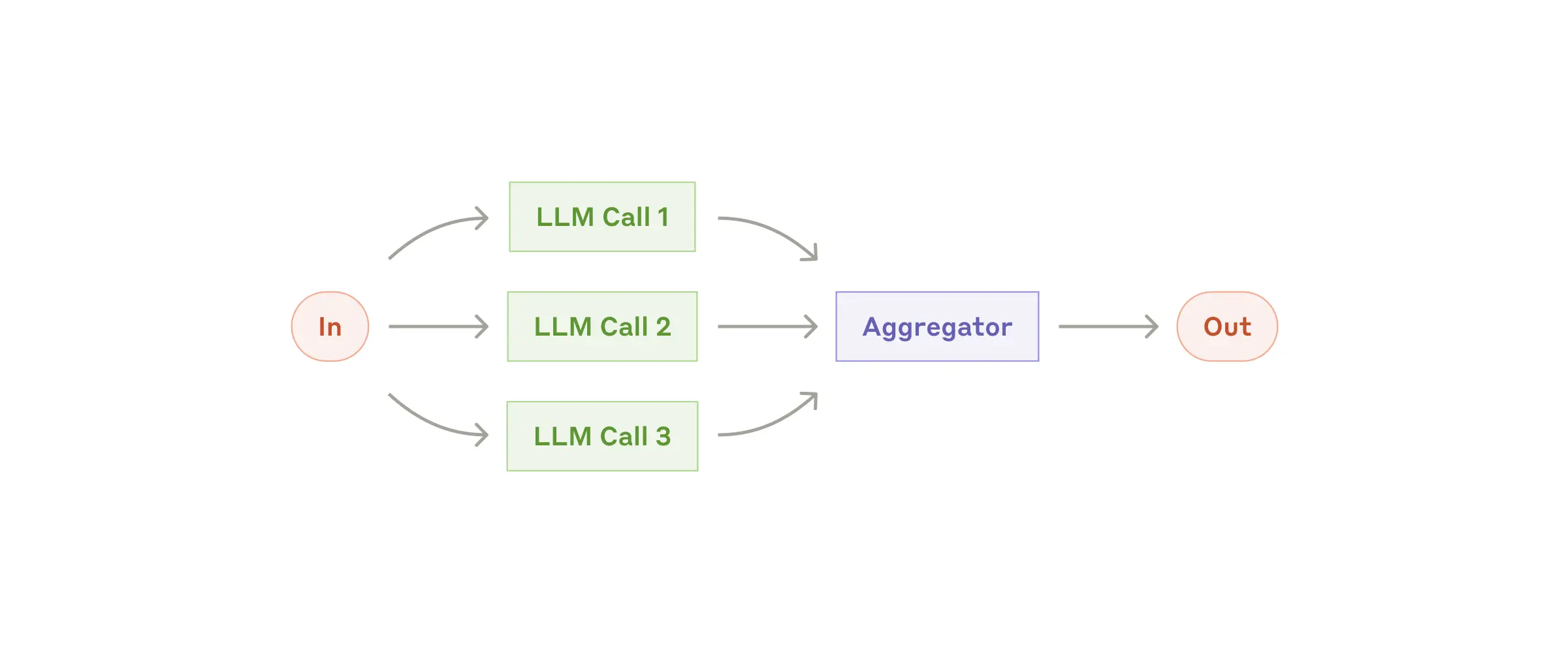

### [Parallel](src/mcp_agent/workflows/parallel/parallel_llm.py)

Fan-out tasks to multiple sub-agents and fan-in the results. Each subtask is an AugmentedLLM, as is the overall Parallel workflow, meaning each subtask can optionally be a more complex workflow itself.

> [!NOTE]
>
> **[Link to full example](examples/workflows/workflow_parallel/main.py)**

<details>

<summary>Example</summary>

```python
from mcp_agent.agents.agent import Agent
from mcp_agent.workflows.llm.augmented_llm import RequestParams
from mcp_agent.workflows.llm.augmented_llm_openai import OpenAIAugmentedLLM
from mcp_agent.workflows.parallel.parallel_llm import ParallelLLM

proofreader = Agent(name="proofreader", instruction="Review grammar...")
fact_checker = Agent(name="fact_checker", instruction="Check factual consistency...")
style_enforcer = Agent(name="style_enforcer", instruction="Enforce style guidelines...")

grader = Agent(name="grader", instruction="Combine feedback into a structured report.")

parallel = ParallelLLM(
    fan_in_agent=grader,
    fan_out_agents=[proofreader, fact_checker, style_enforcer],
    llm_factory=OpenAIAugmentedLLM,
)

result = await parallel.generate_str("Student short story submission: ...", RequestParams(model="gpt-4o"))
```

</details>
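
The fan-out/fan-in idea itself can be sketched with plain `asyncio`, independent of mcp-agent. The reviewer coroutines and `run_parallel` below are hypothetical names used only for illustration; a real workflow would call LLM-backed agents instead of these stubs.

```python
import asyncio


async def proofreader(text: str) -> str:
    # Stub reviewer: a real sub-agent would call an LLM.
    return f"grammar: checked {len(text.split())} words"


async def fact_checker(text: str) -> str:
    return "facts: no claims flagged"


async def grader(feedback: list) -> str:
    # Fan-in: combine every reviewer's output into one report.
    return " | ".join(feedback)


async def run_parallel(text: str, fan_out: list, fan_in) -> str:
    # Fan-out: start all reviewers concurrently; gather preserves input order.
    feedback = await asyncio.gather(*(worker(text) for worker in fan_out))
    return await fan_in(list(feedback))
```

Since `run_parallel` is itself an awaitable that maps text to text, it could be passed as one of the `fan_out` workers of another `run_parallel` call, which mirrors how a subtask can be a more complex workflow.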

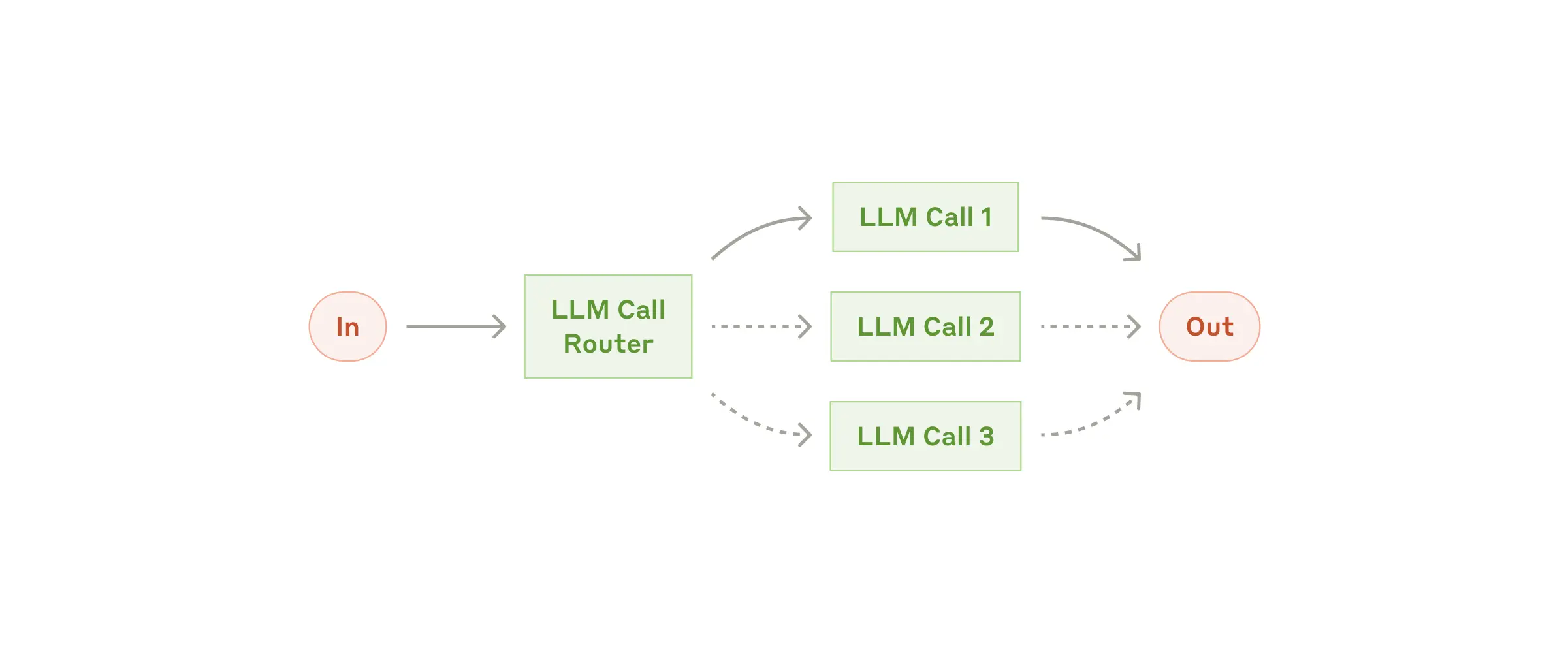

### [Router](src/mcp_agent/workflows/router/)

Given an input, route to the `top_k` most relevant categories. A category can be an Agent, an MCP server or a regular function.

mcp-agent provides several router implementations, including:

- [`EmbeddingRouter`](src/mcp_agent/workflows/router/router_embedding.py): uses embedding models for classification

- [`LLMRouter`](src/mcp_agent/workflows/router/router_llm.py): uses LLMs for classification

> [!NOTE]
>
> **[Link to full example](examples/workflows/workflow_router/main.py)**

<details>

<summary>Example</summary>

```python
def print_hello_world():
    print("Hello, world!")

finder_agent = Agent(name="finder", server_names=["fetch", "filesystem"])
writer_agent = Agent(name="writer", server_names=["filesystem"])

llm = OpenAIAugmentedLLM()
router = LLMRouter(
    llm=llm,
    agents=[finder_agent, writer_agent],
    functions=[print_hello_world],
)

results = await router.route( # Also available: route_to_agent, route_to_server
    request="Find and print the contents of README.md verbatim",
    top_k=1
)

chosen_agent = results[0].result
async with chosen_agent:
    ...
```

</details>
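
As a rough illustration of `top_k` routing, the sketch below scores each category against the request and returns the best matches. The word-overlap scorer is a deliberately crude stand-in for an LLM or embedding model, and `RouteResult`, `overlap_score`, and `route` are hypothetical names for this sketch rather than the library's API.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class RouteResult:
    result: Any   # the chosen category (agent, server name, or function)
    score: float  # higher means more relevant


def overlap_score(request: str, description: str) -> float:
    # Fraction of the description's words that also appear in the request.
    request_words = set(request.lower().split())
    description_words = set(description.lower().split())
    return len(request_words & description_words) / max(len(description_words), 1)


def route(request: str, categories: list, top_k: int = 1) -> list:
    # categories is a list of (category, description) pairs.
    scored = [RouteResult(cat, overlap_score(request, desc)) for cat, desc in categories]
    scored.sort(key=lambda r: r.score, reverse=True)  # stable: ties keep input order
    return scored[:top_k]


def print_hello_world() -> str:
    return "Hello, world!"


CATEGORIES = [
    ("finder", "find read files"),
    ("writer", "write files"),
    (print_hello_world, "print hello world greeting"),
]
```

Calling `route("find and read the README", CATEGORIES, top_k=1)` selects the `"finder"` category here, since two of its three description words occur in the request.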

### [IntentClassifier](src/mcp_agent/workflows/intent_classifier/)

A close sibling of Router, the Intent Classifier pattern identifies the `top_k` Intents that most closely match a given input.

Just like a Router, mcp-agent provides both an [embedding](src/mcp_agent/workflows/intent_classifier/intent_classifier_embedding.py) and [LLM-based](src/mcp_agent/workflows/intent_classifier/intent_classifier_llm.py) intent classifier.
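
An embedding-based classifier can be sketched as a nearest-example search under cosine similarity. The bag-of-words `embed` function below is a toy assumption standing in for a real embedding model, and `classify` is a hypothetical helper, not the mcp-agent interface.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy bag-of-words "embedding"; a real classifier would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def classify(request: str, intents: dict, top_k: int = 1) -> list:
    # intents maps an intent name to a list of example phrases.
    query = embed(request)
    # Score each intent by its best-matching example phrase.
    scores = {
        name: max(cosine(query, embed(example)) for example in examples)
        for name, examples in intents.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_k]
```

Each entry in the returned list is an `(intent_name, similarity)` pair, so callers can apply their own confidence threshold before acting on the top match.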

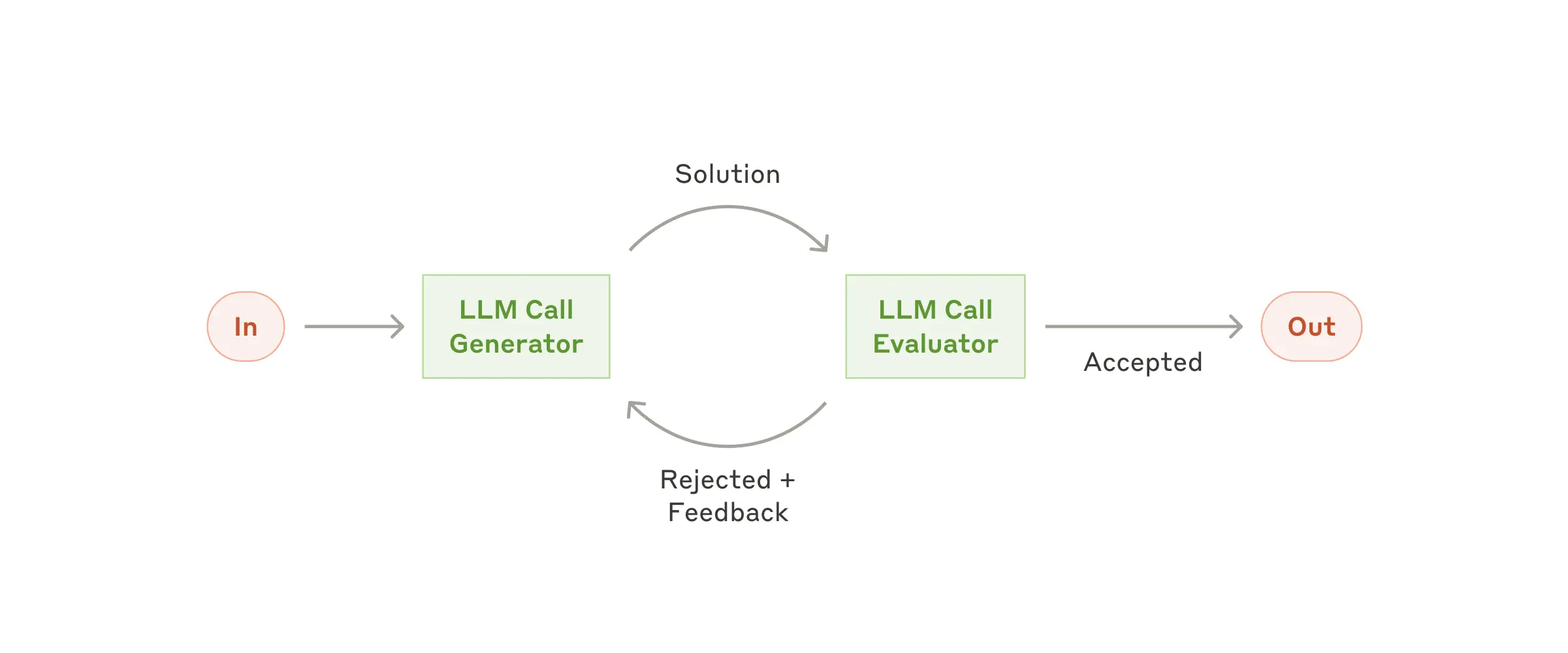

### [Evaluator-Optimizer](src/mcp_agent/workflows/evaluator_optimizer/evaluator_optimizer.py)

One LLM (the “optimizer”) refines a response while another (the “evaluator”) critiques it, repeating until the response meets a quality criterion.

> [!NOTE]
>
> **[Link to full example](examples/workflows/workflow_evaluator_optimizer/main.py)**

<details>

<summary>Example</summary>

```python
optimizer = Agent(name="cover_letter_writer", server_names=["fetch"], instruction="Generate a cover letter ...")
evaluator = Agent(name="critiquer", instruction="Evaluate clarity, specificity, relevance...")

eo_llm = EvaluatorOptimizerLLM(
    optimizer=optimizer,
    evaluator=evaluator,
    llm_factory=OpenAIAugmentedLLM,
    min_rating=QualityRating.EXCELLENT, # Keep iterating until the minimum quality bar is reached
)

result = await eo_llm.generate_str("Write a job cover letter for an AI framework developer role at LastMile AI.")
print("Final refined cover letter:", result)
```

</details>
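
The control flow behind this pattern is a bounded refine-and-rate loop. The sketch below shows that loop with stub functions; the `Rating` scale, `optimize`, `evaluate`, and the `max_iterations` cap are assumptions made for illustration and may differ from the library's own implementation.

```python
from enum import IntEnum


class Rating(IntEnum):
    # Hypothetical scale for this sketch; ordered so ratings can be compared.
    POOR = 0
    FAIR = 1
    GOOD = 2
    EXCELLENT = 3


def optimize(draft: str, feedback: str) -> str:
    # Stand-in optimizer: "improves" the draft by appending one revision marker.
    return draft + "!"


def evaluate(draft: str):
    # Stand-in evaluator: the rating grows with the number of revisions.
    rating = Rating(min(draft.count("!"), Rating.EXCELLENT))
    return rating, f"rated {rating.name}"


def refine(task: str, min_rating: Rating, max_iterations: int = 10):
    draft = task
    rating, feedback = evaluate(draft)
    iterations = 0
    # Keep refining until the quality bar is met or the iteration budget runs out.
    while rating < min_rating and iterations < max_iterations:
        draft = optimize(draft, feedback)
        rating, feedback = evaluate(draft)
        iterations += 1
    return draft, rating, iterations
```

The iteration cap matters in practice: without it, an evaluator that never awards the minimum rating would keep the loop running indefinitely.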

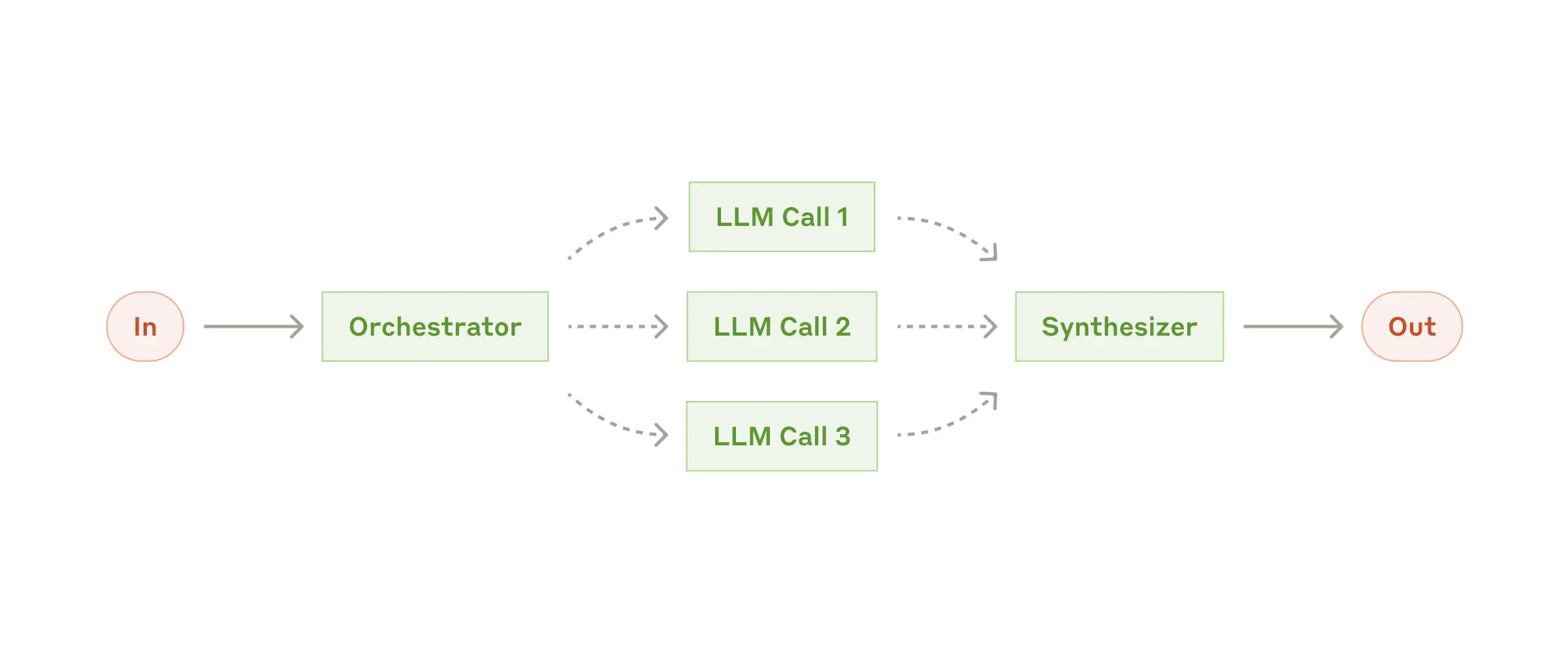

### [Orchestrator-workers](src/mcp_agent/workflows/orchestrator/orchestrator.py)

A higher-level LLM generates a plan, assigns its steps to sub-agents, and synthesizes the results.

The Orchestrator workflow automatically parallelizes steps that can be done in parallel, and blocks on dependencies.

> [!NOTE]
>
> **[Link to full example](examples/workflows/workflow_orchestrator_worker/main.py)**

<details>

<summary>Example</summary>

```python
finder_agent = Agent(name="finder", server_names=["fetch", "filesystem"])
writer_agent = Agent(name="writer", server_names=["filesystem"])
proofreader = Agent(name="proofreader", ...)
fact_checker = Agent(name="fact_checker", ...)
style_enforcer = Agent(name="style_enforcer", instruction="Use APA style guide from ...", server_names=["fetch"])

orchestrator = Orchestrator(
    llm_factory=OpenAIAugmentedLLM, # Provider matches the gpt-4o model requested below
    available_agents=[finder_agent, writer_agent, proofreader, fact_checker, style_enforcer],
)

task = "Load short_story.md, evaluate it, produce a graded_report.md with multiple feedback aspects."
result = await orchestrator.generate_str(task, RequestParams(model="gpt-4o"))
print(result)
```

</details>
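
One way to picture "parallelize what can run in parallel, block on dependencies" is to execute a plan in waves. The sketch below assumes the plan is already given as a mapping from each step to the steps it depends on; `run_step` and `execute_plan` are hypothetical helpers, and in the real workflow the plan would come from the planner LLM.

```python
import asyncio


async def run_step(name: str) -> str:
    await asyncio.sleep(0)  # placeholder for a sub-agent call
    return f"{name} done"


async def execute_plan(plan: dict):
    """plan maps each step name to the set of steps it depends on."""
    results = {}
    waves = []
    remaining = dict(plan)
    while remaining:
        # A step is ready once all of its dependencies have results.
        ready = sorted(step for step, deps in remaining.items() if deps.issubset(results))
        if not ready:
            raise ValueError("plan has a dependency cycle or an unknown dependency")
        # Steps in the same wave do not depend on each other, so run them concurrently.
        outputs = await asyncio.gather(*(run_step(step) for step in ready))
        results.update(zip(ready, outputs))
        waves.append(ready)
        for step in ready:
            del remaining[step]
    return results, waves
```

For a plan where `proofread` and `fact_check` both depend on `load`, and `report` depends on both, this produces three waves, with the two review steps sharing the middle one.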

### [Swarm](src/mcp_agent/workflows/swarm/swarm.py)

OpenAI has an experimental multi-agent pattern called [Swarm](https://github.com/openai/swarm); mcp-agent provides a model-agnostic reference implementation of it.

<img src="https://github.com/openai/swarm/blob/main/assets/swarm_diagram.png?raw=true" width=500 />

The mcp-agent Swarm pattern works seamlessly with MCP servers, and is exposed as an `AugmentedLLM`, allowing for composability with other patterns above.

> [!NOTE]
>
> **[Link to full example](examples/workflows/workflow_swarm/main.py)**

<details>

<summary>Example</summary>

```python

triage_agent = SwarmAgent(...)

flight_mod_agent = SwarmAgent(...)

lost_baggage_agent = SwarmAgent(...)

# The triage agent decides whether to route to flight_mod_agent or lost_baggage_agent

swarm = AnthropicSwarm(agent=triage_agent, context_variables={...})

test_input = "My bag was not delivered!"

result = await swarm.generate_str(test_input)

print("Result:", result)

```

</details>
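
The core mechanic of the Swarm pattern is the handoff: an agent either answers or passes control to another agent. The sketch below shows that loop without any LLM involved; `ToyAgent`, `run_swarm`, and the keyword-based triage rule are hypothetical and only illustrate the control flow.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToyAgent:
    name: str
    # Returns the next agent to hand off to, or None to answer directly.
    handoff: Optional[Callable] = None

    def answer(self, message: str) -> str:
        return f"[{self.name}] handling: {message}"


def run_swarm(start: ToyAgent, message: str, max_handoffs: int = 5):
    agent, path = start, [start.name]
    for _ in range(max_handoffs):
        next_agent = agent.handoff(message) if agent.handoff else None
        if next_agent is None:
            break  # the current agent keeps the conversation
        agent = next_agent
        path.append(agent.name)
    return agent.answer(message), path


lost_baggage = ToyAgent("lost_baggage")
flight_mod = ToyAgent("flight_modification")


def triage_handoff(message: str) -> ToyAgent:
    # Stand-in for an LLM decision: route on a keyword.
    return lost_baggage if "bag" in message.lower() else flight_mod


triage = ToyAgent("triage", handoff=triage_handoff)
```

The `max_handoffs` bound is a design choice for this sketch: it prevents two agents from transferring to each other forever.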

## Advanced

### Composability

An example of composability is using an [Evaluator-Optimizer](#evaluator-optimizer) workflow as the planner LLM inside

the [Orchestrator](#orchestrator-workers) workflow. Generating a high-quality plan to execute is important for robust behavior, and an evaluator-optimizer can help ensure that.

Doing so is seamless in mcp-agent, because each workflow is implemented as an `AugmentedLLM`.

<details>

<summary>Example</summary>

```python
optimizer = Agent(name="plan_optimizer", server_names=[...], instruction="Generate a plan given an objective ...")
evaluator = Agent(name="plan_evaluator", instruction="Evaluate logic, ordering and precision of plan...")

planner_llm = EvaluatorOptimizerLLM(
    optimizer=optimizer,
    evaluator=evaluator,
    llm_factory=OpenAIAugmentedLLM,
    min_rating=QualityRating.EXCELLENT,
)

orchestrator = Orchestrator(
    llm_factory=AnthropicAugmentedLLM,
    available_agents=[finder_agent, writer_agent, proofreader, fact_checker, style_enforcer],
    planner=planner_llm # It's that simple
)

...
```

</details>

### Signaling and Human Input

**Signaling**: The framework can pause/resume tasks. The agent or LLM might “signal” that it needs user input, so the workflow awaits. A developer may signal during a workflow to seek approval or review before continuing with a workflow.

**Human Input**: If an Agent has a `human_input_callback`, the LLM can call a `__human_input__` tool to request user input mid-workflow.

<details>

<summary>Example</summary>

The [Swarm example](examples/workflows/workflow_swarm/main.py) shows this in action.

```python
from mcp_agent.human_input.handler import console_input_callback

lost_baggage = SwarmAgent(
    name="Lost baggage traversal",
    instruction=lambda context_variables: f"""
        {
        FLY_AIR_AGENT_PROMPT.format(
            customer_context=context_variables.get("customer_context", "None"),
            flight_context=context_variables.get("flight_context", "None"),
        )
    }\n Lost baggage policy: policies/lost_baggage_policy.md""",
    functions=[
        escalate_to_agent,
        initiate_baggage_search,
        transfer_to_triage,
        case_resolved,
    ],
    server_names=["fetch", "filesystem"],
    human_input_callback=console_input_callback, # Request input from the console
)
```

</details>
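
The pause/resume behaviour described under Signaling can be sketched with an `asyncio` future that a workflow awaits and another party resolves. `SignalBus`, `approval_workflow`, and `demo_signal` are hypothetical names for this illustration; the framework's own signal mechanism may be structured differently.

```python
import asyncio


class SignalBus:
    """Hypothetical in-memory signal bus: workflows wait on named signals."""

    def __init__(self) -> None:
        self._waiters = {}

    async def wait_for(self, name: str):
        # Pause the caller until someone sends this signal.
        future = asyncio.get_running_loop().create_future()
        self._waiters[name] = future
        return await future

    def send(self, name: str, payload) -> None:
        # Resume the paused workflow with the payload.
        self._waiters.pop(name).set_result(payload)


async def approval_workflow(bus: SignalBus, events: list) -> str:
    events.append("draft ready")
    approval = await bus.wait_for("approval")  # workflow is suspended here
    events.append(f"resumed with {approval}")
    return "published" if approval == "yes" else "discarded"


async def demo_signal():
    bus, events = SignalBus(), []
    task = asyncio.create_task(approval_workflow(bus, events))
    await asyncio.sleep(0)  # let the workflow run until it pauses
    events.append("human reviews")
    bus.send("approval", "yes")
    return await task, events
```

In this sketch the "human" is simulated by `demo_signal`; in an interactive setting the `send` call would be triggered by a callback that collects real user input.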

### App Config

Create an [`mcp_agent.config.yaml`](/schema/mcp-agent.config.schema.json) and a gitignored [`mcp_agent.secrets.yaml`](./examples/basic/mcp_basic_agent/mcp_agent.secrets.yaml.example) to define MCP app configuration. This controls logging, execution, LLM provider APIs, and MCP server configuration.

### MCP server management

mcp-agent makes it trivial to connect to MCP servers. Create an [`mcp_agent.config.yaml`](/schema/mcp-agent.config.schema.json) to define server configuration under the `mcp` section:

```yaml
mcp:
  servers:
    fetch:
      command: "uvx"
      args: ["mcp-server-fetch"]
      description: "Fetch content at URLs from the world wide web"
```

#### [`gen_client`](src/mcp_agent/mcp/gen_client.py)

Manage the lifecycle of an MCP server within an async context manager:

```python
from mcp_agent.mcp.gen_client import gen_client

async with gen_client("fetch") as fetch_client:
    # Fetch server is initialized and ready to use
    result = await fetch_client.list_tools()

# Fetch server is automatically disconnected/shutdown
```

The gen_client function makes it easy to spin up connections to MCP servers.
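
The lifecycle guarantee of an async context manager (set up on entry, tear down on exit, even on errors) can be seen in a small standalone sketch. `FakeServer` and `toy_client` are hypothetical stand-ins and do not reflect how `gen_client` is implemented internally.

```python
import asyncio
from contextlib import asynccontextmanager


class FakeServer:
    """Hypothetical stand-in for an MCP server connection."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.connected = False

    async def list_tools(self) -> list:
        if not self.connected:
            raise RuntimeError("server is not connected")
        return [f"{self.name}_tool"]


@asynccontextmanager
async def toy_client(name: str):
    server = FakeServer(name)
    server.connected = True  # setup: start and initialize the server
    try:
        yield server
    finally:
        server.connected = False  # teardown runs even if the body raises


async def demo_client():
    async with toy_client("fetch") as client:
        tools = await client.list_tools()
    # Outside the block the connection has been closed again.
    return tools, client.connected
```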

#### Persistent server connections

In many cases, you want an MCP server to stay online for persistent use (e.g. in a multi-step tool use workflow).

For persistent connections, use:

- [`connect`](src/mcp_agent/mcp/gen_client.py) and [`disconnect`](src/mcp_agent/mcp/gen_client.py)

```python
from mcp_agent.mcp.gen_client import connect, disconnect

fetch_client = None
try:
    # connect and disconnect are awaited here on the assumption that they are coroutines
    fetch_client = await connect("fetch")
    result = await fetch_client.list_tools()
finally:
    await disconnect("fetch")
```

- [`MCPConnectionManager`](src/mcp_agent/mcp/mcp_connection_manager.py)

For even more fine-grained control over server connections, you can use the `MCPConnectionManager`.

<details>

<summary>Example</summary>

```python

from mcp_agent.context import get_current_context
from mcp_agent.mcp.mcp_connection_manager import MCPConnectionManager

context = get_current_context()
connection_manager = MCPConnectionManager(context.server_registry)

async with connection_manager:
    fetch_client = await connection_manager.get_server("fetch")  # Initializes fetch server
    result = await fetch_client.list_tools()
    fetch_client2 = await connection_manager.get_server("fetch")  # Reuses same server connection

# All servers managed by connection manager are automatically disconnected/shut down

```

</details>
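
The reuse behaviour described in the example can be pictured as a cache keyed by server name. This is a simplified sketch under that assumption; `ToyConnectionManager` is hypothetical and does not reflect how `MCPConnectionManager` is actually implemented.

```python
import asyncio


class ToyConnectionManager:
    """Illustrative cache of named connections; not the real MCPConnectionManager."""

    def __init__(self) -> None:
        self._connections: dict[str, object] = {}
        self.started: list[str] = []
        self.closed: list[str] = []

    async def get_server(self, name: str) -> object:
        # Start the server only on first request; later calls reuse the cached entry
        if name not in self._connections:
            self.started.append(name)
            self._connections[name] = object()
        return self._connections[name]

    async def __aenter__(self) -> "ToyConnectionManager":
        return self

    async def __aexit__(self, exc_type, exc, tb) -> None:
        # Leaving the block shuts down every connection the manager opened
        self.closed.extend(self._connections)
        self._connections.clear()


async def reuse_demo() -> ToyConnectionManager:
    manager = ToyConnectionManager()
    async with manager:
        first = await manager.get_server("fetch")
        second = await manager.get_server("fetch")
        assert first is second  # same underlying connection
    return manager


manager = asyncio.run(reuse_demo())
print(manager.started, manager.closed)
```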

#### MCP Server Aggregator

[`MCPAggregator`](src/mcp_agent/mcp/mcp_aggregator.py) acts as a "server-of-servers".

It provides a single MCP server interface for interacting with multiple MCP servers.

This allows you to expose tools from multiple servers to LLM applications.

<details>

<summary>Example</summary>

```python

from mcp_agent.mcp.mcp_aggregator import MCPAggregator

aggregator = await MCPAggregator.create(server_names=["fetch", "filesystem"])

async with aggregator:
    # combined list of tools exposed by 'fetch' and 'filesystem' servers
    tools = await aggregator.list_tools()

    # namespacing -- invokes the 'fetch' server to call the 'fetch' tool
    fetch_result = await aggregator.call_tool(
        name="fetch-fetch",
        arguments={"url": "https://www.anthropic.com/research/building-effective-agents"},
    )

    # no namespacing -- first server in the aggregator exposing that tool wins
    read_file_result = await aggregator.call_tool(name="read_file", arguments={})

```

</details>
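
The two lookup rules in the example (namespaced names, and "first server wins" for bare names) can be sketched in plain Python. The `resolve_tool` function is a hypothetical illustration; the `-` separator is inferred from the `fetch-fetch` name above, and the real aggregator's lookup may differ.

```python
def resolve_tool(
    name: str, server_tools: dict[str, list[str]], sep: str = "-"
) -> tuple[str, str]:
    """Return (server, tool) for a requested tool name."""
    # Namespaced form: "<server><sep><tool>", e.g. "fetch-fetch"
    for server, tools in server_tools.items():
        prefix = server + sep
        if name.startswith(prefix) and name[len(prefix):] in tools:
            return server, name[len(prefix):]
    # Bare form: the first server (in registration order) exposing the tool wins
    for server, tools in server_tools.items():
        if name in tools:
            return server, name
    raise KeyError(f"No server exposes a tool named '{name}'")


# Hypothetical tool inventory; both servers expose a tool called "fetch"
server_tools = {
    "fetch": ["fetch"],
    "filesystem": ["read_file", "fetch"],
}

print(resolve_tool("fetch-fetch", server_tools))
print(resolve_tool("read_file", server_tools))
print(resolve_tool("filesystem-fetch", server_tools))
```

Namespaced names are the safer choice when two servers might expose tools with the same name.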

## Contributing

We welcome any and all kinds of contributions. Please see the [CONTRIBUTING guidelines](./CONTRIBUTING.md) to get started.

### Special Mentions

There have already been incredible community contributors who are driving this project forward:

- [Shaun Smith (@evalstate)](https://github.com/evalstate) -- who has been leading the charge on countless complex improvements, both to `mcp-agent` and generally to the MCP ecosystem.

- [Jerron Lim (@StreetLamb)](https://github.com/StreetLamb) -- who has contributed countless hours, excellent examples, and great ideas to the project.

- [Jason Summer (@jasonsum)](https://github.com/jasonsum) -- for identifying several issues and adapting his Gmail MCP server to work with mcp-agent.

## Roadmap

We will be adding a detailed roadmap (ideally driven by your feedback). The current set of priorities includes:

- **Durable Execution** -- allow workflows to pause/resume and serialize state so they can be replayed or be paused indefinitely. We are working on integrating [Temporal](./src/mcp_agent/executor/temporal.py) for this purpose.

- **Memory** -- Add support for long-term memory.

- **Streaming** -- Support streaming listeners for iterative progress

- **Additional MCP capabilities** -- Expand beyond tool calls to support:
  - Resources
  - Prompts
  - Notifications

## FAQs

### What are the core benefits of using mcp-agent?

mcp-agent provides a streamlined approach to building AI agents using capabilities exposed by **MCP** (Model Context Protocol) servers.

MCP is quite low-level, and this framework handles the mechanics of connecting to servers, working with LLMs, handling external signals (like human input) and supporting persistent state via durable execution. That lets you, the developer, focus on the core business logic of your AI application.

Core benefits:

- 🤝 **Interoperability**: ensures that any tool exposed by any number of MCP servers can seamlessly plug in to your agents.

- ⛓️ **Composability & Customizability**: Implements well-defined workflows, but in a composable way that enables compound workflows, and allows full customization across model provider, logging, orchestrator, etc.

- 💻 **Programmatic control flow**: Keeps things simple as developers just write code instead of thinking in graphs, nodes and edges. For branching logic, you write `if` statements. For cycles, use `while` loops.

- 🖐️ **Human Input & Signals**: Supports pausing workflows for external signals, such as human input, which are exposed as tool calls an Agent can make.
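
To illustrate the "programmatic control flow" point, here is a small sketch in which a loop and a branch are ordinary Python statements. The `fake_llm` coroutine is a hypothetical stand-in for a model call and is not part of mcp-agent.

```python
import asyncio


async def fake_llm(prompt: str) -> str:
    """Stand-in for a model call: 'improves' a draft by appending a marker."""
    return prompt + "+"


async def refine(draft: str, target_len: int, max_rounds: int = 5) -> tuple[str, int]:
    rounds = 0
    # Cycle: a plain while loop instead of a graph edge back to an earlier node
    while len(draft) < target_len and rounds < max_rounds:
        draft = await fake_llm(draft)
        rounds += 1
    # Branch: a plain if statement instead of a conditional edge
    if len(draft) < target_len:
        draft += " (needs human review)"
    return draft, rounds


print(asyncio.run(refine("ab", target_len=4)))
```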

### Do you need an MCP client to use mcp-agent?

No, you can use mcp-agent anywhere, since it handles MCPClient creation for you. This allows you to leverage MCP servers outside of MCP hosts like Claude Desktop.

Here are all the ways you can set up your mcp-agent application:

#### MCP-Agent Server

You can expose mcp-agent applications as MCP servers themselves (see [example](./examples/basic/mcp_agent_server)), allowing MCP clients to interface with sophisticated AI workflows using the standard tools API of MCP servers. This is effectively a server-of-servers.

#### MCP Client or Host

You can embed mcp-agent in an MCP client directly to manage the orchestration across multiple MCP servers.

#### Standalone

You can use mcp-agent applications in a standalone fashion (i.e. they aren't part of an MCP client). The [`examples`](/examples/) are all standalone applications.

### Tell me a fun fact

I debated naming this project _silsila_ (سلسلہ), which means chain of events in Urdu. mcp-agent is more matter-of-fact, but there's still an easter egg in the project paying homage to silsila.

## Code Examples

The MCP-Agent framework provides multiple ways to create and run AI agents with MCP server connections:

### Basic Agent Example

```python

import asyncio

from mcp_agent.app import MCPApp
from mcp_agent.agents.agent import Agent
from mcp_agent.workflows.llm.augmented_llm_openai import OpenAIAugmentedLLM

app = MCPApp(name="my_agent")


async def main():
    async with app.run() as agent_app:
        # Create an agent with filesystem and fetch capabilities
        agent = Agent(
            name="finder",
            instruction="You help find and analyze files and web content",
            server_names=["fetch", "filesystem"],
        )

        async with agent:
            # Attach an LLM to the agent
            llm = await agent.attach_llm(OpenAIAugmentedLLM)

            # Generate responses using MCP tools
            result = await llm.generate_str(
                message="Find and read the config file"
            )
            print(result)


if __name__ == "__main__":
    # Run the async entry point
    asyncio.run(main())

```

### Router-Based Workflow

```python

from mcp_agent.workflows.router.router_llm_openai import OpenAILLMRouter
from mcp_agent.agents.agent import Agent

# Create specialized agents
finder_agent = Agent(
    name="finder",
    instruction="Find and read files",
    server_names=["filesystem"],
)

writer_agent = Agent(
    name="writer",
    instruction="Write content to files",
    server_names=["filesystem"],
)

# Router automatically selects the best agent
router = OpenAILLMRouter(agents=[finder_agent, writer_agent])

# Route a request to the most appropriate agent
results = await router.route_to_agent(
    request="Read the config file", top_k=1
)
selected_agent = results[0].result

```
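
To show what `top_k` selection means in isolation, the sketch below ranks agents with a simple word-overlap score. This is a deliberately naive stand-in for the LLM-based decision the router makes; the `route` function and the scoring rule are hypothetical.

```python
def route(request: str, agents: dict[str, str], top_k: int = 1) -> list[str]:
    """Rank agent names by word overlap between the request and each instruction."""
    words = set(request.lower().split())
    scored = [
        (len(words & set(instruction.lower().split())), name)
        for name, instruction in agents.items()
    ]
    # Highest score first; sorted() is stable, so ties keep registration order
    ranked = sorted(scored, key=lambda pair: -pair[0])
    return [name for _, name in ranked[:top_k]]


# Agent names mapped to their instructions, mirroring the example above
agents = {
    "finder": "Find and read files",
    "writer": "Write content to files",
}

print(route("Read the config file", agents, top_k=1))
```

With `top_k=1` only the single best match is returned; a larger value returns a ranked shortlist.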

### Configuration

MCP-Agent uses YAML configuration files (`mcp_agent.config.yaml`):

```yaml

execution_engine: "asyncio"

mcp:
  servers:
    filesystem:
      command: "npx"
      args: ["-y", "@modelcontextprotocol/server-filesystem"]
    fetch:
      command: "uvx"
      args: ["mcp-server-fetch"]

openai:
  default_model: "gpt-4o-mini"

```

## Function and Class Definitions

*Note: Test files, example files, and script files are excluded from this section.*

### src/mcp_agent/agents/agent.py

**Class: `Agent`**

- **Inherits from**: BaseModel

- **Description**: An Agent is an entity that has access to a set of MCP servers and can interact with them. Each agent should have a purpose defined by its instruction.

- **Attributes**:

- `name` (str): Agent name.

- `instruction` (str | Callable[[Dict], str]) = 'You are a helpful agent.': Instruction for the agent. This can be a string or a callable that takes a dictionary and returns a string. The callable can be used to generate dynamic instructions based on the context.

- `server_names` (List[str]) = Field(default_factory=list): List of MCP server names that the agent can access.

- `functions` (List[Callable]) = Field(default_factory=list): List of local functions that the agent can call.

- `context` (Optional[Context]) = None: The application context that the agent is running in.

- `connection_persistence` (bool) = True: Whether to persist connections to the MCP servers.

- `human_input_callback` (Optional[Callable]) = None: Callback function for requesting human input. Must match HumanInputCallback protocol.

- `llm` (Optional[Any]) = None: The LLM instance that is attached to the agent. This is set in attach_llm method.

- `initialized` (bool) = False: Whether the agent has been initialized. This is set to True after agent.initialize() is completed.

- `model_config` = ConfigDict(arbitrary_types_allowed=True, extra='allow')

- `_function_tool_map` (Dict[str, FastTool]) = PrivateAttr(default_factory=dict)

- `_namespaced_tool_map` (Dict[str, NamespacedTool]) = PrivateAttr(default_factory=dict)

- `_server_to_tool_map` (Dict[str, List[NamespacedTool]]) = PrivateAttr(default_factory=dict)

- `_namespaced_prompt_map` (Dict[str, NamespacedPrompt]) = PrivateAttr(default_factory=dict)

- `_server_to_prompt_map` (Dict[str, List[NamespacedPrompt]]) = PrivateAttr(default_factory=dict)

- `_agent_tasks` ('AgentTasks') = PrivateAttr(default=None)