# Getting Started with Groundlight


## Build Powerful Computer Vision Applications in Minutes

Welcome to Groundlight AI! This guide walks you through building your first computer vision application with our Python SDK.

No machine learning expertise required! Groundlight helps businesses across industries, from
[revolutionizing industrial quality control](https://www.groundlight.ai/blog/lkq-corporation-uses-groundlight-ai-to-revolutionize-quality-control-and-inspection)
and [monitoring workplace safety compliance](https://www.groundlight.ai/use-cases/ppe-detection-in-the-workplace)
to [optimizing inventory management](https://www.groundlight.ai/use-cases/inventory-monitoring-using-vision-ai).
Our human-in-the-loop technology delivers accurate results and keeps improving over time, making
sophisticated computer vision accessible to everyone.

Don't code? No problem! [Contact our team](mailto:support@groundlight.ai) and we'll build a custom solution tailored to your needs.

### Prerequisites


Before diving in, you'll need:

1. A [Groundlight account](https://dashboard.groundlight.ai/) (signing up is quick and easy!)
2. An API token from your [Groundlight dashboard](https://dashboard.groundlight.ai/reef/my-account/api-tokens). See our [API Tokens guide](/docs/getting-started/api-tokens) for details.
3. Python 3.9 or newer installed on your system.

### Setting Up Your Environment

Let's set up a clean Python environment for your Groundlight project! The Groundlight SDK is available on PyPI and can be installed with [pip](https://packaging.python.org/en/latest/tutorials/installing-packages/#use-pip-for-installing).

First, create a virtual environment to keep your Groundlight dependencies isolated from other Python projects:

```bash
python3 -m venv groundlight-env
```

On Windows, use `python` (or the `py` launcher) instead of `python3` if the `python3` command isn't available.

Now, activate your virtual environment:

```bash
# macOS / Linux
source groundlight-env/bin/activate
```

```powershell
# Windows
.\groundlight-env\Scripts\activate
```

With your environment ready, install the Groundlight SDK with a simple pip command:

```bash
pip install groundlight
```

Next, install [framegrab](https://github.com/groundlight/framegrab) with YouTube support. This library captures frames from
YouTube livestreams, webcams, and other video sources, which makes it easy to get started:

```bash
pip install "framegrab[youtube]"
```

The quotes keep shells such as zsh from treating the square brackets as a wildcard pattern.

:::tip Camera Support
Framegrab is versatile! It works with:

- Webcams and USB cameras
- RTSP streams (security cameras)
- Professional cameras (Basler USB/GigE)
- Depth cameras (Intel RealSense)
- Video files and streams (mp4, mov, mjpeg, avi)
- YouTube livestreams

This makes it perfect for quickly prototyping your computer vision applications!
:::

Need more options? See our detailed [installation guide](/docs/installation/) for advanced setup instructions.
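Before moving on, you can sanity-check your setup from Python. The script below is a minimal sketch, not a Groundlight tool: `check_env.py` and its helper functions are hypothetical names. Using only the standard library, it checks the Python 3.9 minimum from the prerequisites, whether a virtual environment is active, and whether the `groundlight` and `framegrab` packages are installed.

```python
"""check_env.py: a hypothetical sanity check for a Groundlight setup."""
import sys
from importlib import metadata
from typing import Iterable, List

MIN_PYTHON = (3, 9)  # minimum version from the Prerequisites section
REQUIRED_PACKAGES = ("groundlight", "framegrab")


def in_virtualenv() -> bool:
    # In a venv-style environment, sys.prefix points at the environment
    # while sys.base_prefix still points at the base interpreter.
    return sys.prefix != sys.base_prefix


def missing_packages(names: Iterable[str]) -> List[str]:
    """Return the distribution names that are not installed."""
    missing = []
    for name in names:
        try:
            metadata.version(name)
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


def main() -> int:
    ok = True
    if sys.version_info < MIN_PYTHON:
        found = sys.version.split()[0]
        print(f"Python {MIN_PYTHON[0]}.{MIN_PYTHON[1]}+ required, found {found}")
        ok = False
    if not in_virtualenv():
        print("Warning: no virtual environment appears to be active")
    for name in missing_packages(REQUIRED_PACKAGES):
        print(f"Missing package: {name}")
        ok = False
    print("Environment looks good!" if ok else "Please fix the issues above.")
    return 0 if ok else 1


if __name__ == "__main__":
    main()
```

Run it with `python check_env.py` from inside your activated environment. A missing-package message usually means the environment wasn't activated when you ran `pip install`.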

### Authentication

57 | | -In order to verify your identity while connecting to your custom ML models through our SDK, you’ll need to create an API token. |

58 | | - |

59 | | -1. Head over to [https://dashboard.groundlight.ai/](https://dashboard.groundlight.ai/) and create or log into your account |

60 | | - |

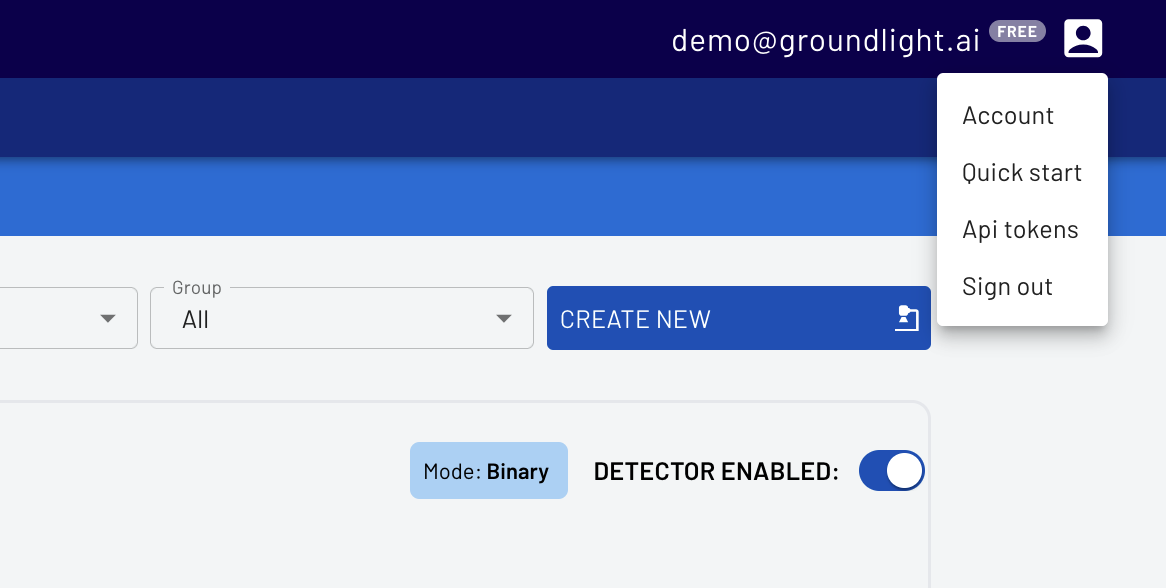

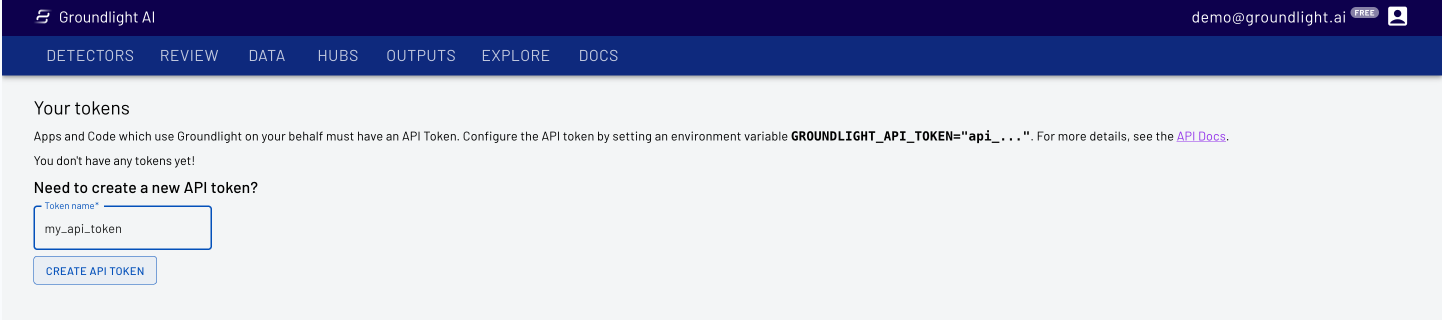

61 | | -2. Once in, click on your username in the upper right hand corner of your dashboard: |

62 | | - |

63 | | - |

64 | | - |

65 | | -3. Select API Tokens, then enter a name, like ‘personal-laptop-token’ for your api token. |

66 | | - |

67 | | - |

68 | | - |

69 | | -4. Copy the API Token for use in your code |

70 | | - |

71 | | -IMPORTANT: Keep your API token secure! Anyone who has access to it can impersonate you and will have access to your Groundlight data |

72 | | - |

73 | | - |

74 | | - ```bash |

75 | | - $env:GROUNDLIGHT_API_TOKEN="YOUR_API_TOKEN_HERE" |

76 | | - ``` |

77 | | -Or on Mac |

78 | | - ```bash |

79 | | - export GROUNDLIGHT_API_TOKEN="YOUR_API_TOKEN_HERE" |

80 | | - ``` |

81 | | - |

82 | | - |

83 | | -### Writing the code |

84 | | - |

85 | | -For your first and simple application you can build a binary detector, which is computer vision model where the answer will either be 'Yes' or 'No'. Groundlight AI will confirm if the thumb is facing up or down ("Is the thumb facing up?"). |

86 | | - |

87 | | -You can start using Groundlight using just your laptop camera, but you can also use a USB camera if you have one. |

88 | | - |

89 | | - ```python |

90 | | - import groundlight |

91 | | - import cv2 |

92 | | - from framegrab import FrameGrabber |

93 | | - import time |

94 | | - |

95 | | - gl = groundlight.Groundlight() |

96 | | - |

97 | | - detector_name = "trash_detector" |

98 | | - detector_query = "Is the trash can overflowing" |

99 | | - |

100 | | - detector = gl.get_or_create_detector(detector_name, detector_query) |

101 | | - |

102 | | - grabber = list(FrameGrabber.autodiscover().values())[0] |

103 | | - |

104 | | - WAIT_TIME = 5 |

105 | | - last_capture_time = time.time() - WAIT_TIME |

106 | | - |

107 | | - while True: |

108 | | - frame = grabber.grab() |

109 | | - |

110 | | - cv2.imshow('Video Feed', frame) |

111 | | - key = cv2.waitKey(30) |

112 | | - |

113 | | - if key == ord('q'): |

114 | | - break |

115 | | - # # Press enter to submit an image query |

116 | | - # elif key in (ord('\r'), ord('\n')): |

117 | | - # print(f'Asking question: {detector_query}') |

118 | | - # image_query = gl.submit_image_query(detector, frame) |

119 | | - # print(f'The answer is {image_query.result.label.value}') |

120 | | - |

121 | | - # # Press 'y' or 'n' to submit a label |

122 | | - # elif key in (ord('y'), ord('n')): |

123 | | - # if key == ord('y'): |

124 | | - # label = 'YES' |

125 | | - # else: |

126 | | - # label = 'NO' |

127 | | - # image_query = gl.ask_async(detector, frame, human_review="NEVER") |

128 | | - # gl.add_label(image_query, label) |

129 | | - # print(f'Adding label {label} for image query {image_query.id}') |

130 | | - |

131 | | - # Submit image queries in a timed loop |

132 | | - now = time.time() |

133 | | - if last_capture_time + WAIT_TIME < now: |

134 | | - last_capture_time = now |

135 | | - |

136 | | - print(f'Asking question: {detector_query}') |

137 | | - image_query = gl.submit_image_query(detector, frame) |

138 | | - print(f'The answer is {image_query.result.label.value}') |

139 | | - |

140 | | - grabber.release() |

141 | | - cv2.destroyAllWindows() |

142 | | - ``` |

143 | | - This code will take an image from your connected camera every minute and ask Groundlight a question in natural language, before printing out the answer. |

144 | | - |

145 | | - |

146 | | - |

147 | | -### Using your computer vision application |

148 | | - |

149 | | -Just like that, you have a complete computer vision application. You can change the code and configure a detector for your specific use case. Also, you can monitor and improve the performance of your detector at [https://dashboard.groundlight.ai/](https://dashboard.groundlight.ai/). Groundlight’s human-in-the-loop technology will monitor your image feed for unexpected changes and anomalies, and by verifying answers returned by Groundlight you can improve the process. At app.groundlight.ai, you can also set up text and email notifications, so you can be alerted when something of interest happens in your video stream. |

150 | | - |

151 | | - |

152 | | - |

153 | | -### If You're Looking for More: |

154 | | - |

155 | | -Now that you've built your first application, learn how to [write queries](https://code.groundlight.ai/python-sdk/docs/getting-started/writing-queries). |

156 | | - |

157 | | -Want to play around with sample applications built by Groundlight AI? Visit [Guides](https://www.groundlight.ai/guides) to build example applications, from detecting birds outside of your window to running Groundlight AI on a Raspberry Pi. |

Now set up your credentials so you can start making API calls. Groundlight uses API tokens to securely authenticate your requests.

If you don't have an API token yet, see our [API Tokens guide](/docs/getting-started/api-tokens) to create one.

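The SDK automatically looks for your token in the `GROUNDLIGHT_API_TOKEN` environment variable. Set it with:

```bash
# macOS / Linux
export GROUNDLIGHT_API_TOKEN='your-api-token'
```

```powershell
# Windows (PowerShell), current session only
$env:GROUNDLIGHT_API_TOKEN = "your-api-token"

# Windows, saved for future sessions (takes effect in newly opened terminals)
setx GROUNDLIGHT_API_TOKEN "your-api-token"
```

`export` and `$env:` only apply to the current terminal session. To keep the token across sessions, add the `export` line to your shell profile (for example `~/.bashrc` or `~/.zshrc`), or use `setx` on Windows and then open a new terminal.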

:::important API Tokens
Keep your API token secure! Anyone who has access to it can impersonate you and access your Groundlight data. Avoid hard-coding it in scripts or committing it to version control.
:::
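Because the SDK reads `GROUNDLIGHT_API_TOKEN` on its own, a missing token only shows up once your script tries to talk to the API. A small guard at the top of a script can fail fast with a clearer message, and a masking helper keeps the full secret out of logs. This is a minimal sketch; `get_api_token` and `mask_token` are hypothetical helpers, not part of the Groundlight SDK.

```python
import os
from typing import Mapping, Optional

TOKEN_ENV_VAR = "GROUNDLIGHT_API_TOKEN"


def get_api_token(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the API token from the environment, or raise a helpful error."""
    env = os.environ if env is None else env
    token = env.get(TOKEN_ENV_VAR, "").strip()
    if not token:
        raise RuntimeError(
            f"{TOKEN_ENV_VAR} is not set. Export it in this shell, "
            "or open a new terminal if you used setx on Windows."
        )
    return token


def mask_token(token: str, visible: int = 4) -> str:
    """Show only the last few characters so logs never contain the full secret."""
    if len(token) <= visible:
        return "*" * len(token)
    return "*" * (len(token) - visible) + token[-visible:]


if __name__ == "__main__":
    try:
        print("Using API token", mask_token(get_api_token()))
    except RuntimeError as err:
        print(err)
```

Passing `env` explicitly is optional; it defaults to the real environment but makes the check easy to test with a plain dictionary.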

### Call the Groundlight API

Call the Groundlight API by creating a `Detector` and submitting an `ImageQuery`. A `Detector` represents a specific
visual question you want answered, while an `ImageQuery` is a request to analyze one image with that question.

Groundlight is designed to give consistent, highly confident answers for similar images
(such as frames from the same camera) when the same question is asked repeatedly. This makes it well suited to
scenarios where you need reliable visual detection.

Here's how to use Groundlight to analyze an image from a livestream:

```python title="ask.py"
from framegrab import FrameGrabber
from groundlight import Groundlight, Detector, ImageQuery

# Reads your token from the GROUNDLIGHT_API_TOKEN environment variable
gl = Groundlight()
detector: Detector = gl.get_or_create_detector(
    name="eagle-detector",
    query="Is there an eagle visible?",
)

# Big Bear Bald Eagle Nest livestream
youtube_live_url = 'https://www.youtube.com/watch?v=B4-L2nfGcuE'

framegrab_config = {
    'input_type': 'youtube_live',
    'id': {'youtube_url': youtube_live_url},
}

# Grab a single frame; the `with` block releases the grabber afterwards
with FrameGrabber.create_grabber(framegrab_config) as grabber:
    frame = grabber.grab()
    if frame is None:
        raise RuntimeError("No frame captured")

# Ask the detector's question about this frame
iq: ImageQuery = gl.submit_image_query(detector=detector, image=frame)

# .value prints the plain label (e.g. "YES") rather than the enum's repr
print(f"{detector.query} -- Answer: {iq.result.label.value} with confidence={iq.result.confidence:.3f}\n")
print(iq)
```

Run the code with `python ask.py`. It submits a frame from the livestream to the Groundlight API and prints the result (your answer, confidence, and IDs will differ):

```
Is there an eagle visible? -- Answer: YES with confidence=0.988

ImageQuery(
    id='iq_2pL5wwlefaOnFNQx1X6awTOd119',
    query='Is there an eagle visible?',
    detector_id='det_2owcsT7XCsfFlu7diAKgPKR4BXY',
    result=BinaryClassificationResult(
        confidence=0.9884857543478209,
        label=<Label.YES: 'YES'>
    ),
    created_at=datetime.datetime(2025, 2, 25, 11, 5, 57, 38627, tzinfo=tzutc()),
    patience_time=30.0,
    confidence_threshold=0.9,
    type=<ImageQueryTypeEnum.image_query: 'image_query'>,
    result_type=<ResultTypeEnum.binary_classification: 'binary_classification'>,
    metadata=None
)
```
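The result carries a `label` and a `confidence`, and the output also shows the detector's `confidence_threshold` (0.9 here). If your application acts on answers, for example by raising an alert, you may want to act only on confident ones. Below is a minimal sketch of that check using plain values rather than SDK objects. `should_act` is a hypothetical helper, not part of the Groundlight SDK, and treating a missing (`None`) confidence as "not confident" is our own assumption.

```python
from typing import Optional


def should_act(
    label: str,
    confidence: Optional[float],
    threshold: float = 0.9,
    target: str = "YES",
) -> bool:
    """Return True only for a confident answer that matches the target label."""
    if confidence is None:
        return False  # assumption: no confidence value means "don't act yet"
    return label == target and confidence >= threshold


# A few sample answers: only the first is both "YES" and above the threshold
for label, confidence in [("YES", 0.988), ("YES", 0.62), ("NO", 0.97), ("YES", None)]:
    print(f"{label} @ {confidence}: act={should_act(label, confidence)}")
```

With the objects from `ask.py`, you might call it as `should_act(iq.result.label.value, iq.result.confidence)`.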

## What's Next?

**Amazing job!** You've just built your first computer vision application with Groundlight.
In just a few lines of code, you've created an eagle detector that analyzes frames from a live video stream!

### Supercharge Your Application

Take your application to the next level:

- **Monitor in real time** through the [Groundlight Dashboard](https://dashboard.groundlight.ai/): see your detections, review results, and track performance
- **Get instant alerts** when important events happen: [set up text and email notifications](/docs/guide/alerts) for critical detections
- **Improve continuously** with Groundlight's human-in-the-loop technology, which learns from your feedback
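`ask.py` analyzes a single frame. To keep watching a stream, you would typically grab and submit frames on an interval. The sketch below shows one way to structure that loop. `poll_stream` is a hypothetical helper (not part of Groundlight or framegrab); the grab and submit steps are passed in as functions so the loop can run without a camera or network access. The 5-second default interval is an arbitrary choice, and each submitted frame is a separate image query.

```python
"""A hypothetical helper for submitting frames on a fixed interval."""
import itertools
import time
from typing import Any, Callable, Optional


def poll_stream(
    grab_frame: Callable[[], Optional[Any]],
    submit: Callable[[Any], Any],
    on_result: Callable[[Any], None],
    interval_s: float = 5.0,
    max_iterations: Optional[int] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Grab and submit a frame, waiting `interval_s` seconds between attempts.

    Runs forever when `max_iterations` is None. Returns the number of
    frames that were actually submitted.
    """
    submitted = 0
    ticks = itertools.count() if max_iterations is None else range(max_iterations)
    for tick in ticks:
        if tick:
            sleep(interval_s)  # pause between attempts to limit API usage
        frame = grab_frame()
        if frame is None:
            continue  # dropped frame: try again on the next tick
        on_result(submit(frame))
        submitted += 1
    return submitted


# Hypothetical wiring with the objects from ask.py (needs the stream and an API token):
# with FrameGrabber.create_grabber(framegrab_config) as grabber:
#     poll_stream(
#         grab_frame=grabber.grab,
#         submit=lambda frame: gl.submit_image_query(detector=detector, image=frame),
#         on_result=lambda iq: print(iq.result.label.value, iq.result.confidence),
#     )

if __name__ == "__main__":
    # Offline demo with fake frames, so the sketch runs without a camera or token
    fake_frames = iter(["frame-1", None, "frame-3"])
    poll_stream(
        grab_frame=lambda: next(fake_frames),
        submit=lambda frame: f"submitted {frame}",
        on_result=print,
        interval_s=0.1,
        max_iterations=3,
    )
```

Injecting `sleep` keeps the loop easy to test, and skipping `None` frames keeps a flaky stream from crashing a long-running script.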

### Next Steps

| What You Want To Do | Resource |
|---|---|
| 📝 Create better detectors | [Writing effective queries](/docs/getting-started/writing-queries) |
| 📷 Connect to cameras, RTSP, or other sources | [Grabbing images from various sources](/docs/guide/grabbing-images) |
| 🎯 Fine-tune detection accuracy | [Managing confidence thresholds](/docs/guide/managing-confidence) |
| 📚 Explore the full API | [SDK Reference](/docs/api-reference/) |

Ready to explore more possibilities? Visit our [Guides](https://www.groundlight.ai/guides) to discover sample
applications built with Groundlight AI, from [industrial inspection workflows](https://www.groundlight.ai/blog/lkq-corporation-uses-groundlight-ai-to-revolutionize-quality-control-and-inspection)
to [hummingbird detection systems](https://www.groundlight.ai/guides/detecting-hummingbirds-with-groundlight-ai).
