|

| 1 | + |

1 | 2 | # Getting Started |

2 | 3 |

|

3 | | -## Computer Vision powered by Natural Language |

| 4 | +## How to Build a Computer Vision Application with Groundlight's Python SDK |

4 | 5 |

|

5 | | -Build a working computer vision system in just a few lines of python: |

| 6 | +If you're new to Groundlight AI, this is a good place to start. This is the equivalent of building a "Hello, world!" application. |

6 | 7 |

|

7 | | -```python |

8 | | -from groundlight import Groundlight |

| 8 | +Don't code? [Reach out to Groundlight AI](mailto:support@groundlight.ai) so we can build a custom computer vision application for you. |

9 | 9 |

|

10 | | -gl = Groundlight() |

11 | | -det = gl.get_or_create_detector(name="doorway", query="Is the doorway open?") |

12 | | -img = "./docs/static/img/doorway.jpg" # Image can be a file or a Python object |

13 | | -image_query = gl.submit_image_query(detector=det, image=img) |

14 | | -print(f"The answer is {image_query.result}") |

15 | | -``` |

16 | 10 |

|

17 | | -### How does it work? |

| 11 | +### What's below? |

18 | 12 |

|

19 | | -Your images are first analyzed by machine learning (ML) models which are automatically trained on your data. If those models have high enough [confidence](docs/guide/5-managing-confidence.md), that's your answer. But if the models are unsure, then the images are progressively escalated to more resource-intensive analysis methods up to real-time human review. So what you get is a computer vision system that starts working right away without even needing to first gather and label a dataset. At first it will operate with high latency, because people need to review the image queries. But over time, the ML systems will learn and improve so queries come back faster with higher confidence. |

| 13 | + - [Prerequisites](#prerequisites) |

| 14 | + - [Environment Setup](#environment-setup) |

| 15 | + - [Authentication](#authentication) |

| 16 | + - [Writing the code](#writing-the-code) |

| 17 | + - [Using your application](#using-your-computer-vision-application) |

20 | 18 |

|

21 | | -### Escalation Technology |

22 | 19 |

|

23 | | -Groundlight's Escalation Technology combines the power of generative AI using our Visual LLM, along with the speed of edge computing, and the reliability of real-time human oversight. |

24 | 20 |

|

25 | | - |

| 21 | +### Prerequisites |

26 | 22 |

|

| 23 | +Before getting started: |

27 | 24 |

|

28 | | -## Building a simple visual application |

| 25 | +- Make sure you have Python installed

| 26 | +- Install VS Code

| 27 | +- Make sure your device has a C compiler. On Mac, this is provided through Xcode; on Windows, you can use the Microsoft Visual Studio Build Tools

29 | 28 |

|

30 | | -1. Install the `groundlight` SDK. Requires python version 3.9 or higher. |

| 29 | +### Environment Setup |

31 | 30 |

|

32 | | - ```shell |

33 | | - pip3 install groundlight |

34 | | - ``` |

| 31 | +Make sure you have Python installed before you begin. It’s also good practice to set up a dedicated environment for your project.

35 | 32 |

|

36 | | -1. Head over to the [Groundlight dashboard](https://dashboard.groundlight.ai/) to create an [API token](https://dashboard.groundlight.ai/reef/my-account/api-tokens). You will |

37 | | - need to set the `GROUNDLIGHT_API_TOKEN` environment variable to access the API. |

| 33 | +You can download Python from https://www.python.org/downloads/. Once it is installed, run the following on the command line to create a new virtual environment:

38 | 34 |

|

39 | | - ```shell |

40 | | - export GROUNDLIGHT_API_TOKEN=api_2GdXMflhJi6L_example |

| 35 | + ```bash |

| 36 | + python3 -m venv gl_env |

| 37 | + ``` |

| 38 | +Once your environment is created, activate it. On Linux and Mac, run

| 39 | + ```bash |

| 40 | + source gl_env/bin/activate |

| 41 | + ``` |

| 42 | +On Windows, run this instead:

| 43 | + ```bash |

| 44 | + gl_env\Scripts\activate |

| 45 | + ``` |

| 46 | +The last step in setting up your Python environment is to run

| 47 | + ```bash |

| 48 | + pip install groundlight |

| 49 | + pip install framegrab |

41 | 50 | ``` |

| 51 | +in order to download Groundlight’s SDK and image capture libraries. |
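
To confirm the install worked before moving on, a quick check like the one below can help. It is a minimal sketch using only the standard library; the helper name `missing_packages` is hypothetical, and the module names are simply the ones the example script in this guide imports (`groundlight`, `framegrab`, `cv2`).

```python
import importlib.util


def missing_packages(names):
    """Return the subset of top-level module names that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Modules imported by the example script in this guide.
missing = missing_packages(["groundlight", "framegrab", "cv2"])
if missing:
    print("Not installed in this environment:", ", ".join(missing))
else:
    print("All required packages were found.")
```

If anything is reported as missing, check that the `gl_env` environment is activated in the same terminal where you ran `pip install`.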

| 52 | + |

| 53 | + |

| 54 | + |

| 55 | +### Authentication |

| 56 | + |

| 57 | +To verify your identity when connecting to your custom ML models through our SDK, you’ll need to create an API token.

| 58 | + |

| 59 | +1. Head over to [https://dashboard.groundlight.ai/](https://dashboard.groundlight.ai/) and create an account or log in.

42 | 60 |

|

43 | | -1. Create a python script. |

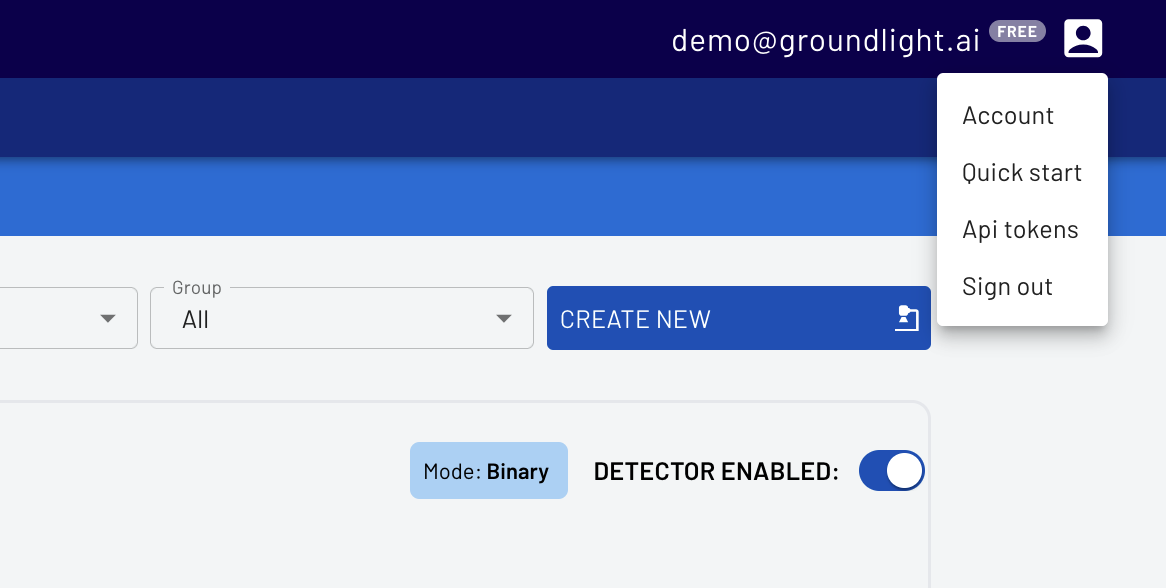

| 61 | +2. Once logged in, click your username in the upper-right corner of the dashboard:

44 | 62 |

|

45 | | - ```python title="ask.py" |

46 | | - from groundlight import Groundlight |

| 63 | + |

47 | 64 |

|

48 | | - gl = Groundlight() |

49 | | - det = gl.get_or_create_detector(name="doorway", query="Is the doorway open?") |

50 | | - img = "./docs/static/img/doorway.jpg" # Image can be a file or a Python object |

51 | | - image_query = gl.submit_image_query(detector=det, image=img) |

52 | | - print(f"The answer is {image_query.result}") |

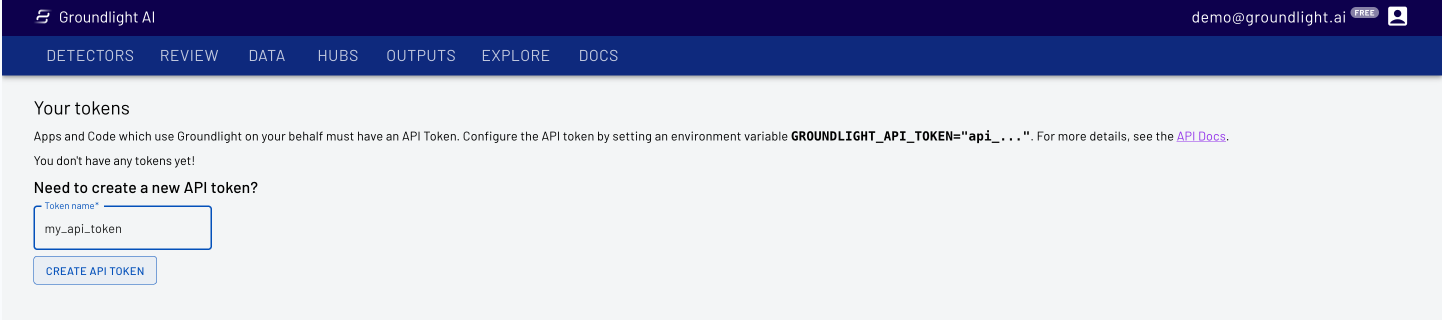

| 65 | +3. Select API Tokens, then enter a name for your API token, such as ‘personal-laptop-token’.

| 66 | + |

| 67 | + |

| 68 | + |

| 69 | +4. Copy the API token for use in your code.

| 70 | +

| 71 | +IMPORTANT: Keep your API token secure! Anyone who has access to it can impersonate you and access your Groundlight data.

| 72 | + |

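| 73 | +Then set the token as the `GROUNDLIGHT_API_TOKEN` environment variable so the SDK can read it. On Windows (PowerShell):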

| 74 | + ```bash |

| 75 | + $env:GROUNDLIGHT_API_TOKEN="YOUR_API_TOKEN_HERE" |

53 | 76 | ``` |

| 77 | +Or on Mac and Linux:

| 78 | + ```bash |

| 79 | + export GROUNDLIGHT_API_TOKEN="YOUR_API_TOKEN_HERE" |

| 80 | + ``` |
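
A missing or empty token is a common first stumbling block, so it can be worth checking the variable from Python before creating a client. The sketch below uses only the standard library; the helper name `has_api_token` is hypothetical and is not part of the Groundlight SDK.

```python
import os


def has_api_token(env=None):
    """Return True if GROUNDLIGHT_API_TOKEN is set to a non-blank value."""
    env = os.environ if env is None else env
    token = env.get("GROUNDLIGHT_API_TOKEN", "")
    return bool(token.strip())


if has_api_token():
    print("GROUNDLIGHT_API_TOKEN is set.")
else:
    print("GROUNDLIGHT_API_TOKEN is not set in this terminal session.")
```

Note that a variable set with `export` or `$env:` only lasts for the current terminal session, so run your script from the same terminal.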

| 81 | + |

| 82 | + |

| 83 | +### Writing the code |

| 84 | + |

| 85 | +For your first, simple application you can build a binary detector, which is a computer vision model whose answer is either 'Yes' or 'No'. In the code below, Groundlight AI answers whether a trash can is overflowing (the `detector_query` string); you can change the query to ask about whatever your camera is pointed at.

| 86 | + |

| 87 | +You can start with just your laptop camera, or use a USB camera if you have one.

54 | 88 |

|

55 | | -1. Run it! |

| 89 | + ```python |

| 90 | + import groundlight |

| 91 | + import cv2 |

| 92 | + from framegrab import FrameGrabber |

| 93 | + import time |

56 | 94 |

|

57 | | - ```shell |

58 | | - python ask.py |

| 95 | + gl = groundlight.Groundlight() |

| 96 | + |

| 97 | + detector_name = "trash_detector" |

| 98 | + detector_query = "Is the trash can overflowing" |

| 99 | + |

| 100 | + detector = gl.get_or_create_detector(detector_name, detector_query) |

| 101 | + |

| 102 | + grabber = list(FrameGrabber.autodiscover().values())[0] |

| 103 | + |

| 104 | + WAIT_TIME = 5  # seconds between image queries

| 105 | + last_capture_time = time.time() - WAIT_TIME |

| 106 | + |

| 107 | + while True: |

| 108 | +     frame = grabber.grab()

| 109 | +

| 110 | +     cv2.imshow('Video Feed', frame)

| 111 | +     key = cv2.waitKey(30)

| 112 | +

| 113 | +     if key == ord('q'):

| 114 | +         break

| 115 | +     # # Press enter to submit an image query

| 116 | +     # elif key in (ord('\r'), ord('\n')):

| 117 | +     #     print(f'Asking question: {detector_query}')

| 118 | +     #     image_query = gl.submit_image_query(detector, frame)

| 119 | +     #     print(f'The answer is {image_query.result.label.value}')

| 120 | +

| 121 | +     # # Press 'y' or 'n' to submit a label

| 122 | +     # elif key in (ord('y'), ord('n')):

| 123 | +     #     if key == ord('y'):

| 124 | +     #         label = 'YES'

| 125 | +     #     else:

| 126 | +     #         label = 'NO'

| 127 | +     #     image_query = gl.ask_async(detector, frame, human_review="NEVER")

| 128 | +     #     gl.add_label(image_query, label)

| 129 | +     #     print(f'Adding label {label} for image query {image_query.id}')

| 130 | +

| 131 | +     # Submit image queries in a timed loop

| 132 | +     now = time.time()

| 133 | +     if last_capture_time + WAIT_TIME < now:

| 134 | +         last_capture_time = now

| 135 | +

| 136 | +         print(f'Asking question: {detector_query}')

| 137 | +         image_query = gl.submit_image_query(detector, frame)

| 138 | +         print(f'The answer is {image_query.result.label.value}')

| 139 | + |

| 140 | + grabber.release() |

| 141 | + cv2.destroyAllWindows() |

59 | 142 | ``` |

| 143 | + This code will take an image from your connected camera every 5 seconds (the value of `WAIT_TIME`), ask Groundlight a question in natural language, and print the answer.
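
The timed-loop logic in the script (compare the current time against the last capture time plus `WAIT_TIME`) can also be pulled out into a small helper, which makes the interval easier to change and to test without a camera. This is only a sketch: the `Throttle` class is hypothetical and not part of the Groundlight SDK or framegrab, and it uses `time.monotonic` rather than `time.time` so that system clock adjustments do not affect the interval.

```python
import time


class Throttle:
    """Report when at least `interval_s` seconds have passed since the last allowed action."""

    def __init__(self, interval_s, clock=time.monotonic):
        self.interval_s = interval_s
        self._clock = clock   # injectable so the logic can be tested with a fake clock
        self._last = None     # None means nothing has been allowed yet

    def ready(self):
        """Return True (and restart the interval) if it is time to act again."""
        now = self._clock()
        if self._last is None or now - self._last >= self.interval_s:
            self._last = now
            return True
        return False
```

Inside the `while True:` loop, `if throttle.ready():` would then take the place of the `last_capture_time` comparison, with `throttle = Throttle(WAIT_TIME)` created before the loop.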

| 144 | + |

| 145 | + |

| 146 | + |

| 147 | +### Using your computer vision application |

| 148 | + |

| 149 | +Just like that, you have a complete computer vision application. You can change the code and configure a detector for your specific use case. You can also monitor and improve the performance of your detector at [https://dashboard.groundlight.ai/](https://dashboard.groundlight.ai/). Groundlight’s human-in-the-loop technology monitors your image feed for unexpected changes and anomalies, and you can improve your detector by verifying the answers Groundlight returns. At app.groundlight.ai, you can also set up text and email notifications to be alerted when something of interest happens in your video stream.

| 150 | + |

| 151 | + |

| 152 | + |

| 153 | +### If You're Looking for More: |

| 154 | + |

| 155 | +Now that you've built your first application, learn how to [write queries](https://code.groundlight.ai/python-sdk/docs/getting-started/writing-queries). |

| 156 | + |

| 157 | +Want to play around with sample applications built by Groundlight AI? Visit [Guides](https://www.groundlight.ai/guides) to build example applications, from detecting birds outside of your window to running Groundlight AI on a Raspberry Pi. |
