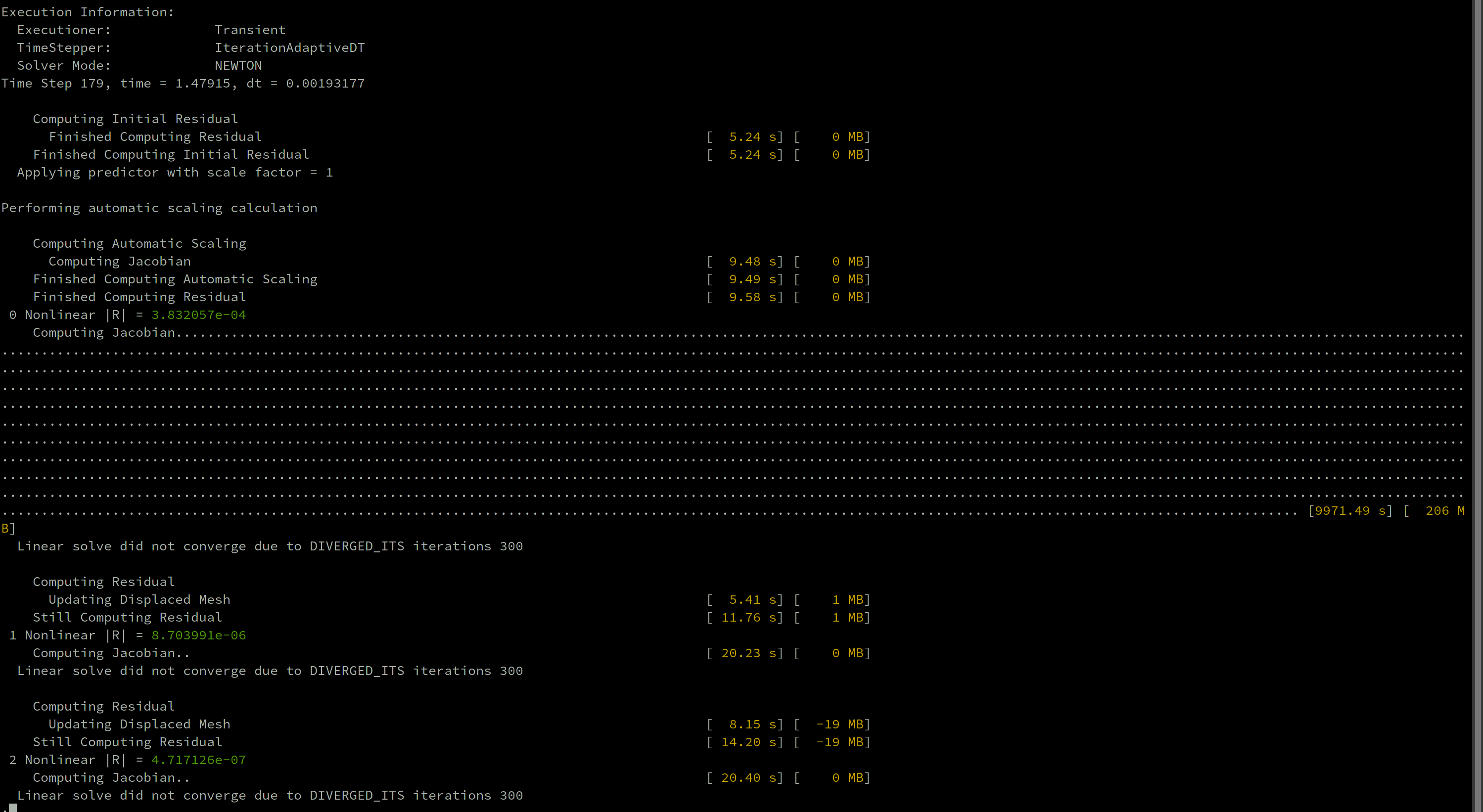

Computation on an HPC system: Computing the Jacobian the first time takes incredibly long, subsequent computations are OK #20164


Replies: 4 comments 9 replies

I have no idea.


What is your physics? Do you have contact? This kind of behavior is often seen when the initial sparsity pattern is incorrect and PETSc has to allocate memory on the fly during Jacobian assembly. Once the first Jacobian has been computed, the allocated memory is typically sufficient for all subsequent Jacobian computations. Our traditional contact methods are known to have some issues with the initial sparsity pattern, at least according to some recent reports.
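To illustrate why only the first assembly is slow, here is a minimal Python sketch of a toy row-wise sparse matrix. Everything in it (`ToyRowMatrix`, `assemble`, the extra "contact" coupling between the first and last node) is hypothetical and does not reflect PETSc's actual data structures. Each row reserves a fixed number of nonzero slots up front; inserting a new column into a full row grows that row and counts one "malloc". The first assembly pays for the entries missing from the preallocated pattern, and because zeroing the matrix keeps its nonzero structure, the second assembly needs no further allocations. As far as I know, PETSc tracks a comparable count in the `mallocs` field of the `MatInfo` struct returned by `MatGetInfo`.

```python
class ToyRowMatrix:
    """Hypothetical row-wise sparse matrix (not PETSc's real layout).

    Each row reserves capacity[i] nonzero slots up front. Inserting a
    new column into a full row grows that row and counts one "malloc".
    """

    def __init__(self, prealloc_per_row):
        self.capacity = list(prealloc_per_row)          # reserved slots per row
        self.rows = [dict() for _ in prealloc_per_row]  # column -> value
        self.mallocs = 0                                # reallocations so far

    def add_value(self, i, j, v):
        row = self.rows[i]
        if j not in row and len(row) >= self.capacity[i]:
            # New nonzero outside the reserved space: grow the row.
            self.capacity[i] = max(1, 2 * self.capacity[i])
            self.mallocs += 1
        row[j] = row.get(j, 0.0) + v

    def zero_entries(self):
        # Reset values but keep the nonzero structure and capacity,
        # so the next assembly can reuse it.
        for row in self.rows:
            for j in row:
                row[j] = 0.0


def assemble(A, n):
    # 1D three-point stencil: the part the preallocation anticipates.
    for i in range(n):
        A.add_value(i, i, 2.0)
        if i > 0:
            A.add_value(i, i - 1, -1.0)
        if i < n - 1:
            A.add_value(i, i + 1, -1.0)
    # Hypothetical extra coupling (e.g. a contact pair) that the
    # preallocated pattern does not include.
    A.add_value(0, n - 1, 0.5)
    A.add_value(n - 1, 0, 0.5)


n = 5
prealloc = [2] + [3] * (n - 2) + [2]  # tridiagonal pattern only
A = ToyRowMatrix(prealloc)

assemble(A, n)
first = A.mallocs            # one per row hit by the missing coupling
A.zero_entries()
assemble(A, n)
second = A.mallocs - first   # structure from the first pass is reused
print(first, second)         # prints "2 0"

# With a pattern that already includes the coupling, nothing is reallocated.
B = ToyRowMatrix([3] * n)
assemble(B, n)
print(B.mallocs)             # prints "0"
```

The toy only counts events; in a real parallel matrix each reallocation during assembly involves allocating and copying storage, which is why a wrong initial sparsity pattern can make the first Jacobian dramatically slower than the later ones.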


Thank you for both answers! My application is essentially a TensorMechanics app using my own (stress divergence) kernels. I could try to run the same example without the … Furthermore, I am not using any threading, just pure MPI parallelization.


I think you were right:
