diff --git a/notebooks/qwen3-omni-chatbot/README.md b/notebooks/qwen3-omni-chatbot/README.md

new file mode 100644

index 00000000000..ab4a729e96d

--- /dev/null

+++ b/notebooks/qwen3-omni-chatbot/README.md

@@ -0,0 +1,42 @@

+# Omnimodal assistant with Qwen3-Omni and OpenVINO

+

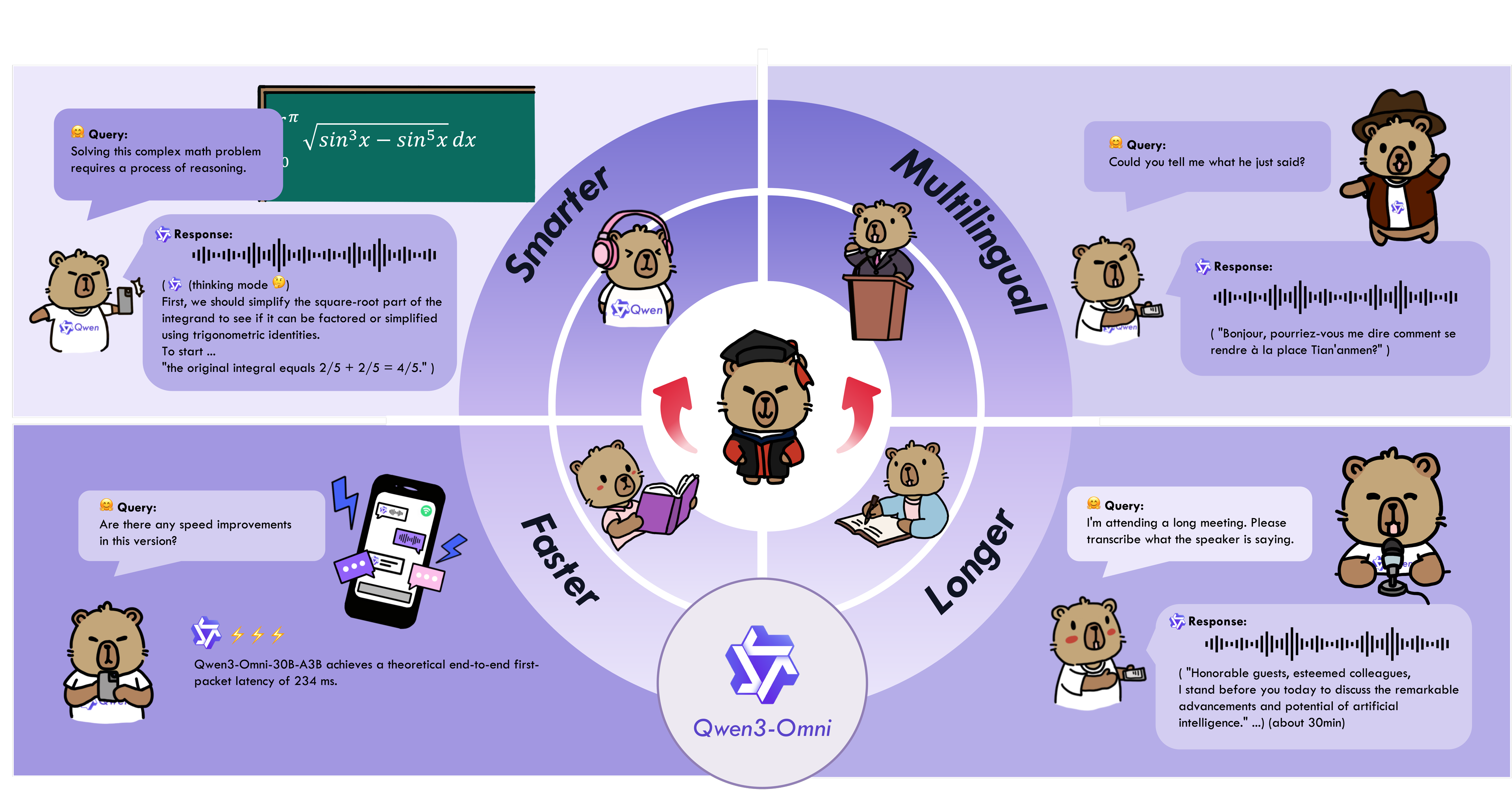

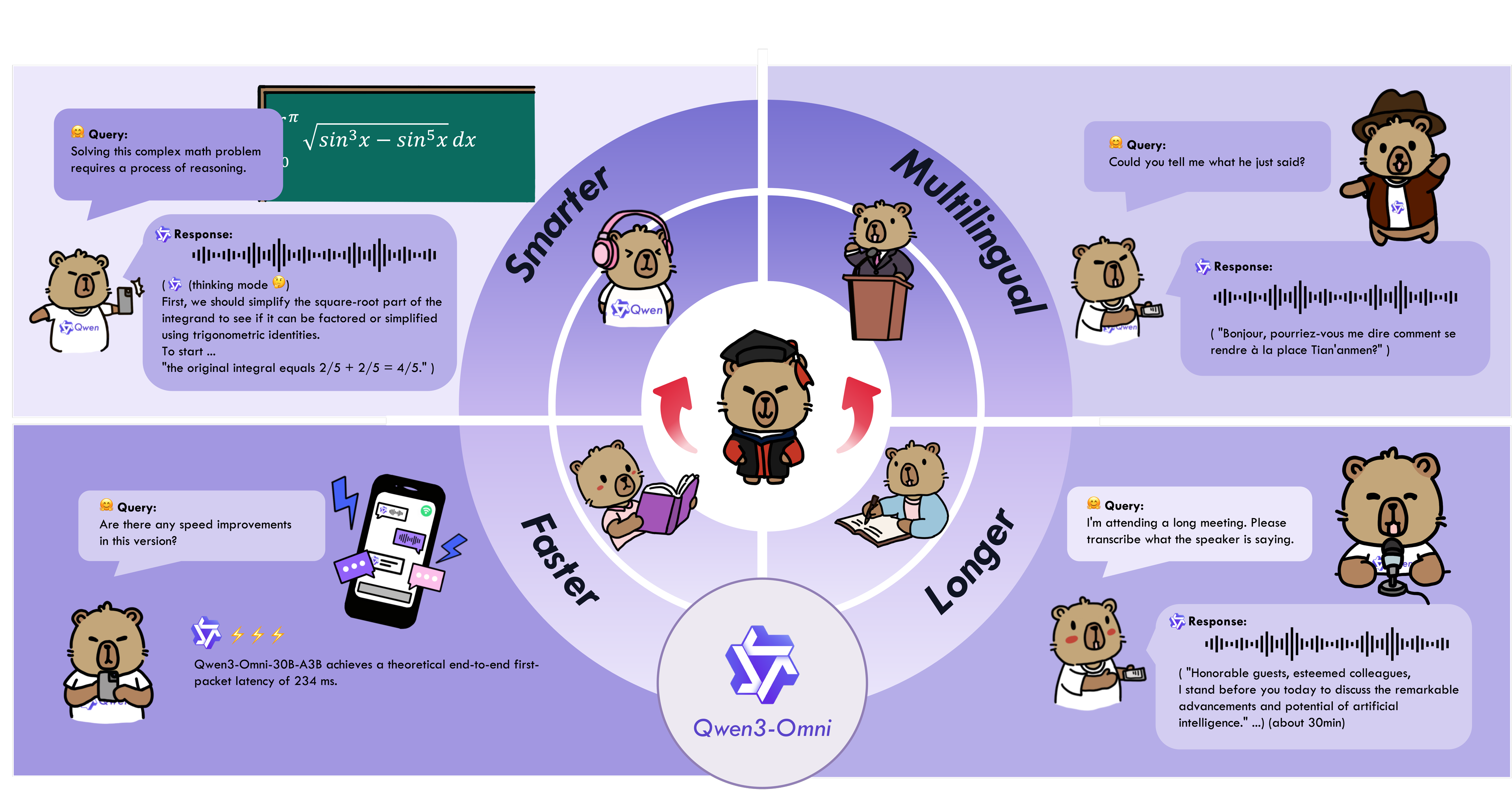

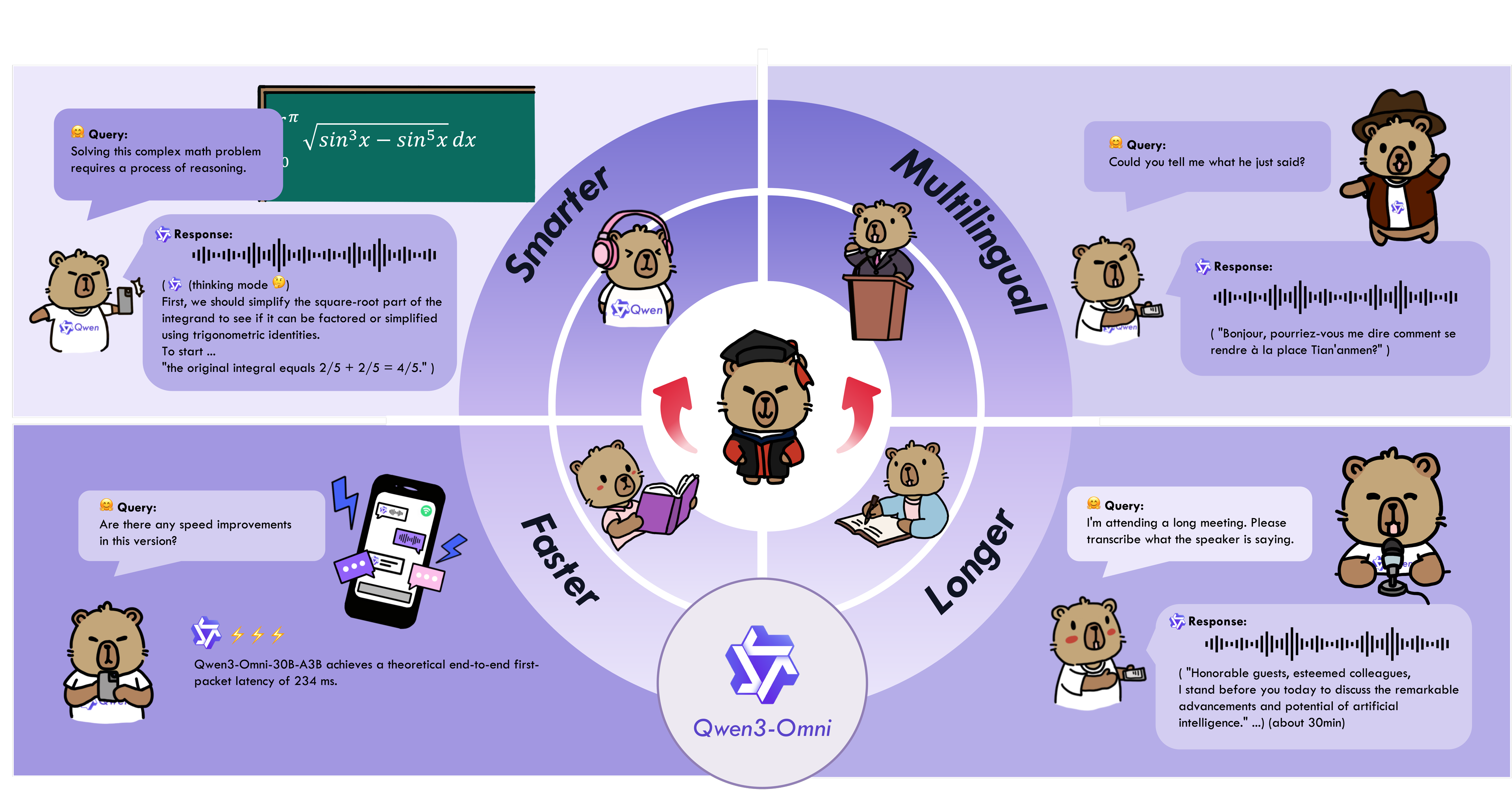

+Qwen3-Omni is a family of natively end-to-end, multilingual, omni-modal foundation models. It processes text, images, audio, and video, and delivers real-time streaming responses in both text and natural speech. The model incorporates several architectural upgrades to improve performance and efficiency. Key features:

+

+* **State-of-the-art across modalities**: Early text-first pretraining and mixed multimodal training provide native multimodal support. The model achieves strong audio and audio-video results without regressing in unimodal text and image performance. It reaches SOTA on 22 of 36 audio/video benchmarks and open-source SOTA on 32 of 36; its ASR, audio understanding, and voice conversation performance is comparable to Gemini 2.5 Pro.

+

+* **Multilingual**: Supports 119 text languages, 19 speech input languages, and 10 speech output languages.

+ - **Speech Input**: English, Chinese, Korean, Japanese, German, Russian, Italian, French, Spanish, Portuguese, Malay, Dutch, Indonesian, Turkish, Vietnamese, Cantonese, Arabic, Urdu.

+ - **Speech Output**: English, Chinese, French, German, Russian, Italian, Spanish, Portuguese, Japanese, Korean.

+

+* **Novel Architecture**: MoE-based Thinker–Talker design with AuT pretraining for strong general representations, plus a multi-codebook design that drives latency to a minimum.

+

+* **Real-time Audio/Video Interaction**: Low-latency streaming with natural turn-taking and immediate text or speech responses.

+

+* **Flexible Control**: Customize behavior via system prompts for fine-grained control and easy adaptation.

+

+* **Detailed Audio Captioner**: Qwen3-Omni-30B-A3B-Captioner is now open source: a general-purpose, highly detailed, low-hallucination audio captioning model that fills a critical gap in the open-source community.

+

+

+

+

+

+More details about the model can be found in the [model card](https://huggingface.co/Qwen/Qwen3-Omni-30B-A3B-Instruct) and the original [repository](https://github.com/QwenLM/Qwen3-Omni/tree/main).

+

+## Notebook contents

+The tutorial consists of the following steps:

+

+- Install requirements

+- Download PyTorch model

+- Convert model to OpenVINO Intermediate Representation (IR)

+- Compress Language Model weights

+- Run OpenVINO model inference

+- Launch Interactive demo

+

+In this demonstration, you'll create an interactive chatbot that can answer questions about the content of provided images, audio, or video. The image below shows an example of the model's output.

+

+

+

+## Installation instructions

+This is a self-contained example that relies solely on its own code.

+We recommend running the notebook in a virtual environment. You only need a Jupyter server to start.

+For details, please refer to [Installation Guide](../../README.md).

diff --git a/notebooks/qwen3-omni-chatbot/qwen3-omni-chatbot.ipynb b/notebooks/qwen3-omni-chatbot/qwen3-omni-chatbot.ipynb

new file mode 100644

index 00000000000..e5e630d2246

--- /dev/null

+++ b/notebooks/qwen3-omni-chatbot/qwen3-omni-chatbot.ipynb

@@ -0,0 +1,915 @@

+{

+ "cells": [

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "5918b41c-dad7-4f7b-9e39-b3026933dddf",

+ "metadata": {},

+ "source": [

+ "# Omnimodal assistant with Qwen3-Omni and OpenVINO\n",

+ "\n",

+ "Qwen3-Omni is a family of natively end-to-end, multilingual, omni-modal foundation models. It processes text, images, audio, and video, and delivers real-time streaming responses in both text and natural speech. The model incorporates several architectural upgrades to improve performance and efficiency. Key features:\n",

+ "\n",

+ "* **State-of-the-art across modalities**: Early text-first pretraining and mixed multimodal training provide native multimodal support. The model achieves strong audio and audio-video results without regressing in unimodal text and image performance. It reaches SOTA on 22 of 36 audio/video benchmarks and open-source SOTA on 32 of 36; its ASR, audio understanding, and voice conversation performance is comparable to Gemini 2.5 Pro.\n",

+ "\n",

+ "* **Multilingual**: Supports 119 text languages, 19 speech input languages, and 10 speech output languages.\n",

+ " - **Speech Input**: English, Chinese, Korean, Japanese, German, Russian, Italian, French, Spanish, Portuguese, Malay, Dutch, Indonesian, Turkish, Vietnamese, Cantonese, Arabic, Urdu.\n",

+ " - **Speech Output**: English, Chinese, French, German, Russian, Italian, Spanish, Portuguese, Japanese, Korean.\n",

+ "\n",

+ "* **Novel Architecture**: MoE-based Thinker–Talker design with AuT pretraining for strong general representations, plus a multi-codebook design that drives latency to a minimum.\n",

+ "\n",

+ "* **Real-time Audio/Video Interaction**: Low-latency streaming with natural turn-taking and immediate text or speech responses.\n",

+ "\n",

+ "* **Flexible Control**: Customize behavior via system prompts for fine-grained control and easy adaptation.\n",

+ "\n",

+ "* **Detailed Audio Captioner**: Qwen3-Omni-30B-A3B-Captioner is now open source: a general-purpose, highly detailed, low-hallucination audio captioning model that fills a critical gap in the open-source community.\n",

+ "\n",

+ "\n",

+ "\n",

+ "\n",

+ "\n",

+ "\n",

+ "More details about the model can be found in the [model card](https://huggingface.co/Qwen/Qwen3-Omni-30B-A3B-Instruct) and the original [repository](https://github.com/QwenLM/Qwen3-Omni/tree/main).\n",

+ "\n",

+ "In this tutorial, we consider how to convert and optimize the Qwen3-Omni model to create an omnimodal chatbot. Additionally, we demonstrate how to apply the stateful transformation to the LLM part, as well as model optimization techniques such as weight compression using [NNCF](https://github.com/openvinotoolkit/nncf).\n",

+ "\n",

+ "#### Table of contents:\n",

+ "\n",

+ "- [Prerequisites](#Prerequisites)\n",

+ "- [Convert model to OpenVINO Intermediate Representation](#Convert-model-to-OpenVINO-Intermediate-Representation)\n",

+ " - [Compress Thinker Model Weights to 4 bits](#Compress-Thinker-Model-Weights-to-4-bits)\n",

+ "- [Prepare model inference pipeline](#Prepare-model-inference-pipeline)\n",

+ " - [Select device](#Select-device)\n",

+ " - [Initialize model tasks](#Initialize-model-tasks)\n",

+ "- [Run OpenVINO model inference](#Run-OpenVINO-model-inference)\n",

+ " - [Text-only input and Audio output](#Text-only-input-and-Audio-output)\n",

+ " - [Text-Image input](#Text-Image-input)\n",

+ " - [Audio-Text input](#Audio-Text-input)\n",

+ " - [Video-text input](#Video-text-input)\n",

+ "- [Interactive demo](#Interactive-demo)\n",

+ "\n",

+ "\n",

+ "### Installation Instructions\n",

+ "\n",

+ "This is a self-contained example that relies solely on its own code.\n",

+ "\n",

+ "We recommend running the notebook in a virtual environment. You only need a Jupyter server to start.\n",

+ "For details, please refer to [Installation Guide](https://github.com/openvinotoolkit/openvino_notebooks/blob/latest/README.md#-installation-guide).\n"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "8ff80f4e-08df-4bcd-a72a-4f77bdd5768b",

+ "metadata": {},

+ "source": [

+ "## Prerequisites\n",

+ "[back to top ⬆️](#Table-of-contents:)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 1,

+ "id": "1534e378-1b87-4f1b-94e8-09061e960700",

+ "metadata": {},

+ "outputs": [

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "Requirement already satisfied: openvino>=2026.0.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (2026.0.0)\n",

+ "Requirement already satisfied: nncf>=2.18.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (2.19.0)\n",

+ "Requirement already satisfied: numpy<2.5.0,>=1.16.6 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from openvino>=2026.0.0) (1.26.4)\n",

+ "Requirement already satisfied: openvino-telemetry>=2023.2.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from openvino>=2026.0.0) (2025.2.0)\n",

+ "Requirement already satisfied: jsonschema>=3.2.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (4.26.0)\n",

+ "Requirement already satisfied: natsort>=7.1.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (8.4.0)\n",

+ "Requirement already satisfied: networkx<3.5.0,>=2.6 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (3.4.2)\n",

+ "Requirement already satisfied: ninja<1.14,>=1.10.0.post2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (1.13.0)\n",

+ "Requirement already satisfied: packaging>=20.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (25.0)\n",

+ "Requirement already satisfied: pandas<2.4,>=1.1.5 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (2.3.3)\n",

+ "Requirement already satisfied: psutil in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (7.2.1)\n",

+ "Requirement already satisfied: pydot<=3.0.4,>=1.4.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (3.0.4)\n",

+ "Requirement already satisfied: pymoo>=0.6.0.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (0.6.1.6)\n",

+ "Requirement already satisfied: rich>=13.5.2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (14.2.0)\n",

+ "Requirement already satisfied: safetensors>=0.4.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (0.7.0)\n",

+ "Requirement already satisfied: scikit-learn>=0.24.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (1.7.2)\n",

+ "Requirement already satisfied: scipy>=1.3.2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (1.15.3)\n",

+ "Requirement already satisfied: tabulate>=0.9.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from nncf>=2.18.0) (0.9.0)\n",

+ "Requirement already satisfied: python-dateutil>=2.8.2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pandas<2.4,>=1.1.5->nncf>=2.18.0) (2.9.0.post0)\n",

+ "Requirement already satisfied: pytz>=2020.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pandas<2.4,>=1.1.5->nncf>=2.18.0) (2025.2)\n",

+ "Requirement already satisfied: tzdata>=2022.7 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pandas<2.4,>=1.1.5->nncf>=2.18.0) (2025.3)\n",

+ "Requirement already satisfied: pyparsing>=3.0.9 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pydot<=3.0.4,>=1.4.1->nncf>=2.18.0) (3.3.1)\n",

+ "Requirement already satisfied: attrs>=22.2.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from jsonschema>=3.2.0->nncf>=2.18.0) (25.4.0)\n",

+ "Requirement already satisfied: jsonschema-specifications>=2023.03.6 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from jsonschema>=3.2.0->nncf>=2.18.0) (2025.9.1)\n",

+ "Requirement already satisfied: referencing>=0.28.4 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from jsonschema>=3.2.0->nncf>=2.18.0) (0.37.0)\n",

+ "Requirement already satisfied: rpds-py>=0.25.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from jsonschema>=3.2.0->nncf>=2.18.0) (0.30.0)\n",

+ "Requirement already satisfied: moocore>=0.1.7 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (0.2.0)\n",

+ "Requirement already satisfied: autograd>=1.4 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (1.8.0)\n",

+ "Requirement already satisfied: cma>=3.2.2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (4.4.1)\n",

+ "Requirement already satisfied: matplotlib>=3 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (3.10.7)\n",

+ "Requirement already satisfied: alive_progress in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (3.3.0)\n",

+ "Requirement already satisfied: Deprecated in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from pymoo>=0.6.0.1->nncf>=2.18.0) (1.3.1)\n",

+ "Requirement already satisfied: contourpy>=1.0.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from matplotlib>=3->pymoo>=0.6.0.1->nncf>=2.18.0) (1.3.2)\n",

+ "Requirement already satisfied: cycler>=0.10 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from matplotlib>=3->pymoo>=0.6.0.1->nncf>=2.18.0) (0.12.1)\n",

+ "Requirement already satisfied: fonttools>=4.22.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from matplotlib>=3->pymoo>=0.6.0.1->nncf>=2.18.0) (4.61.1)\n",

+ "Requirement already satisfied: kiwisolver>=1.3.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from matplotlib>=3->pymoo>=0.6.0.1->nncf>=2.18.0) (1.4.9)\n",

+ "Requirement already satisfied: pillow>=8 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from matplotlib>=3->pymoo>=0.6.0.1->nncf>=2.18.0) (10.4.0)\n",

+ "Requirement already satisfied: cffi>=1.17.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from moocore>=0.1.7->pymoo>=0.6.0.1->nncf>=2.18.0) (2.0.0)\n",

+ "Requirement already satisfied: platformdirs in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from moocore>=0.1.7->pymoo>=0.6.0.1->nncf>=2.18.0) (4.5.1)\n",

+ "Requirement already satisfied: pycparser in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from cffi>=1.17.1->moocore>=0.1.7->pymoo>=0.6.0.1->nncf>=2.18.0) (2.23)\n",

+ "Requirement already satisfied: six>=1.5 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from python-dateutil>=2.8.2->pandas<2.4,>=1.1.5->nncf>=2.18.0) (1.17.0)\n",

+ "Requirement already satisfied: typing-extensions>=4.4.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from referencing>=0.28.4->jsonschema>=3.2.0->nncf>=2.18.0) (4.15.0)\n",

+ "Requirement already satisfied: markdown-it-py>=2.2.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from rich>=13.5.2->nncf>=2.18.0) (4.0.0)\n",

+ "Requirement already satisfied: pygments<3.0.0,>=2.13.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from rich>=13.5.2->nncf>=2.18.0) (2.19.2)\n",

+ "Requirement already satisfied: mdurl~=0.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from markdown-it-py>=2.2.0->rich>=13.5.2->nncf>=2.18.0) (0.1.2)\n",

+ "Requirement already satisfied: joblib>=1.2.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from scikit-learn>=0.24.0->nncf>=2.18.0) (1.5.3)\n",

+ "Requirement already satisfied: threadpoolctl>=3.1.0 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from scikit-learn>=0.24.0->nncf>=2.18.0) (3.6.0)\n",

+ "Requirement already satisfied: about-time==4.2.1 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from alive_progress->pymoo>=0.6.0.1->nncf>=2.18.0) (4.2.1)\n",

+ "Requirement already satisfied: graphemeu==0.7.2 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from alive_progress->pymoo>=0.6.0.1->nncf>=2.18.0) (0.7.2)\n",

+ "Requirement already satisfied: wrapt<3,>=1.10 in /home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages (from Deprecated->pymoo>=0.6.0.1->nncf>=2.18.0) (2.0.1)\n"

+ ]

+ }

+ ],

+ "source": [

+ "import requests\n",

+ "from pathlib import Path\n",

+ "import sys\n",

+ "\n",

+ "\n",

+ "if not Path(\"qwen_3_omni_moe_helper.py\").exists():\n",

+ " r = requests.get(url=\"https://raw.githubusercontent.com/openvinotoolkit/openvino_notebooks/latest/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py\")\n",

+ " open(\"qwen_3_omni_moe_helper.py\", \"w\", encoding=\"utf-8\").write(r.text)\n",

+ "\n",

+ "\n",

+ "if not Path(\"gradio_helper.py\").exists():\n",

+ " r = requests.get(url=\"https://raw.githubusercontent.com/openvinotoolkit/openvino_notebooks/latest/notebooks/qwen3-omni-chatbot/gradio_helper.py\")\n",

+ " open(\"gradio_helper.py\", \"w\", encoding=\"utf-8\").write(r.text)\n",

+ "\n",

+ "if not Path(\"notebook_utils.py\").exists():\n",

+ " r = requests.get(url=\"https://raw.githubusercontent.com/openvinotoolkit/openvino_notebooks/latest/utils/notebook_utils.py\")\n",

+ " open(\"notebook_utils.py\", \"w\", encoding=\"utf-8\").write(r.text)\n",

+ "\n",

+ "if not Path(\"pip_helper.py\").exists():\n",

+ " r = requests.get(\n",

+ " url=\"https://raw.githubusercontent.com/openvinotoolkit/openvino_notebooks/latest/utils/pip_helper.py\",\n",

+ " )\n",

+ " open(\"pip_helper.py\", \"w\", encoding=\"utf-8\").write(r.text)\n",

+ "\n",

+ "from pip_helper import pip_install\n",

+ "\n",

+ "pip_install(\n",

+ " \"-q\",\n",

+ " \"transformers==4.57.0\",\n",

+ " \"torch==2.8\",\n",

+ " \"torchvision==0.23.0\",\n",

+ " \"accelerate\",\n",

+ " \"gradio>=4.19\",\n",

+ " \"--no-cache-dir\",\n",

+ " \"--extra-index-url\",\n",

+ " \"https://download.pytorch.org/whl/cpu\",\n",

+ ")\n",

+ " \n",

+ "pip_install(\"-Uq\", \"qwen-omni-utils\")\n",

+ "pip_install(\"openvino>=2026.0.0\", \"nncf>=2.18.0\")\n",

+ "\n",

+ "# Read more about telemetry collection at https://github.com/openvinotoolkit/openvino_notebooks?tab=readme-ov-file#-telemetry\n",

+ "from notebook_utils import collect_telemetry\n",

+ "\n",

+ "collect_telemetry(\"qwen3-omni-chatbot.ipynb\")"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "539aae31-dded-4036-8946-67a4ec9e6034",

+ "metadata": {},

+ "source": [

+ "## Convert model to OpenVINO Intermediate Representation\n",

+ "[back to top ⬆️](#Table-of-contents:)\n",

+ "\n",

+ "OpenVINO supports PyTorch models via conversion to OpenVINO Intermediate Representation (IR). The [OpenVINO model conversion API](https://docs.openvino.ai/2024/openvino-workflow/model-preparation.html#convert-a-model-with-python-convert-model) should be used for this purpose. The `ov.convert_model` function accepts an original PyTorch model instance and example input for tracing, and returns an `ov.Model` representing this model in the OpenVINO framework. The converted model can be saved to disk using the `ov.save_model` function or loaded directly onto a device using `core.compile_model`.\n",

+ "\n",

+ "The `qwen_3_omni_moe_helper.py` script contains helper functions for model conversion; please check its content if you are interested in conversion details.\n",

+ "\n",

+ "### Qwen3-Omni-MoE Architecture Overview\n",

+ "\n",

+ "**Qwen3-Omni-MoE** is a multimodal Mixture-of-Experts (MoE) model capable of processing text, image, video, and audio inputs and generating both text and speech outputs. The architecture consists of two main components, the **Thinker** and the **Talker**, plus a **Code2Wav** vocoder that converts the predicted audio codes into a waveform.\n",

+ "\n",

+ "#### 1. Thinker (Understanding Module)\n",

+ "\n",

+ "The Thinker is responsible for understanding multimodal inputs and generating semantic representations.\n",

+ "\n",

+ "**Sub-models:**\n",

+ "\n",

+ "- **Thinker Audio Encoder**: Processes audio features through convolutional layers (conv2d1, conv2d2, conv2d3) and extracts audio embeddings\n",

+ "- **Thinker Vision Encoder**: Processes images/videos using a Vision Transformer with rotary positional embeddings (Qwen2-VL style)\n",

+ "- **Thinker Vision Positional Encoder**: Computes 3D RoPE indices for spatial-temporal features\n",

+ "- **Thinker Vision Merger**: Merges multi-scale visual features using spatial merge strategy\n",

+ "- **Thinker Embedding**: Text token embeddings for language input\n",

+ "- **Thinker Language Model**: MoE-based decoder with sparse experts (Qwen3MoeThinkerTextExperts) that processes fused multimodal embeddings and generates hidden states\n",

+ "- **Thinker Patcher/Merger**: Combines text, audio, and visual embeddings with DeepStack visual features across layers\n",

+ "\n",

+ "#### 2. Talker (Speech Generation Module)\n",

+ "\n",

+ "The Talker generates speech outputs from the Thinker's representations.\n",

+ "\n",

+ "**Sub-models:**\n",

+ "\n",

+ "- **Talker Embedding**: Converts input tokens to embeddings\n",

+ "- **Talker Hidden Projection**: Projects Thinker's hidden states to Talker's hidden space\n",

+ "- **Talker Text Projection**: Additional projection layer for text features\n",

+ "- **Talker Language Model**: MoE decoder (Qwen3MoeTalkerTextExperts) that processes projected features and generates codec predictions\n",

+ "- **Talker Code Predictor**: Predicts audio codec codes for speech synthesis (with separate embedding and decoder)\n",

+ "\n",

+ "#### 3. Code2Wav Module\n",

+ "\n",

+ "Converts predicted audio codes into waveform audio output (vocoder).\n",

+ "\n",

+ "Let's convert each model part."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 2,

+ "id": "840d08db",

+ "metadata": {},

+ "outputs": [

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "7104335cadc545ada68144104792f54b",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Dropdown(description='Model:', options=('Qwen/Qwen3-Omni-30B-A3B-Instruct', 'Qwen/Qwen3-Omni-30B-A3B-Thinking'…"

+ ]

+ },

+ "execution_count": 2,

+ "metadata": {},

+ "output_type": "execute_result"

+ }

+ ],

+ "source": [

+ "import ipywidgets as widgets\n",

+ "\n",

+ "model_ids = [\"Qwen/Qwen3-Omni-30B-A3B-Instruct\", \"Qwen/Qwen3-Omni-30B-A3B-Thinking\"]\n",

+ "\n",

+ "model_id = widgets.Dropdown(\n",

+ " options=model_ids,\n",

+ " value=model_ids[0],\n",

+ " description=\"Model:\",\n",

+ ")\n",

+ "\n",

+ "model_id"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 3,

+ "id": "ff007127-7421-448e-9668-b3fdb32eca51",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "model_id = model_id.value\n",

+ "model_dir = Path(model_id.split(\"/\")[-1])"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "82f37188-7434-4dc9-ba3c-a9779cd4cdc8",

+ "metadata": {},

+ "source": [

+ "### Compress Thinker Model Weights to 4 bits\n",

+ "[back to top ⬆️](#Table-of-contents:)\n",

+ "\n",

+ "To reduce memory consumption, weight compression can be applied using [NNCF](https://github.com/openvinotoolkit/nncf).\n",

+ "\n",

+ "\n",

+ "**More details about weight compression.**\n",

+ "Weight compression aims to reduce the memory footprint of a model. It can also lead to significant performance improvement for large memory-bound models, such as Large Language Models (LLMs). LLMs and other models, which require extensive memory to store the weights during inference, can benefit from weight compression in the following ways:\n",

+ "\n",

+ "* enabling the inference of exceptionally large models that cannot be accommodated in the memory of the device;\n",

+ "\n",

+ "* improving the inference performance of the models by reducing the latency of the memory access when computing the operations with weights, for example, Linear layers.\n",

+ "\n",

+ "[Neural Network Compression Framework (NNCF)](https://github.com/openvinotoolkit/nncf) provides 4-bit / 8-bit mixed weight quantization as a compression method primarily designed to optimize LLMs. The main difference between weight compression and full model quantization (post-training quantization) is that with weight compression the activations remain floating-point, which leads to better accuracy. For LLMs, weight compression provides an inference performance improvement on par with full model quantization. In addition, weight compression is data-free and does not require a calibration dataset, making it easy to use.\n",

+ "\n",

+ "The `nncf.compress_weights` function can be used to perform weight compression. It accepts an OpenVINO model and other compression parameters. Compared to INT8 compression, INT4 compression improves performance even more, but introduces a minor drop in prediction quality.\n",

+ "\n",

+ "More details about weight compression can be found in the [OpenVINO documentation](https://docs.openvino.ai/2024/openvino-workflow/model-optimization-guide/weight-compression.html).\n",

+ "\n",

+ "\n",

+ "\n",

+ "> **Note:** The weight compression process may require additional time and memory. The configuration below uses `INT4_ASYM` mode with `group_size=128` and `ratio=0.8`, so about 80% of the ratio-defining weights are compressed to INT4 and the remaining ones to INT8."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 5,

+ "id": "6e938ae8-7e49-4c61-88b9-0b73c8fa8407",

+ "metadata": {},

+ "outputs": [

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "⌛ Qwen/Qwen3-Omni-30B-A3B-Instruct conversion started. Be patient, it may takes some time.\n",

+ "⌛ Load Original model\n"

+ ]

+ },

+ {

+ "name": "stderr",

+ "output_type": "stream",

+ "text": [

+ "Unrecognized keys in `rope_scaling` for 'rope_type'='default': {'interleaved', 'mrope_interleaved', 'mrope_section'}\n",

+ "Unrecognized keys in `rope_scaling` for 'rope_type'='default': {'interleaved', 'mrope_section'}\n"

+ ]

+ },

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "1445c5204fc543cdac01d19d5ca77ecd",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Loading checkpoint shards: 0%| | 0/15 [00:00\n"

+ ],

+ "text/plain": []

+ },

+ "metadata": {},

+ "output_type": "display_data"

+ },

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "INFO:nncf:Statistics of the bitwidth distribution:\n",

+ "┍━━━━━━━━━━━━━━━━━━━━━━━━━━━┯━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┯━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┑\n",

+ "│ Weight compression mode │ % all parameters (layers) │ % ratio-defining parameters (layers) │\n",

+ "┝━━━━━━━━━━━━━━━━━━━━━━━━━━━┿━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┿━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┥\n",

+ "│ int8_asym, per-channel │ 21% (3805 / 18673) │ 20% (3804 / 18672) │\n",

+ "├───────────────────────────┼─────────────────────────────┼────────────────────────────────────────┤\n",

+ "│ int4_asym, group size 128 │ 79% (14868 / 18673) │ 80% (14868 / 18672) │\n",

+ "┕━━━━━━━━━━━━━━━━━━━━━━━━━━━┷━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┷━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┙\n"

+ ]

+ },

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "55d3440b65e94898ad9831ed341212de",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Output()"

+ ]

+ },

+ "metadata": {},

+ "output_type": "display_data"

+ },

+ {

+ "data": {

+ "text/html": [

+ "\n"

+ ],

+ "text/plain": []

+ },

+ "metadata": {},

+ "output_type": "display_data"

+ },

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "✅ Weights compression finished\n",

+ "✅ Thinker model conversion finished. You can find results in Qwen3-Omni-30B-A3B-Instruct\n",

+ "⌛ Convert talker embedding model\n",

+ "✅ Talker embedding model successfully converted\n",

+ "⌛ Convert talker hidden_projection model\n",

+ "✅ Talker hidden_projection model successfully converted\n",

+ "⌛ Convert talker text_projection model\n",

+ "✅ Talker text_projection model successfully converted\n",

+ "⌛ Convert Talker Language model (MoE)\n"

+ ]

+ },

+ {

+ "name": "stderr",

+ "output_type": "stream",

+ "text": [

+ "/home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages/transformers/models/qwen3_omni_moe/modeling_qwen3_omni_moe.py:2901: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!\n",

+ " if position_ids.ndim == 3 and position_ids.shape[0] == 4:\n"

+ ]

+ },

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "✅ Talker language model (MoE) successfully converted\n",

+ "✅ Talker model conversion finished. You can find results in Qwen3-Omni-30B-A3B-Instruct\n",

+ "⌛ Convert talker code predictor embedding model\n",

+ "✅ Talker Code Predictor Embedding model successfully converted\n",

+ "⌛ Convert Talker Code Predictor model\n",

+ "✅ Talker Code Predictor model successfully converted\n",

+ "✅ Talker Code Predictor model conversion finished. You can find results in Qwen3-Omni-30B-A3B-Instruct\n",

+ "⌛ Convert code2wav model\n"

+ ]

+ },

+ {

+ "name": "stderr",

+ "output_type": "stream",

+ "text": [

+ "/home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages/transformers/models/qwen3_omni_moe/modeling_qwen3_omni_moe.py:3739: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!\n",

+ " if codes.shape[1] != self.config.num_quantizers:\n",

+ "/home/ethan/intel/openvino_notebooks/py_env/lib/python3.10/site-packages/transformers/masking_utils.py:738: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!\n",

+ " if batch_size != position_ids.shape[0]:\n",

+ "/home/ethan/intel/openvino_notebooks/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py:56: TracerWarning: torch.tensor results are registered as constants in the trace. You can safely ignore this warning if you use this function to create tensors out of constant variables that would be the same every time you call this function. In any other case, this might cause the trace to be incorrect.\n",

+ " ideal_length = (torch.ceil(torch.tensor(n_frames)).to(torch.int64) - 1) * self.stride + (self.kernel_size - self.padding)\n",

+ "/home/ethan/intel/openvino_notebooks/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py:56: UserWarning: To copy construct from a tensor, it is recommended to use sourceTensor.detach().clone() or sourceTensor.detach().clone().requires_grad_(True), rather than torch.tensor(sourceTensor).\n",

+ " ideal_length = (torch.ceil(torch.tensor(n_frames)).to(torch.int64) - 1) * self.stride + (self.kernel_size - self.padding)\n"

+ ]

+ },

+ {

+ "name": "stdout",

+ "output_type": "stream",

+ "text": [

+ "✅ Code2Wav model successfully converted\n",

+ "✅ Qwen/Qwen3-Omni-30B-A3B-Instruct model conversion finished. You can find results in Qwen3-Omni-30B-A3B-Instruct\n"

+ ]

+ }

+ ],

+ "source": [

+ "import nncf\n",

+ "from qwen_3_omni_moe_helper import convert_qwen3_omni_moe_model\n",

+ "\n",

+ "compression_configuration = {\n",

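+ " \"mode\": nncf.CompressWeightsMode.INT4_ASYM,  # asymmetric 4-bit integer weights\n",

+ " \"group_size\": 128,  # each group of 128 weights along a channel shares one scale and zero point\n",

+ " \"ratio\": 0.8,  # ~80% of ratio-defining weights go to INT4, the rest fall back to INT8\n",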

+ "}\n",

+ "\n",

+ "convert_qwen3_omni_moe_model(model_id, model_dir, compression_configuration)"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "43855dca-3ef2-4cea-8fc2-3700f769dd06",

+ "metadata": {},

+ "source": [

+ "## Prepare model inference pipeline\n",

+ "[back to top ⬆️](#Table-of-contents:)\n",

+ "\n",

+ "As discussed, the Qwen3-Omni-MoE model consists of several specialized components: the Vision Encoder, the Audio Encoder, the Thinker (understanding module), the Talker (speech generation module), and the Code2Wav vocoder. In `qwen_3_omni_moe_helper.py`, we define the classes that implement the generation cycle: `OVQwen3OmniMoeThinkerForConditionalGeneration` for the Thinker, `OVQwen3OmniMoeTalkerForConditionalGeneration` for the Talker, and `OVQwen3OmniMoeTalkerCodePredictorModelForConditionalGeneration` for the Talker code predictor. These classes are based on [Hugging Face Transformers `GenerationMixin`](https://huggingface.co/docs/transformers/main_classes/text_generation) and are similar to the [Optimum Intel](https://huggingface.co/docs/optimum/intel/index) `OVModelForCausalLM` class used for LLM inference. The key differences are that they accept multimodal input embeddings and support the MoE architecture. The top-level class `OVQwen3OmniMoeModel` orchestrates the entire pipeline: it processes multimodal input (text, image, video, and audio) and generates both text and speech output."

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "06eada4a-cac9-4501-bed0-059c4e585d57",

+ "metadata": {},

+ "source": [

+ "### Select device\n",

+ "[back to top ⬆️](#Table-of-contents:)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 6,

+ "id": "e56db20f-7cf0-4ead-b6af-8e048e61b059",

+ "metadata": {},

+ "outputs": [

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "fd59e3e961eb409a9f46c5b447d3359f",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Dropdown(description='Thinker device', options=('CPU', 'AUTO'), value='CPU')"

+ ]

+ },

+ "execution_count": 6,

+ "metadata": {},

+ "output_type": "execute_result"

+ }

+ ],

+ "source": [

+ "from notebook_utils import device_widget\n",

+ "\n",

+ "thinker_device = device_widget(default=\"CPU\", exclude=[\"NPU\"], description=\"Thinker device\")\n",

+ "\n",

+ "thinker_device"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 7,

+ "id": "1d5d9f46-4d35-444e-ac46-cef1cf70bb0c",

+ "metadata": {},

+ "outputs": [

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "ac5adb59a32143ce9868904709c00413",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Dropdown(description='Talker device', options=('CPU', 'AUTO'), value='CPU')"

+ ]

+ },

+ "execution_count": 7,

+ "metadata": {},

+ "output_type": "execute_result"

+ }

+ ],

+ "source": [

+ "talker_device = device_widget(default=\"CPU\", exclude=[\"NPU\"], description=\"Talker device\")\n",

+ "\n",

+ "talker_device"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 8,

+ "id": "89a32bac-4b33-4bbb-abc6-3ea8b4ef60e7",

+ "metadata": {},

+ "outputs": [

+ {

+ "data": {

+ "application/vnd.jupyter.widget-view+json": {

+ "model_id": "f10d0b7fd996426db89faaf8100a9d20",

+ "version_major": 2,

+ "version_minor": 0

+ },

+ "text/plain": [

+ "Dropdown(description='Code2Wav device', options=('CPU', 'AUTO'), value='CPU')"

+ ]

+ },

+ "execution_count": 8,

+ "metadata": {},

+ "output_type": "execute_result"

+ }

+ ],

+ "source": [

+ "code2wav_device = device_widget(default=\"CPU\", exclude=[\"NPU\"], description=\"Code2Wav device\")\n",

+ "\n",

+ "code2wav_device"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "523fdc7d-7c4c-4f91-9c48-42fc2e39210a",

+ "metadata": {},

+ "source": [

+ "### Initialize model tasks\n",

+ "[back to top ⬆️](#Table-of-contents:)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 9,

+ "id": "8d1cbb31",

+ "metadata": {},

+ "outputs": [

+ {

+ "name": "stderr",

+ "output_type": "stream",

+ "text": [

+ "Unrecognized keys in `rope_scaling` for 'rope_type'='default': {'interleaved', 'mrope_interleaved', 'mrope_section'}\n",

+ "Unrecognized keys in `rope_scaling` for 'rope_type'='default': {'interleaved', 'mrope_section'}\n"

+ ]

+ }

+ ],

+ "source": [

+ "from transformers import Qwen3OmniMoeProcessor\n",

+ "from qwen_3_omni_moe_helper import OVQwen3OmniMoeModel\n",

+ "\n",

+ "ov_model = OVQwen3OmniMoeModel(model_dir, thinker_device=thinker_device.value, talker_device=talker_device.value, code2wav_device=code2wav_device.value)\n",

+ "processor = Qwen3OmniMoeProcessor.from_pretrained(model_dir)"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "8328887c",

+ "metadata": {},

+ "source": [

+ "## Run model inference\n",

+ "[back to top ⬆️](#Table-of-contents:)\n",

+ "\n",

+ "Let's explore model capabilities using multimodal input and output."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "b3424ef7-f254-4199-97eb-62e4c7feb728",

+ "metadata": {},

+ "outputs": [

+ {

+ "name": "stderr",

+ "output_type": "stream",

+ "text": [

+ "Setting `pad_token_id` to `eos_token_id`:151645 for open-end generation.\n",

+ "The following generation flags are not valid and may be ignored: ['temperature']. Set `TRANSFORMERS_VERBOSITY=info` for more details.\n",

+ "The attention mask and the pad token id were not set. As a consequence, you may observe unexpected behavior. Please pass your input's `attention_mask` to obtain reliable results.\n",

+ "Setting `pad_token_id` to `eos_token_id`:2150 for open-end generation.\n",

+ "The attention mask is not set and cannot be inferred from input because pad token is same as eos token. As a consequence, you may observe unexpected behavior. Please pass your input's `attention_mask` to obtain reliable results.\n"

+ ]

+ }

+ ],

+ "source": [

+ "from qwen_omni_utils import process_mm_info\n",

+ "import soundfile as sf\n",

+ "from notebook_utils import download_file\n",

+ "\n",

+ "if not Path(\"cars.jpg\").exists():\n",

+ " download_file(\"https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen3-Omni/demo/cars.jpg\", \"cars.jpg\")\n",

+ "\n",

+ "if not Path(\"cough.wav\").exists():\n",

+ " download_file(\"https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen3-Omni/demo/cough.wav\", \"cough.wav\")\n",

+ "\n",

+ "conversation = [\n",

+ " {\n",

+ " \"role\": \"user\",\n",

+ " \"content\": [\n",

+ " {\"type\": \"image\", \"image\": \"cars.jpg\"},\n",

+ " {\"type\": \"audio\", \"audio\": \"cough.wav\"},\n",

+ " {\"type\": \"text\", \"text\": \"What can you see and hear? Answer in one short sentence.\"}\n",

+ " ],\n",

+ " },\n",

+ "]\n",

+ "\n",

+ "# Whether to use the audio track of input videos\n",

+ "USE_AUDIO_IN_VIDEO = True\n",

+ "\n",

+ "# Preparation for inference\n",

+ "text = processor.apply_chat_template(conversation, add_generation_prompt=True, tokenize=False)\n",

+ "audios, images, videos = process_mm_info(conversation, use_audio_in_video=USE_AUDIO_IN_VIDEO)\n",

+ "inputs = processor(text=text, \n",

+ " audio=audios, \n",

+ " images=images, \n",

+ " videos=videos, \n",

+ " return_tensors=\"pt\", \n",

+ " padding=True, \n",

+ " use_audio_in_video=USE_AUDIO_IN_VIDEO)\n",

+ "\n",

+ "# Inference: Generation of the output text and audio\n",

+ "text_ids, audio = ov_model.generate(**inputs, \n",

+ " speaker=\"Ethan\", \n",

+ " thinker_return_dict_in_generate=True,\n",

+ " use_audio_in_video=USE_AUDIO_IN_VIDEO)\n",

+ "\n",

+ "text = processor.batch_decode(text_ids.sequences[:, inputs[\"input_ids\"].shape[1] :],\n",

+ " skip_special_tokens=True,\n",

+ " clean_up_tokenization_spaces=False)\n",

+ "print(text)\n",

+ "if audio is not None:\n",

+ " sf.write(\n",

+ " \"output_ov.wav\",\n",

+ " audio.reshape(-1).detach().cpu().numpy(),\n",

+ " samplerate=24000,\n",

+ " )\n"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "1ff8cc97",

+ "metadata": {},

+ "source": [

+ "## Interactive demo\n",

+ "[back to top ⬆️](#Table-of-contents:)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 15,

+ "id": "e01645bc",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from gradio_helper import make_demo\n",

+ "\n",

+ "demo = make_demo(ov_model, processor)\n",

+ "\n",

+ "try:\n",

+ " demo.launch(debug=True)\n",

+ "except Exception:\n",

+ " demo.launch(debug=True, share=True)\n",

+ "# if you are launching remotely, specify server_name and server_port\n",

+ "# demo.launch(server_name='your server name', server_port='server port in int')\n",

+ "# Read more in the docs: https://gradio.app/docs/"

+ ]

+ }

+ ],

+ "metadata": {

+ "kernelspec": {

+ "display_name": "Python 3 (ipykernel)",

+ "language": "python",

+ "name": "python3"

+ },

+ "language_info": {

+ "codemirror_mode": {

+ "name": "ipython",

+ "version": 3

+ },

+ "file_extension": ".py",

+ "mimetype": "text/x-python",

+ "name": "python",

+ "nbconvert_exporter": "python",

+ "pygments_lexer": "ipython3",

+ "version": "3.10.12"

+ },

+ "openvino_notebooks": {

+ "imageUrl": "https://github.com/user-attachments/assets/600798db-c80e-4d1d-ab00-fa945cdcd583",

+ "tags": {

+ "categories": [

+ "Model Demos",

+ "AI Trends"

+ ],

+ "libraries": [],

+ "other": [],

+ "tasks": [

+ "Visual Question Answering",

+ "Image-to-Text",

+ "Text Generation",

+ "Audio Generation",

+ "Speech Recognition"

+ ]

+ }

+ },

+ "widgets": {

+ "application/vnd.jupyter.widget-state+json": {

+ "state": {

+ "05134cea5e3b46968b24749f435cc8da": {

+ "model_module": "@jupyter-widgets/base",

+ "model_module_version": "2.0.0",

+ "model_name": "LayoutModel",

+ "state": {}

+ },

+ "1606efa359604bd09f079d6ad29507b0": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DropdownModel",

+ "state": {

+ "_options_labels": [

+ "Qwen/Qwen2.5-Omni-3B",

+ "Qwen/Qwen2.5-Omni-7B"

+ ],

+ "description": "Model:",

+ "index": 0,

+ "layout": "IPY_MODEL_768b5ab84f8b4ba499f11eb89bb67aa0",

+ "style": "IPY_MODEL_b6bd1a60172b419783cba12ee8fc5662"

+ }

+ },

+ "2f09cede86e64e799a1f0baa7dd1ca7d": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DescriptionStyleModel",

+ "state": {

+ "description_width": ""

+ }

+ },

+ "5eeec30c85334a718fde4a2cc6bd630b": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DescriptionStyleModel",

+ "state": {

+ "description_width": ""

+ }

+ },

+ "767dd31f417948aca84f756d95786707": {

+ "model_module": "@jupyter-widgets/base",

+ "model_module_version": "2.0.0",

+ "model_name": "LayoutModel",

+ "state": {}

+ },

+ "768b5ab84f8b4ba499f11eb89bb67aa0": {

+ "model_module": "@jupyter-widgets/base",

+ "model_module_version": "2.0.0",

+ "model_name": "LayoutModel",

+ "state": {}

+ },

+ "96c78b29b9a54e78847100ac4619bc0d": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DropdownModel",

+ "state": {

+ "_options_labels": [

+ "CPU",

+ "AUTO"

+ ],

+ "description": "Talker device",

+ "index": 1,

+ "layout": "IPY_MODEL_767dd31f417948aca84f756d95786707",

+ "style": "IPY_MODEL_5eeec30c85334a718fde4a2cc6bd630b"

+ }

+ },

+ "9cc862536027461f91fc3b0ffd782bd2": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DropdownModel",

+ "state": {

+ "_options_labels": [

+ "CPU",

+ "AUTO"

+ ],

+ "description": "Token2Wav device",

+ "index": 0,

+ "layout": "IPY_MODEL_e4c6ac0c3b5741f8bd7000b9eaadfce9",

+ "style": "IPY_MODEL_2f09cede86e64e799a1f0baa7dd1ca7d"

+ }

+ },

+ "b135b255a1ce4fd1bf4311284797568d": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DescriptionStyleModel",

+ "state": {

+ "description_width": ""

+ }

+ },

+ "b6bd1a60172b419783cba12ee8fc5662": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DescriptionStyleModel",

+ "state": {

+ "description_width": ""

+ }

+ },

+ "e153361cb39f4c5d8ef1cecf7df58e41": {

+ "model_module": "@jupyter-widgets/controls",

+ "model_module_version": "2.0.0",

+ "model_name": "DropdownModel",

+ "state": {

+ "_options_labels": [

+ "CPU",

+ "AUTO"

+ ],

+ "description": "Thinker device",

+ "index": 1,

+ "layout": "IPY_MODEL_05134cea5e3b46968b24749f435cc8da",

+ "style": "IPY_MODEL_b135b255a1ce4fd1bf4311284797568d"

+ }

+ },

+ "e4c6ac0c3b5741f8bd7000b9eaadfce9": {

+ "model_module": "@jupyter-widgets/base",

+ "model_module_version": "2.0.0",

+ "model_name": "LayoutModel",

+ "state": {}

+ }

+ },

+ "version_major": 2,

+ "version_minor": 0

+ }

+ }

+ },

+ "nbformat": 4,

+ "nbformat_minor": 5

+}

diff --git a/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py b/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py

new file mode 100644

index 00000000000..44754d7a486

--- /dev/null

+++ b/notebooks/qwen3-omni-chatbot/qwen_3_omni_moe_helper.py

@@ -0,0 +1,2870 @@

+import gc

+import types

+from dataclasses import dataclass

+from pathlib import Path

+from typing import Any, Callable, Dict, Optional, Tuple, Union

+from torch.nn import functional as F

+import numpy as np

+import nncf

+import openvino as ov

+import torch

+from huggingface_hub import snapshot_download

+from torch import nn

+from transformers import (

+ AutoConfig,

+ AutoProcessor,

+ Qwen3OmniMoeForConditionalGeneration,

+ masking_utils,

+)

+from transformers.cache_utils import Cache, DynamicCache, DynamicLayer

+from transformers.generation import GenerationConfig, GenerationMixin

+from transformers.modeling_outputs import (

+ BaseModelOutput,

+ CausalLMOutputWithPast,

+ MoeCausalLMOutputWithPast,

+)

+from transformers.models.qwen3_omni_moe.configuration_qwen3_omni_moe import (

+ Qwen3OmniMoeConfig,

+)

+from transformers.models.qwen3_omni_moe.modeling_qwen3_omni_moe import (

+ Qwen3OmniMoeTalkerCodePredictorOutputWithPast,

+ Qwen3OmniMoeTalkerOutputWithPast,

+ Qwen3OmniMoeThinkerTextSparseMoeBlock,

+ Qwen3OmniMoeThinkerCausalLMOutputWithPast,

+ Qwen3OmniMoeTalkerTextSparseMoeBlock,

+ Qwen3OmniMoeVisionRotaryEmbedding,

+ Qwen3OmniMoeCausalConvNet,

+)

+from transformers.utils import is_torch_xpu_available, is_torchdynamo_compiling

+

+try:

+ from openvino import opset13

+except ImportError:

+ from openvino.runtime import opset13

+

+from openvino.frontend.pytorch.patch_model import __make_16bit_traceable

+from transformers.masking_utils import ALL_MASK_ATTENTION_FUNCTIONS

+

+# logging.basicConfig(level=logging.DEBUG)

+

+

+def _new_get_extra_padding_for_conv1d(self, hidden_state: torch.Tensor) -> int:

+ length = hidden_state.shape[-1]

+ n_frames = (length - self.kernel_size + self.padding) / self.stride + 1

+ # original implementation with math.ceil

+ # ideal_length = (math.ceil(n_frames) - 1) * self.stride + (self.kernel_size - self.padding)

+ ideal_length = (torch.ceil(torch.tensor(n_frames)).to(torch.int64) - 1) * self.stride + (self.kernel_size - self.padding)
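+ # e.g. with hypothetical values length=9, kernel_size=4, stride=2, padding=2:
+ # n_frames = (9 - 4 + 2) / 2 + 1 = 4.5, ideal_length = (ceil(4.5) - 1) * 2 + (4 - 2) = 10,
+ # so 10 - 9 = 1 extra frame of padding is returned.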

+

+ return ideal_length - length

+

+def patched_dynamic_layer_update(

+ self, key_states: torch.Tensor, value_states: torch.Tensor, cache_kwargs: dict[str, Any] | None = None

+) -> tuple[torch.Tensor, torch.Tensor]:

+ if self.keys is None:

+ self.keys = key_states

+ self.values = value_states

+ self.device = key_states.device

+ self.dtype = key_states.dtype

+ self.is_initialized = True

+ else:

+ self.keys = torch.cat([self.keys, key_states], dim=-2)

+ self.values = torch.cat([self.values, value_states], dim=-2)

+ return self.keys, self.values

+

+DynamicLayer.update = patched_dynamic_layer_update

+

+def find_packed_sequence_indices(position_ids: torch.Tensor) -> torch.Tensor:

+ """

+ Find the indices of the sequence to which each new query token in the sequence belongs when using packed

+ tensor format (i.e. several sequences packed in the same batch dimension).

+

+ Args:

+ position_ids (`torch.Tensor`)

+ A 2D tensor of shape (batch_size, query_length) indicating the positions of each token in the sequences.

+

+ Returns:

+ A 2D tensor where each similar integer indicates that the tokens belong to the same sequence. For example, if we

+ pack 3 sequences of 2, 3 and 1 tokens respectively along a single batch dim, this will return [[0, 0, 1, 1, 1, 2]].

+ """

+ # Different sequences are separated wherever 2 consecutive position_ids do not differ by exactly 1. So

+ # taking the diff (by prepending the first value - 1 to keep correct indexing) and applying cumsum to the result

+ # gives exactly the sequence indices

+ # Note that we assume that a single sequence cannot span several batch dimensions, i.e. 1 single sequence

+ # cannot be part of the end of the first batch dim and the start of the 2nd one for example
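+ # e.g. position_ids [[0, 1, 0, 1, 2, 0]] give position_diff [[1, 1, -1, 1, 1, -2]],
+ # so (position_diff != 1).cumsum(-1) is [[0, 0, 1, 1, 1, 2]].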

+ first_dummy_value = position_ids[:, :1] - 1 # We just need the diff on this first value to be 1

+ # Alternative implementation using concat instead of prepend

+ position_diff = torch.cat([torch.ones_like(first_dummy_value), position_ids[:, 1:] - position_ids[:, :-1]], dim=-1)

+ packed_sequence_mask = (position_diff != 1).cumsum(-1)

+

+ # Here it would be nice to return None if we did not detect packed sequence format, i.e. if `packed_sequence_mask[:, -1] == 0`

+ # but it causes issues with export

+ return packed_sequence_mask

+

+masking_utils.find_packed_sequence_indices = find_packed_sequence_indices

+

+def patch_cos_sin_cached_fp32(model):

+ if (

+ hasattr(model, "layers")

+ and hasattr(model.layers[0], "self_attn")

+ and hasattr(model.layers[0].self_attn, "rotary_emb")

+ and hasattr(model.layers[0].self_attn.rotary_emb, "dtype")

+ and hasattr(model.layers[0].self_attn.rotary_emb, "inv_freq")

+ and hasattr(model.layers[0].self_attn.rotary_emb, "max_position_embeddings")

+ and hasattr(model.layers[0].self_attn.rotary_emb, "_set_cos_sin_cache")

+ ):

+ for layer in model.layers:

+ if layer.self_attn.rotary_emb.dtype != torch.float32:

+ layer.self_attn.rotary_emb._set_cos_sin_cache(

+ seq_len=layer.self_attn.rotary_emb.max_position_embeddings,

+ device=layer.self_attn.rotary_emb.inv_freq.device,

+ dtype=torch.float32,

+ )

+

+def causal_mask_function(batch_idx: int, head_idx: int, q_idx: int, kv_idx: int) -> bool:

+ """

+ This creates a basic lower-diagonal causal mask.

+ """

+ return kv_idx <= q_idx

+

+def prepare_padding_mask(

+ attention_mask: Optional[torch.Tensor], kv_length: int, kv_offset: int, _slice: bool = True

+) -> Optional[torch.Tensor]:

+ """

+ From the 2D attention mask, prepare the correct padding mask to use by potentially padding it, and slicing

+ according to the `kv_offset` if `_slice` is `True`.

+ """

+ local_padding_mask = attention_mask

+ if attention_mask is not None:

+ # Pad it if necessary

+ if (padding_length := kv_length + kv_offset - attention_mask.shape[-1]) > 0:

+ local_padding_mask = torch.nn.functional.pad(attention_mask, (0, padding_length))

+ # For flex, we should not slice them, only use an offset

+ if _slice:

+ # Equivalent to: `local_padding_mask = attention_mask[:, kv_offset : kv_offset + kv_length]`,

+ # but without data-dependent slicing (i.e. torch.compile friendly)

+ mask_indices = torch.arange(kv_length, device=local_padding_mask.device)

+ mask_indices += kv_offset

+ local_padding_mask = local_padding_mask[:, mask_indices]

+ return local_padding_mask

+

+def and_masks(*mask_functions: list[Callable]) -> Callable:

+ """Returns a mask function that is the intersection of provided mask functions"""

+ if not all(callable(arg) for arg in mask_functions):

+ raise RuntimeError(f"All inputs should be callable mask_functions: {mask_functions}")

+

+ def and_mask(batch_idx, head_idx, q_idx, kv_idx):

+ result = q_idx.new_ones((), dtype=torch.bool)

+ for mask in mask_functions:

+ result = result & mask(batch_idx, head_idx, q_idx, kv_idx).to(result.device)

+ return result

+

+ return and_mask

+

+def padding_mask_function(padding_mask: torch.Tensor) -> Callable:

+ """

+ This returns the mask function corresponding to a 2D padding mask.

+ """

+

+ def inner_mask(batch_idx: int, head_idx: int, q_idx: int, kv_idx: int) -> bool:

+ # Note that here the mask should ALWAYS be at least of the max `kv_index` size in the dimension 1. This is because

+ # we cannot pad it here in the mask_function as we don't know the final size, and we cannot try/except, as it is not

+ # vectorizable on accelerator devices

+ return padding_mask[batch_idx, kv_idx]

+

+ return inner_mask

+

+def _ignore_causal_mask_sdpa(

+ padding_mask: Optional[torch.Tensor],

+ query_length: int,

+ kv_length: int,

+ kv_offset: int,

+ local_attention_size: Optional[int] = None,

+) -> bool:

+ """

+ Detects whether the causal mask can be ignored when PyTorch's SDPA is used, relying on SDPA's `is_causal` argument instead.

+

+ If no token is masked in the 2D `padding_mask` argument and either `query_length == 1` or

+ `key_value_length == query_length`, we rely on SDPA's `is_causal` argument to select causal/non-causal masks instead,

+ which allows dispatching to the flash attention kernel (which cannot otherwise be used if a custom `attn_mask` is

+ passed).

+ """

+ is_tracing = torch.jit.is_tracing() or isinstance(padding_mask, torch.fx.Proxy) or is_torchdynamo_compiling()

+ if padding_mask is not None and padding_mask.shape[-1] > kv_length:

+ mask_indices = torch.arange(kv_length, device=padding_mask.device)

+ mask_indices += kv_offset

+ padding_mask = padding_mask[:, mask_indices]

+

+ # When using `torch.export` or `torch.onnx.dynamo_export`, we must pass an example input, and `is_causal` behavior is

+ # hard-coded to the forward. If a user exports a model with query_length > 1, the exported model will hard-code `is_causal=True`

+ # which is in general wrong (see https://github.com/pytorch/pytorch/issues/108108). Thus, we only set

+ # `ignore_causal_mask = True` if we are not tracing

+ if (

+ not is_tracing

+ # only cases when lower and upper diags are the same, see https://github.com/pytorch/pytorch/issues/108108

+ and (query_length == 1 or (kv_length == query_length or is_torch_xpu_available()))

+ # in this case we need to add special patterns to the mask so cannot be skipped otherwise

+ and (local_attention_size is None or kv_length < local_attention_size)

+ # In this case, we need to add padding to the mask, so cannot be skipped otherwise

+ and (

+ padding_mask is None

+ or (

+ padding_mask.all()

+ if not is_torch_xpu_available() or query_length == 1

+ else padding_mask[:, :query_length].all()

+ )

+ )

+ ):

+ return True

+

+ return False

+

+def sdpa_mask_without_vmap(

+ batch_size: int,

+ cache_position: torch.Tensor,

+ kv_length: int,

+ kv_offset: int = 0,

+ mask_function: Optional[Callable] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ local_size: Optional[int] = None,

+ allow_is_causal_skip: bool = True,

+ **kwargs,

+) -> Optional[torch.Tensor]:

+ if mask_function is None:

+ mask_function = causal_mask_function

+

+ q_length = cache_position.shape[0]

+ # Potentially pad the 2D mask, and slice it correctly

+ padding_mask = prepare_padding_mask(attention_mask, kv_length, kv_offset, _slice=False)

+

+ # Under specific conditions, we can avoid materializing the mask, instead relying on the `is_causal` argument

+ if allow_is_causal_skip and _ignore_causal_mask_sdpa(padding_mask, q_length, kv_length, kv_offset, local_size):

+ return None

+

+ # Potentially add the padding 2D mask

+ if padding_mask is not None:

+ mask_function = and_masks(mask_function, padding_mask_function(padding_mask))

+

+ # Create broadcastable indices

+ device = cache_position.device

+ q_indices = cache_position[None, None, :, None]

+ head_indices = torch.arange(1, dtype=torch.long, device=device)[None, :, None, None]

+ batch_indices = torch.arange(batch_size, dtype=torch.long, device=device)[:, None, None, None]

+ kv_indices = torch.arange(kv_length, dtype=torch.long, device=device)[None, None, None, :] + kv_offset

+

+ # Apply mask function element-wise through broadcasting

+ causal_mask = mask_function(batch_indices, head_indices, q_indices, kv_indices)

+ # Expand the mask to match batch size and query length if they weren't used in the mask function

+ causal_mask = causal_mask.expand(batch_size, -1, q_length, kv_length)
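+ # e.g. batch_size=1, cache_position=[0, 1, 2], kv_length=3, kv_offset=0, no padding mask and the default
+ # causal_mask_function give a [1, 1, 3, 3] boolean mask whose last two dims are lower-triangular:
+ # [[True, False, False], [True, True, False], [True, True, True]]
+ # (this point is only reached when the mask is materialized, e.g. while tracing or with allow_is_causal_skip=False)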

+

+ return causal_mask

+

+# Adapted from https://github.com/huggingface/transformers/blob/v4.53.0/src/transformers/masking_utils.py#L433

+# Specifically for OpenVINO, we use torch.finfo(torch.float16).min instead of torch.finfo(dtype).min

+def eager_mask_without_vmap(*args, **kwargs) -> Optional[torch.Tensor]:

+ kwargs.pop("allow_is_causal_skip", None)

+ dtype = kwargs.get("dtype", torch.float32)

+ mask = sdpa_mask_without_vmap(*args, allow_is_causal_skip=False, **kwargs)

+ # we use torch.finfo(torch.float16).min instead of torch.finfo(dtype).min to avoid an overflow, but we are not

+ # sure this is the right way to handle this: we are basically pretending that -65,504 is -inf

+ mask = torch.where(

+ mask,

+ torch.tensor(0.0, device=mask.device, dtype=dtype),

+ torch.tensor(torch.finfo(torch.float16).min, device=mask.device, dtype=dtype),

+ )
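+ # e.g. a boolean mask [[True, False]] becomes [[0.0, -65504.0]], where -65504.0 is torch.finfo(torch.float16).min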

+ return mask

+

+

+# for OpenVINO, we use torch.finfo(torch.float16).min instead of torch.finfo(dtype).min

+# although we are not sure this is the right way to handle this: we are basically pretending that -65,504 is -inf

+ALL_MASK_ATTENTION_FUNCTIONS.register("eager", eager_mask_without_vmap)

+

+# for decoder models, we use eager mask without vmap for sdpa as well

+# to avoid a nan output issue in OpenVINO that only happens in case of:

+# non-stateful models on cpu and stateful models on npu

+ALL_MASK_ATTENTION_FUNCTIONS.register("sdpa", sdpa_mask_without_vmap)

+

+

+def model_has_state(ov_model: ov.Model):

+ return len(ov_model.get_sinks()) > 0

+

+

+def model_has_input_output_name(ov_model: ov.Model, name: str):

+ """

+ Helper function that checks whether the model has an input or output with the specified name

+ """

+ return name in sum([list(t.get_names()) for t in ov_model.inputs + ov_model.outputs], [])

+

+

+def fuse_cache_reorder(

+ ov_model: ov.Model,

+ not_kv_inputs: list[str],

+ key_value_input_names: list[str],

+ gather_dim: int,

+):

+ """

+ Fuses reordered cache during generate cycle into ov.Model for MoE models.

+ """

+ if model_has_input_output_name(ov_model, "beam_idx"):

+ raise ValueError("Model already has fused cache")

+ input_batch = ov_model.input("inputs_embeds").get_partial_shape()[0]

+ beam_idx = opset13.parameter(name="beam_idx", dtype=ov.Type.i32, shape=ov.PartialShape([input_batch]))

+ beam_idx.output(0).get_tensor().add_names({"beam_idx"})

+ ov_model.add_parameters([beam_idx])

+ not_kv_inputs.append(ov_model.inputs[-1])

+

+ for input_name in key_value_input_names:

+ parameter_output_port = ov_model.input(input_name)

+ consumers = parameter_output_port.get_target_inputs()

+ gather = opset13.gather(parameter_output_port, beam_idx, opset13.constant(gather_dim))

+ for consumer in consumers:

+ consumer.replace_source_output(gather.output(0))

+ ov_model.validate_nodes_and_infer_types()

+

+

+def build_state_initializer(ov_model: ov.Model, batch_dim: int):

+ """

+ Builds the ShapeOf-based initialization expression for all ReadValue ops in MoE models

+ """

+ input_ids = ov_model.input("inputs_embeds")

+ batch = opset13.gather(

+ opset13.shape_of(input_ids, output_type="i64"),

+ opset13.constant([0]),

+ opset13.constant(0),

+ )

+ for op in ov_model.get_ops():

+ if op.get_type_name() == "ReadValue":

+ dims = [dim.min_length for dim in list(op.get_output_partial_shape(0))]

+ dims[batch_dim] = batch

+ dims = [(opset13.constant(np.array([dim], dtype=np.int64)) if isinstance(dim, int) else dim) for dim in dims]

+ shape = opset13.concat(dims, axis=0)

+ broadcast = opset13.broadcast(opset13.constant(0.0, dtype=op.get_output_element_type(0)), shape)

+ op.set_arguments([broadcast])

+ ov_model.validate_nodes_and_infer_types()

+

+

+def make_stateful(

+ ov_model: ov.Model,

+ not_kv_inputs: list[str],

+ key_value_input_names: list[str],

+ key_value_output_names: list[str],

+ batch_dim: int,

+ num_attention_heads: int,

+ num_beams_and_batch: int = None,

+):

+ """

+ Hides kv-cache inputs and outputs inside the MoE model as variables.

+ """

+ from openvino._offline_transformations import apply_make_stateful_transformation

+

+ input_output_map = {}

+

+ if num_beams_and_batch is not None:

+ for input in not_kv_inputs:

+ shape = input.get_partial_shape()

+ if shape.rank.get_length() <= 2:

+ shape[0] = num_beams_and_batch

+ input.get_node().set_partial_shape(shape)

+

+ for kv_name_pair in zip(key_value_input_names, key_value_output_names):

+ input_output_map[kv_name_pair[0]] = kv_name_pair[1]

+ if num_beams_and_batch is not None:

+ input = ov_model.input(kv_name_pair[0])

+ shape = input.get_partial_shape()

+ shape[batch_dim] = num_beams_and_batch * num_attention_heads

+ input.get_node().set_partial_shape(shape)

+

+ if num_beams_and_batch is not None:

+ ov_model.validate_nodes_and_infer_types()

+

+ apply_make_stateful_transformation(ov_model, input_output_map)

+ if num_beams_and_batch is None:

+ build_state_initializer(ov_model, batch_dim)

+

+

+def patch_stateful(ov_model, dim):

+ key_value_input_names = [key.get_any_name() for key in ov_model.inputs[2:-1]]

+ key_value_output_names = [key.get_any_name() for key in ov_model.outputs[dim:]]

+ not_kv_inputs = [input for input in ov_model.inputs if not any(name in key_value_input_names for name in input.get_names())]

+ if not key_value_input_names or not key_value_output_names:

+ return

+ batch_dim = 0

+ num_attention_heads = 1

+

+ fuse_cache_reorder(ov_model, not_kv_inputs, key_value_input_names, batch_dim)

+ make_stateful(

+ ov_model,

+ not_kv_inputs,

+ key_value_input_names,

+ key_value_output_names,

+ batch_dim,

+ num_attention_heads,

+ None,

+ )

+

+

+def cleanup_torchscript_cache():

+ """

+ Helper for removing cached model representation

+ """

+ torch._C._jit_clear_class_registry()

+ torch.jit._recursive.concrete_type_store = torch.jit._recursive.ConcreteTypeStore()

+ torch.jit._state._clear_class_state()

+

+

+core = ov.Core()

+

+# File naming conventions for Qwen3OmniMoe

+THINKER_LANGUAGE_NAME = "openvino_thinker_language_model.xml"

+THINKER_AUDIO_NAME = "openvino_thinker_audio_model.xml"

+THINKER_AUDIO_STATE_NAME = "openvino_thinker_audio_state_model.xml"

+THINKER_VISION_NAME = "openvino_thinker_vision_model.xml"

+THINKER_VISION_POS_NAME = "openvino_thinker_vision_pos_model.xml"

+THINKER_VISION_MERGER_NAME = "openvino_thinker_vision_merger_model.xml"

+THINKER_EMBEDDING_NAME = "openvino_thinker_embedding_model.xml"

+THINKER_PATCHER_NAME = "openvino_thinker_patcher_model.xml"

+THINKER_MERGER_NAME = "openvino_thinker_merger_model.xml"

+

+TALKER_LANGUAGE_NAME = "openvino_talker_language_model.xml"

+TALKER_EMBEDDING_NAME = "openvino_talker_embedding_model.xml"

+TALKER_TEXT_PROJECTION_NAME = "openvino_talker_text_projection_model.xml"

+TALKER_HIDDEN_PROJECTION_NAME = "openvino_talker_hidden_projection_model.xml"

+

+TALKER_CODE_PREDICTOR_EMBEDDING_NAME = "openvino_talker_code_predictor_embedding_model.xml"

+TALKER_CODE_PREDICTOR_NAME = "openvino_talker_code_predictor_model.xml"

+

+CODE2WAV_NAME = "openvino_code2wav_model.xml"

+

+

+def _get_feat_extract_output_lengths(input_lengths):

+ """

+ Computes the output length of the convolutional layers and the output length of the audio encoder
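+ Each full 100-frame chunk yields 13 output frames (100 -> 50 -> 25 -> 13 through three stride-2 stages),
+ and the remainder goes through the same stages, e.g. 150 input frames give 13 + 7 = 20 (50 -> 25 -> 13 -> 7).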

+ """

+

+ input_lengths_leave = input_lengths % 100

+ feat_lengths = (input_lengths_leave - 1) // 2 + 1

+ output_lengths = ((feat_lengths - 1) // 2 + 1 - 1) // 2 + 1 + (input_lengths // 100) * 13

+ return output_lengths
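The formula in `_get_feat_extract_output_lengths` can be read as chunked downsampling: the input is split into 100-frame chunks, and each chunk passes through three layers that each map a length `n` to `(n - 1) // 2 + 1` (that is, `ceil(n / 2)`, which matches a convolution with kernel 3, stride 2, padding 1; the exact layer configuration is an assumption here, inferred from the formula). The sketch below restates it that way and checks it against a copy of the original expression.

```python
def conv_stride2_len(n: int) -> int:
    # Output length of one stride-2 layer (assumed kernel 3, padding 1): ceil(n / 2).
    return (n - 1) // 2 + 1


def chunked_output_length(n: int, chunk: int = 100) -> int:
    full_chunks, remainder = divmod(n, chunk)
    # A full chunk shrinks 100 -> 50 -> 25 -> 13.
    per_chunk = conv_stride2_len(conv_stride2_len(conv_stride2_len(chunk)))
    tail = conv_stride2_len(conv_stride2_len(conv_stride2_len(remainder)))
    return full_chunks * per_chunk + tail


def original_formula(input_lengths: int) -> int:
    # Copy of _get_feat_extract_output_lengths, for comparison.
    input_lengths_leave = input_lengths % 100
    feat_lengths = (input_lengths_leave - 1) // 2 + 1
    return ((feat_lengths - 1) // 2 + 1 - 1) // 2 + 1 + (input_lengths // 100) * 13
```

For example, 150 input frames give one full chunk (13 tokens) plus a 50-frame tail (50 -> 25 -> 13 -> 7), so 20 tokens in total.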

+

+

+def qwen3_moe_thinker_forward_patched(self, hidden_states: torch.Tensor) -> Tuple[torch.Tensor, torch.Tensor]:

+ batch_size, sequence_length, hidden_dim = hidden_states.shape

+ hidden_states = hidden_states.view(-1, hidden_dim)

+ # router_logits: (batch * sequence_length, n_experts)

+ router_logits = self.gate(hidden_states)

+

+ routing_weights = torch.nn.functional.softmax(router_logits, dim=1, dtype=torch.float)

+ routing_weights, selected_experts = torch.topk(routing_weights, self.top_k, dim=-1)

+ if self.norm_topk_prob: # only diff with mixtral sparse moe block!

+ routing_weights /= routing_weights.sum(dim=-1, keepdim=True)

+ # we cast back to the input dtype

+ routing_weights = routing_weights.to(hidden_states.dtype)

+

+ final_hidden_states = torch.zeros(

+ (batch_size * sequence_length, hidden_dim), dtype=hidden_states.dtype, device=hidden_states.device

+ )

+

+ # expert_mask: [num_experts, top_k, batch*seq_len]

+ expert_mask = torch.nn.functional.one_hot(selected_experts, num_classes=self.num_experts).permute(2, 1, 0)

+

+ # Use arithmetic masking instead of torch.where + index_add_ to avoid NonZero ops

+ # that produce data-dependent shapes and crash OpenVINO apply_moc_transformations.

+ for expert_idx in range(self.num_experts):

+ expert_layer = self.experts[expert_idx]

+ # token_weights: [batch*seq_len] — routing weight for this expert per token (0 if not routed here)

+ token_weights = (expert_mask[expert_idx].to(routing_weights.dtype) * routing_weights.T).sum(0)

+ current_hidden_states = expert_layer(hidden_states) * token_weights.unsqueeze(-1)

+ final_hidden_states = final_hidden_states + current_hidden_states.to(hidden_states.dtype)

+

+ final_hidden_states = final_hidden_states.reshape(batch_size, sequence_length, hidden_dim)

+ return final_hidden_states, router_logits
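The comment in the patched forward says arithmetic masking replaces `torch.where` + `index_add_`. The sketch below, using toy `nn.Linear` experts and random routing (all sizes are arbitrary illustration values), compares the two formulations. The masked version runs every expert on every token and multiplies unrouted tokens by 0, so all shapes are static. The gathered version produces index tensors whose length depends on the routing, which is the data-dependent shape the comment refers to.

```python
import torch

torch.manual_seed(0)
num_experts, top_k, hidden_dim, tokens = 4, 2, 8, 5
experts = [torch.nn.Linear(hidden_dim, hidden_dim) for _ in range(num_experts)]

hidden_states = torch.randn(tokens, hidden_dim)
routing = torch.softmax(torch.randn(tokens, num_experts), dim=-1)
weights, selected = torch.topk(routing, top_k, dim=-1)
weights = weights / weights.sum(dim=-1, keepdim=True)  # the norm_topk_prob=True case
# expert_mask: [num_experts, top_k, tokens], built as in the patched forward
expert_mask = torch.nn.functional.one_hot(selected, num_classes=num_experts).permute(2, 1, 0)


def masked_dense(x):
    """Static-shape variant: every expert sees every token; unrouted tokens get weight 0."""
    out = torch.zeros_like(x)
    for e in range(num_experts):
        token_weights = (expert_mask[e].to(weights.dtype) * weights.T).sum(0)  # [tokens]
        out = out + experts[e](x) * token_weights.unsqueeze(-1)
    return out


def gathered_sparse(x):
    """Data-dependent variant: torch.where returns indices whose length depends on routing."""
    out = torch.zeros_like(x)
    for e in range(num_experts):
        slot, tok = torch.where(expert_mask[e])
        out.index_add_(0, tok, experts[e](x[tok]) * weights[tok, slot, None])
    return out
```

The trade-off is compute: the masked form does `num_experts` full expert passes over all tokens instead of only the routed ones, in exchange for a graph that OpenVINO can transform without dynamic-shape NonZero ops.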

+

+def qwen3_moe_talker_forward_patched(self, hidden_states: torch.Tensor) -> Tuple[torch.Tensor, torch.Tensor]:

+ batch_size, sequence_length, hidden_dim = hidden_states.shape

+ hidden_states = hidden_states.view(-1, hidden_dim)

+ # router_logits: (batch * sequence_length, n_experts)

+ router_logits = self.gate(hidden_states)

+

+ routing_weights = torch.nn.functional.softmax(router_logits, dim=1, dtype=torch.float)

+ routing_weights, selected_experts = torch.topk(routing_weights, self.top_k, dim=-1)

+ if self.norm_topk_prob: # only diff with mixtral sparse moe block!

+ routing_weights /= routing_weights.sum(dim=-1, keepdim=True)

+ # we cast back to the input dtype

+ routing_weights = routing_weights.to(hidden_states.dtype)

+

+ final_hidden_states = torch.zeros(

+ (batch_size * sequence_length, hidden_dim), dtype=hidden_states.dtype, device=hidden_states.device

+ )

+

+ # expert_mask: [num_experts, top_k, batch*seq_len]

+ expert_mask = torch.nn.functional.one_hot(selected_experts, num_classes=self.num_experts).permute(2, 1, 0)

+

+ # Use arithmetic masking instead of torch.where + index_add_ to avoid NonZero ops

+ # that produce data-dependent shapes and crash OpenVINO apply_moc_transformations.

+ for expert_idx in range(self.num_experts):

+ expert_layer = self.experts[expert_idx]

+ # token_weights: [batch*seq_len] — routing weight for this expert per token (0 if not routed here)

+ token_weights = (expert_mask[expert_idx].to(routing_weights.dtype) * routing_weights.T).sum(0)

+ current_hidden_states = expert_layer(hidden_states) * token_weights.unsqueeze(-1)

+ final_hidden_states = final_hidden_states + current_hidden_states.to(hidden_states.dtype)

+

+ shared_expert_output = self.shared_expert(hidden_states)

+ shared_expert_output = torch.nn.functional.sigmoid(self.shared_expert_gate(hidden_states)) * shared_expert_output

+

+ final_hidden_states = final_hidden_states + shared_expert_output

+

+ final_hidden_states = final_hidden_states.reshape(batch_size, sequence_length, hidden_dim)

+ return final_hidden_states, router_logits
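The talker variant differs from the thinker variant only by the shared expert, whose output is added to the routed result after scaling by a per-token sigmoid gate. A minimal sketch of that combination, assuming `shared_expert_gate` projects `hidden_dim -> 1` (one scalar gate per token, as in Qwen2-MoE-style blocks) and using toy `nn.Linear` layers in place of the real modules:

```python
import torch

torch.manual_seed(0)
hidden_dim, tokens = 8, 4
shared_expert = torch.nn.Linear(hidden_dim, hidden_dim)  # toy stand-in for the shared MLP
shared_expert_gate = torch.nn.Linear(hidden_dim, 1)      # assumed: one scalar gate per token


def add_gated_shared_expert(routed: torch.Tensor, x: torch.Tensor) -> torch.Tensor:
    gate = torch.sigmoid(shared_expert_gate(x))  # [tokens, 1], values in (0, 1)
    return routed + gate * shared_expert(x)      # gate broadcasts over hidden_dim
```

Driving the gate to 0 leaves only the routed experts' output; driving it to 1 adds the full shared-expert output.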

+

+

+def qwen3_moe_thinker_expert_forward_patched(

+ self, hidden_states: torch.Tensor, top_k_index: torch.Tensor, top_k_weights: torch.Tensor

+) -> torch.Tensor:

+

+ final_hidden_states = torch.zeros_like(hidden_states)

+ expert_mask = torch.nn.functional.one_hot(top_k_index, num_classes=self.num_experts).permute(2, 1, 0)

+

+ # TODO: we loop over all possible experts instead of only the ones that were hit, to avoid issues in graph execution.

+ # expert_hitted = torch.greater(expert_mask.sum(dim=(-1, -2)), 0).nonzero()

+ # Loop over all available experts in the model and perform the computation on each expert

+ for expert_idx in range(self.num_experts):

+ idx, top_x = torch.where(expert_mask[expert_idx].squeeze(0))

+

+ # Index the correct hidden states and compute the expert hidden state for

+ # the current expert. We need to make sure to multiply the output hidden

+ # states by `routing_weights` on the corresponding tokens (one weight per top-k slot)

+ current_state = hidden_states[None, top_x].reshape(-1, hidden_states.shape[-1])

+ current_hidden_states = self[expert_idx](current_state) * top_k_weights[top_x, idx, None]

+ final_hidden_states.index_add_(0, top_x, current_hidden_states.to(hidden_states.dtype))

+ return final_hidden_states

+

+def qwen3_moe_talker_text_forward_patched(

+ self, hidden_states: torch.Tensor, top_k_index: torch.Tensor, top_k_weights: torch.Tensor

+) -> torch.Tensor:

+ final_hidden_states = torch.zeros_like(hidden_states)

+ expert_mask = torch.nn.functional.one_hot(top_k_index, num_classes=self.num_experts).permute(2, 1, 0)

+

+ # TODO: we loop over all possible experts instead of only the ones that were hit, to avoid issues in graph execution.

+ # expert_hitted = torch.greater(expert_mask.sum(dim=(-1, -2)), 0).nonzero()

+ # Loop over all available experts in the model and perform the computation on each expert

+ for expert_idx in range(self.num_experts):

+ idx, top_x = torch.where(expert_mask[expert_idx].squeeze(0))

+ current_state = hidden_states[None, top_x].reshape(-1, hidden_states.shape[-1])

+ current_hidden_states = self[expert_idx](current_state) * top_k_weights[top_x, idx, None]

+ final_hidden_states.index_add_(0, top_x, current_hidden_states.to(hidden_states.dtype))

+ return final_hidden_states
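The `*_patched` functions take `self` as their first argument, so they are meant to replace a module's `forward`. This excerpt does not show how the notebook installs them; one common Python approach, shown here only as an assumption, is to bind the function to a single instance with `types.MethodType`. `ToyExperts` is a hypothetical stand-in for an experts container that is indexable and exposes `num_experts`, which is what the patched functions rely on (`self[expert_idx]`, `self.num_experts`).

```python
import types

import torch


class ToyExperts(torch.nn.ModuleList):
    """Hypothetical experts container: indexable and exposing num_experts."""

    def __init__(self, num_experts: int, hidden_dim: int):
        super().__init__([torch.nn.Linear(hidden_dim, hidden_dim) for _ in range(num_experts)])
        self.num_experts = num_experts

    def forward(self, hidden_states, top_k_index, top_k_weights):
        raise NotImplementedError("original forward; replaced per instance below")


def dense_experts_forward(self, hidden_states, top_k_index, top_k_weights):
    # Same signature as the patched functions above, using arithmetic masking (no torch.where).
    mask = torch.nn.functional.one_hot(top_k_index, num_classes=self.num_experts).permute(2, 1, 0)
    out = torch.zeros_like(hidden_states)
    for e in range(self.num_experts):
        token_w = (mask[e].to(top_k_weights.dtype) * top_k_weights.T).sum(0)  # [tokens]
        out = out + self[e](hidden_states) * token_w.unsqueeze(-1)
    return out


experts = ToyExperts(num_experts=4, hidden_dim=8)
# Bind the replacement to this instance only; nn.Module.__call__ then dispatches to it.
experts.forward = types.MethodType(dense_experts_forward, experts)
```

Binding per instance leaves other modules of the same class untouched, which keeps the patch local to the model being converted.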

+

+def patch_qwen2vl_vision_blocks(model, force_new_behaviour=False):

+ # Modified from https://github.com/huggingface/transformers/blob/v4.49.0/src/transformers/models/qwen2_vl/modeling_qwen2_vl.py#L391

+ # Takes attention_mask as an explicit input instead of computing it internally (the internal computation loops over a dynamic length, which tracing does not support)

+ def sdpa_attn_forward(

+ self,

+ hidden_states: torch.Tensor,

+ attention_mask: torch.Tensor,

+ rotary_pos_emb: Optional[torch.Tensor] = None,

+ position_embeddings: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,

+ ):

+ def rotate_half(x):

+ """Rotates half the hidden dims of the input."""

+ x1 = x[..., : x.shape[-1] // 2]

+ x2 = x[..., x.shape[-1] // 2 :]

+ return torch.cat((-x2, x1), dim=-1)

+

+ def apply_rotary_pos_emb_vision(

+ q: torch.Tensor, k: torch.Tensor, cos: torch.Tensor, sin: torch.Tensor

+ ) -> Tuple[torch.Tensor, torch.Tensor]:

+ orig_q_dtype = q.dtype

+ orig_k_dtype = k.dtype

+ q, k = q.float(), k.float()

+ cos, sin = cos.unsqueeze(-2), sin.unsqueeze(-2)

+ q_embed = (q * cos) + (rotate_half(q) * sin)

+ k_embed = (k * cos) + (rotate_half(k) * sin)

+ q_embed = q_embed.to(orig_q_dtype)

+ k_embed = k_embed.to(orig_k_dtype)

+ return q_embed, k_embed

+

+ seq_length = hidden_states.shape[0]

+ q, k, v = self.qkv(hidden_states).reshape(seq_length, 3, self.num_heads, -1).permute(1, 0, 2, 3).unbind(0)

+ if position_embeddings is None:

+ emb = torch.cat((rotary_pos_emb, rotary_pos_emb), dim=-1)

+ cos = emb.cos().float()

+ sin = emb.sin().float()

+ else:

+ cos, sin = position_embeddings

+ q, k = apply_rotary_pos_emb_vision(q, k, cos, sin)

+ q = q.transpose(0, 1)

+ k = k.transpose(0, 1)

+ v = v.transpose(0, 1)