7 | 7 | "display_vendor": "ggml-org", |

8 | 8 | "license": "open-source" |

9 | 9 | }, |

10 | | - "latest": "3.0.0", |

| 10 | + "latest": "3.0.1", |

11 | 11 | "versions": { |

| 12 | + "3.0.1": { |

| 13 | + "sources": [ |

| 14 | + { |

| 15 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-Windows-x64.7z", |

| 16 | + "sha256": "e2f00df103af908b0453292a05a200c6a56fab7f0d3e90ec07a7ae3b5962764c", |

| 17 | + "platform": { |

| 18 | + "name": "Windows", |

| 19 | + "architecture": "x86_64" |

| 20 | + } |

| 21 | + }, |

| 22 | + { |

| 23 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-Windows-arm64.7z", |

| 24 | + "sha256": "ac3ca5977878819c3584109b0ff29d45053591b3e63cc9b147849c9e155216c3", |

| 25 | + "platform": { |

| 26 | + "name": "Windows", |

| 27 | + "architecture": "arm64" |

| 28 | + } |

| 29 | + }, |

| 30 | + { |

| 31 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-Linux-x64.7z", |

| 32 | + "sha256": "b8cb0a3b6f28a99c99a10b54308ec12516ffd31ffd58aa51f8b57d6663a0979c", |

| 33 | + "platform": { |

| 34 | + "name": "Linux", |

| 35 | + "architecture": "x86_64" |

| 36 | + } |

| 37 | + }, |

| 38 | + { |

| 39 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-Linux-arm64.7z", |

| 40 | + "sha256": "9562a39e3724ed8408fc323c5ec971611d5194ff013d1379a7708dcb1c393aa6", |

| 41 | + "platform": { |

| 42 | + "name": "Linux", |

| 43 | + "architecture": "arm64" |

| 44 | + } |

| 45 | + }, |

| 46 | + { |

| 47 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-macOS-universal.7z", |

| 48 | + "sha256": "f6d7133af9d55800d9a1d2c54829db63d7454b83b14552e99072413a02c1e8c0", |

| 49 | + "platform": { |

| 50 | + "name": "macOS", |

| 51 | + "architecture": "x86_64" |

| 52 | + } |

| 53 | + }, |

| 54 | + { |

| 55 | + "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-macOS-universal.7z", |

| 56 | + "sha256": "f6d7133af9d55800d9a1d2c54829db63d7454b83b14552e99072413a02c1e8c0", |

| 57 | + "platform": { |

| 58 | + "name": "macOS", |

| 59 | + "architecture": "arm64" |

| 60 | + } |

| 61 | + } |

| 62 | + ], |

| 63 | + "metadata": { |

| 64 | + "Id": "llamacpp", |

| 65 | + "Name": "llama.qtcreator", |

| 66 | + "Version": "3.0.1", |

| 67 | + "CompatVersion": "3.0.1", |

| 68 | + "Vendor": "ggml-org", |

| 69 | + "VendorId": "ggml", |

| 70 | + "Copyright": "(C) 2025 The llama.qtcreator Contributors, Copyright (C) The Qt Company Ltd. and other contributors.", |

| 71 | + "License": "MIT", |

| 72 | + "Description": "llama.cpp infill completion plugin for Qt Creator", |

| 73 | + "LongDescription": [ |

| 74 | + "# llama.qtcreator", |

| 75 | + "", |

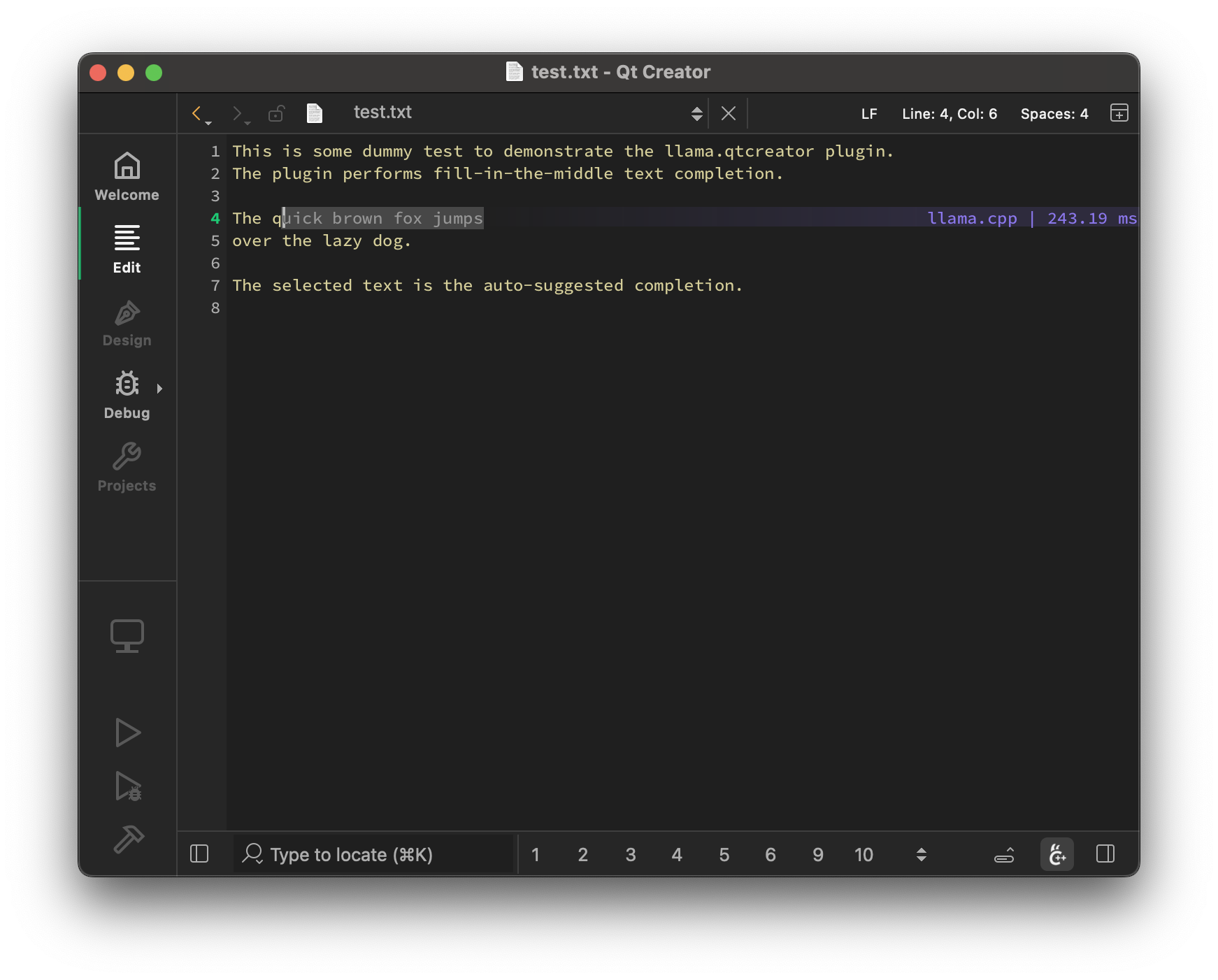

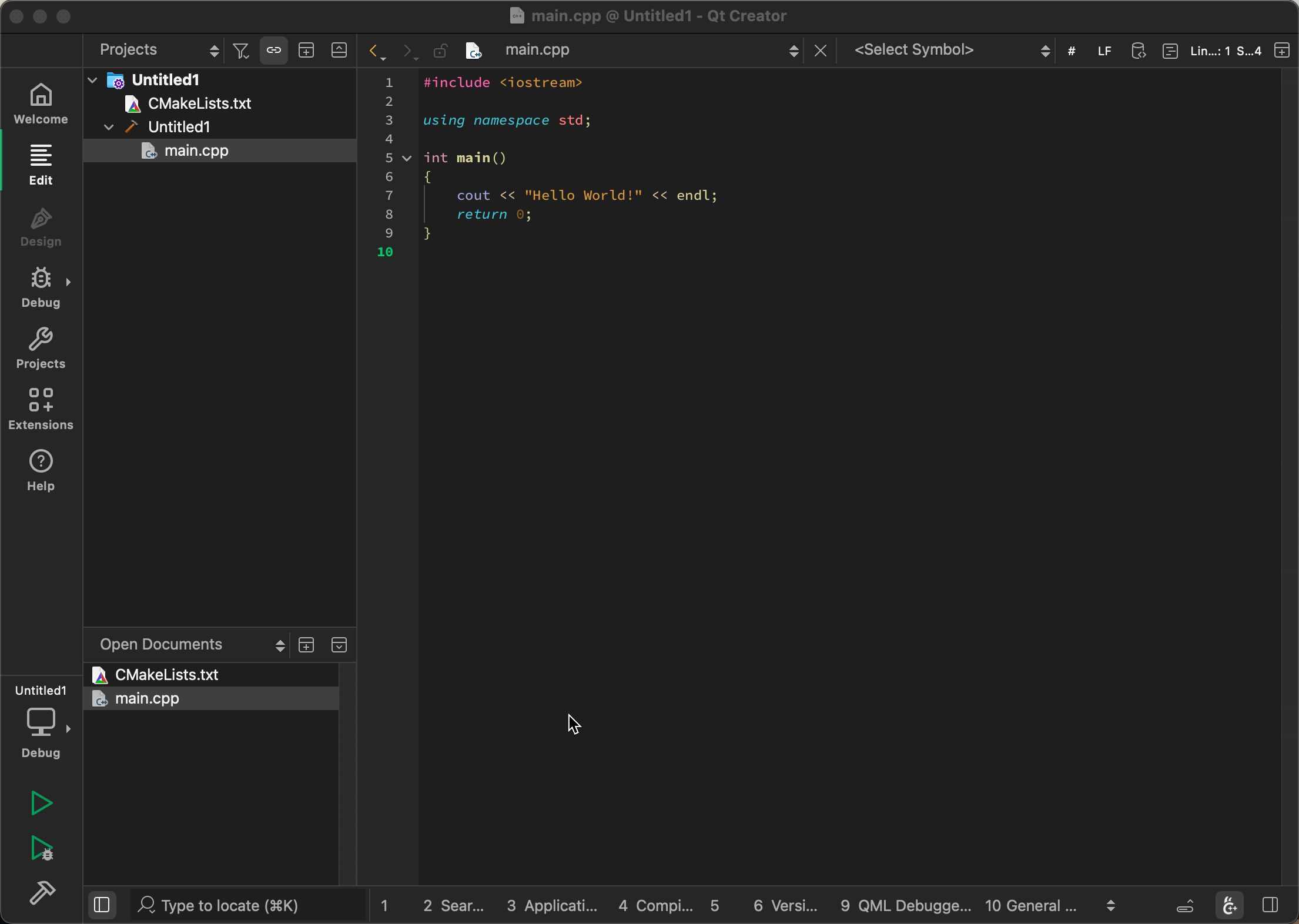

| 76 | + "Local LLM-assisted text completion for Qt Creator.", |

| 77 | + "", |

| 78 | + "", |

| 79 | + "", |

| 80 | + "---", |

| 81 | + "", |

| 82 | + "", |

| 83 | + "", |

| 84 | + "", |

| 85 | + "## Features", |

| 86 | + "", |

| 87 | + "- Auto-suggest on cursor movement. Toggle enable / disable with `Ctrl+Shift+G`", |

| 88 | + "- Trigger the suggestion manually by pressing `Ctrl+G`", |

| 89 | + "- Accept a suggestion with `Tab`", |

| 90 | + "- Accept the first line of a suggestion with `Shift+Tab`", |

| 91 | + "- Control max text generation time", |

| 92 | + "- Configure scope of context around the cursor", |

| 93 | + "- Ring context with chunks from open and edited files and yanked text", |

| 94 | + "- [Supports very large contexts even on low-end hardware via smart context reuse](https://github.com/ggml-org/llama.cpp/pull/9787)", |

| 95 | + "- Speculative FIM support", |

| 96 | + "- Speculative Decoding support", |

| 97 | + "- Display performance stats", |

| 98 | + "- Chat support", |

| 99 | + "- Source and Image drag & drop support", |

| 100 | + "- Current editor selection predefined and custom LLM prompts", |

| 101 | + "- [Tools usage](https://github.com/cristianadam/llama.qtcreator/wiki/Tools)", |

| 102 | + "", |

| 103 | + "", |

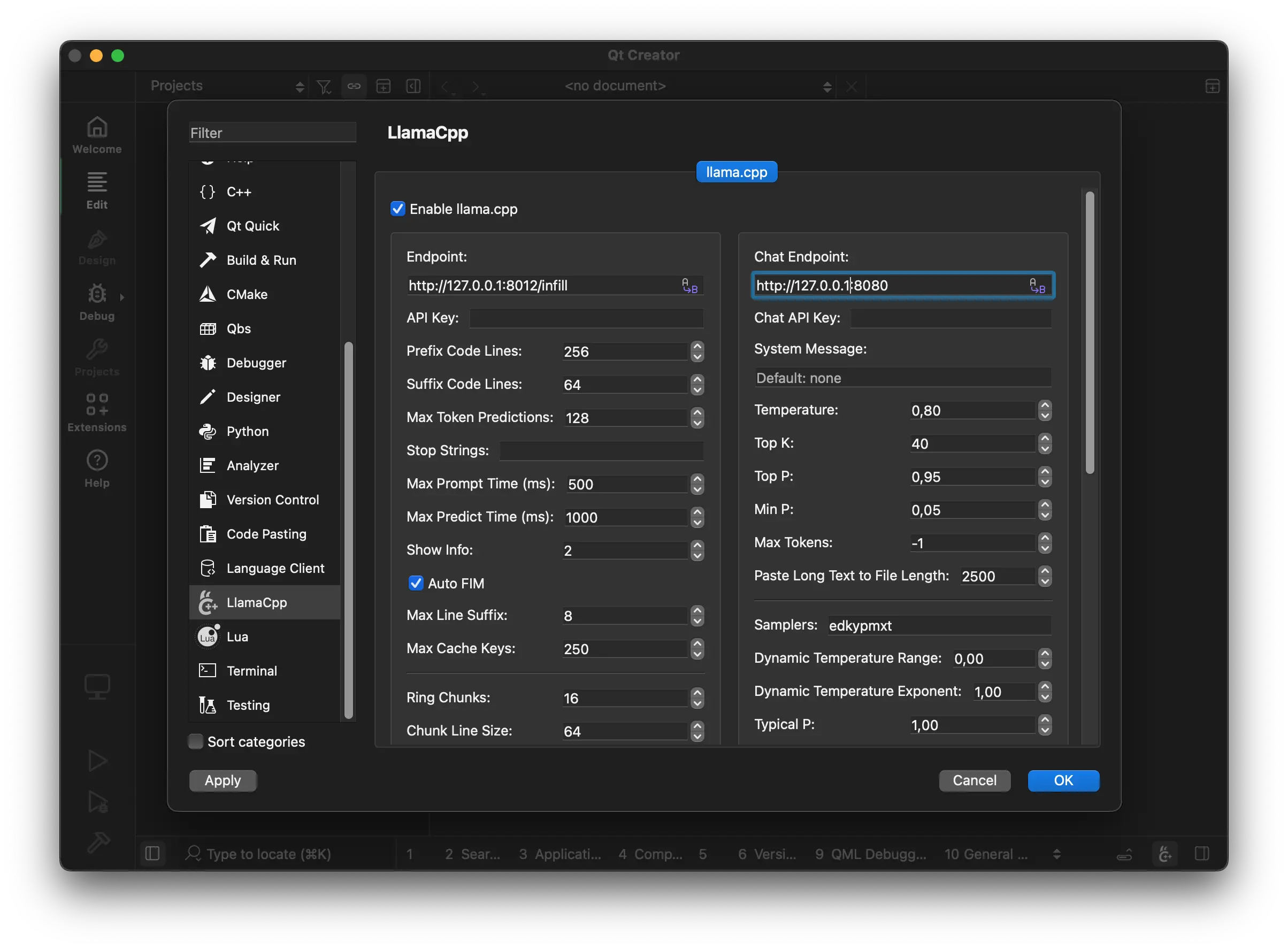

| 104 | + "### llama.cpp setup", |

| 105 | + "", |

| 106 | + "The plugin requires a [llama.cpp](https://github.com/ggml-org/llama.cpp) server instance to be running at:", |

| 107 | + "", |

| 108 | + "", |

| 109 | + "", |

| 110 | + "", |

| 111 | + "#### Mac OS", |

| 112 | + "", |

| 113 | + "```bash", |

| 114 | + "brew install llama.cpp", |

| 115 | + "```", |

| 116 | + "", |

| 117 | + "#### Windows", |

| 118 | + "", |

| 119 | + "```bash", |

| 120 | + "winget install llama.cpp", |

| 121 | + "```", |

| 122 | + "", |

| 123 | + "#### Any other OS", |

| 124 | + "", |

| 125 | + "Either build from source or use the latest binaries: https://github.com/ggml-org/llama.cpp/releases", |

| 126 | + "", |

| 127 | + "### llama.cpp settings", |

| 128 | + "", |

| 129 | + "Here are recommended settings, depending on the amount of VRAM that you have:", |

| 130 | + "", |

| 131 | + "- More than 16GB VRAM:", |

| 132 | + "", |

| 133 | + " ```bash", |

| 134 | + " llama-server --fim-qwen-7b-default", |

| 135 | + " ```", |

| 136 | + "", |

| 137 | + "- Less than 16GB VRAM:", |

| 138 | + "", |

| 139 | + " ```bash", |

| 140 | + " llama-server --fim-qwen-3b-default", |

| 141 | + " ```", |

| 142 | + "", |

| 143 | + "- Less than 8GB VRAM:", |

| 144 | + "", |

| 145 | + " ```bash", |

| 146 | + " llama-server --fim-qwen-1.5b-default", |

| 147 | + " ```", |

| 148 | + "", |

| 149 | + "Use `llama-server --help` for more details.", |

| 150 | + "", |

| 151 | + "", |

| 152 | + "### Recommended LLMs", |

| 153 | + "", |

| 154 | + "The plugin requires FIM-compatible models: [HF collection](https://huggingface.co/collections/ggml-org/llamavim-6720fece33898ac10544ecf9)", |

| 155 | + "", |

| 156 | + "## Examples", |

| 157 | + "", |

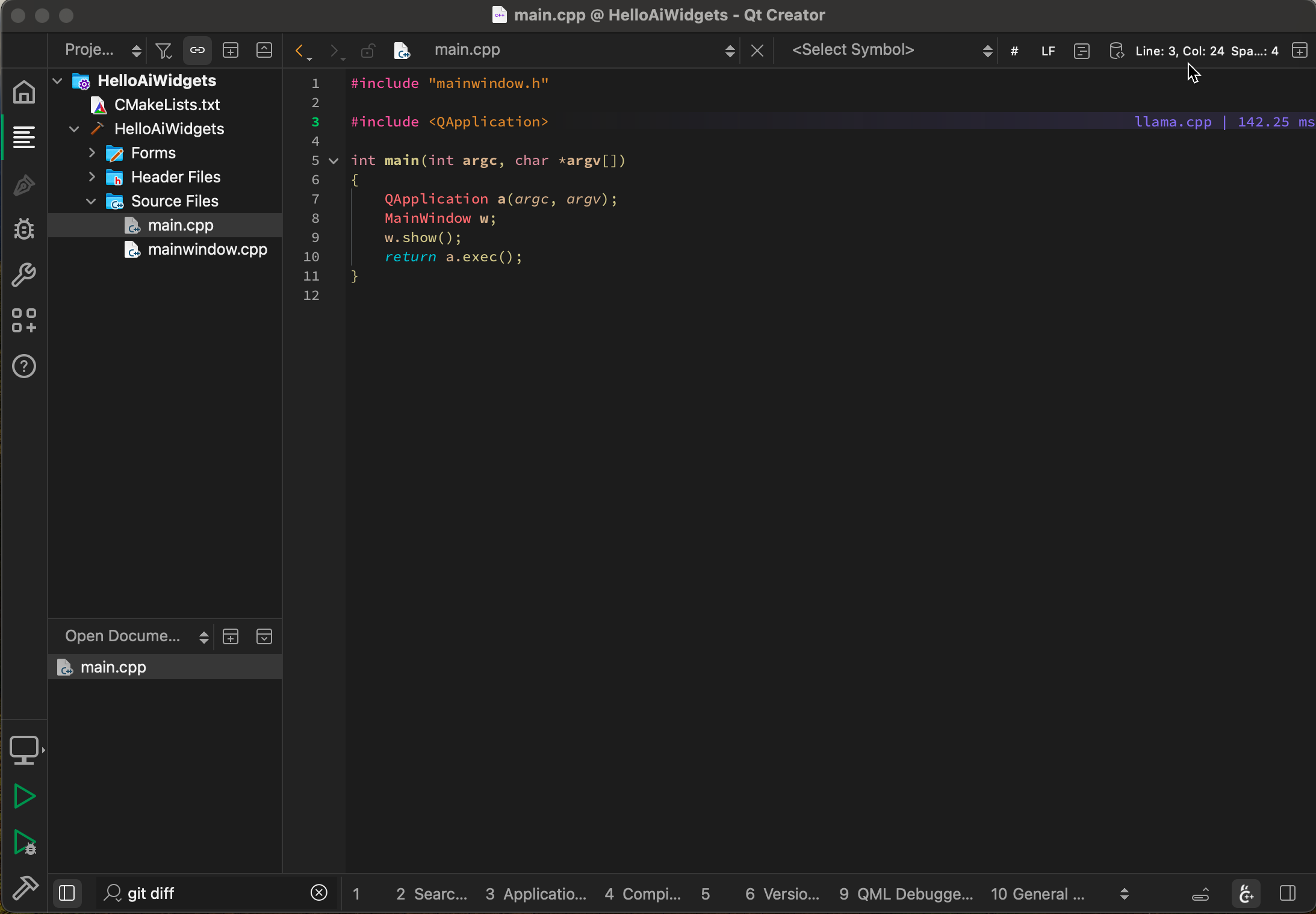

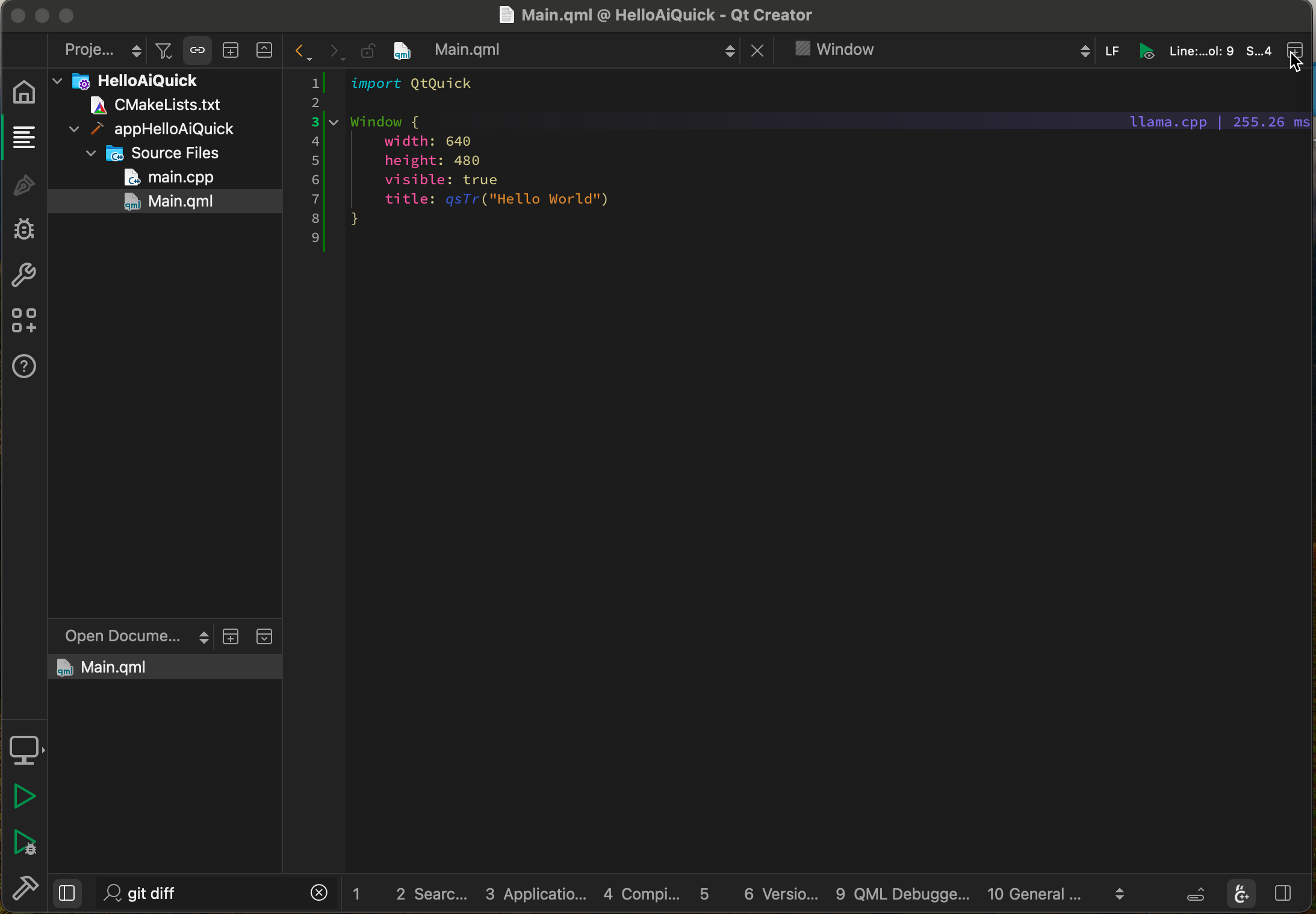

| 158 | + "### A Qt Quick example on MacBook Pro M3 `Qwen2.5-Coder 3B Q8_0`:", |

| 159 | + "", |

| 160 | + "", |

| 161 | + "", |

| 162 | + "### Chat on a Mac Studio M2 with `gpt-oss 20B`:", |

| 163 | + "", |

| 164 | + "", |

| 165 | + "", |

| 166 | + "### Using Tools on a MacBook M3 with `gpt-oss 20B`:", |

| 167 | + "", |

| 168 | + "https://github.com/user-attachments/assets/b38f2d6b-aea8-4879-be17-73f0290e4f71", |

| 169 | + "", |

| 170 | + "## Implementation details", |

| 171 | + "", |

| 172 | + "The plugin aims to be very simple and lightweight and at the same time to provide high-quality and performant local FIM completions, even on consumer-grade hardware. ", |

| 173 | + "", |

| 174 | + "## Other IDEs", |

| 175 | + "", |

| 176 | + "- Vim/Neovim: https://github.com/ggml-org/llama.vim", |

| 177 | + "- VS Code: https://github.com/ggml-org/llama.vscode" |

| 178 | + ], |

| 179 | + "Url": "https://github.com/ggml-org/llama.qtcreator", |

| 180 | + "DocumentationUrl": "", |

| 181 | + "Dependencies": [ |

| 182 | + { |

| 183 | + "Id": "core", |

| 184 | + "Version": "18.0.0" |

| 185 | + }, |

| 186 | + { |

| 187 | + "Id": "projectexplorer", |

| 188 | + "Version": "18.0.0" |

| 189 | + }, |

| 190 | + { |

| 191 | + "Id": "texteditor", |

| 192 | + "Version": "18.0.0" |

| 193 | + } |

| 194 | + ] |

| 195 | + } |

| 196 | + }, |

12 | 197 | "3.0.0": { |

13 | 198 | "sources": [ |

14 | 199 | { |

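A minimal sketch, not part of this change, of how a consumer of this registry entry might pick the `sources` item for its platform and check a downloaded archive against the `sha256` field. The helper names `select_source` and `sha256_matches` are hypothetical; only the JSON field names (`sources`, `url`, `sha256`, `platform`, `name`, `architecture`) are taken from the diff above, and the actual Qt Creator extension manager may work differently.

```python
import hashlib
from typing import Optional


def select_source(version_entry: dict, name: str, architecture: str) -> Optional[dict]:
    """Return the first source whose platform name and architecture match, else None.

    Hypothetical helper: assumes the entry has the shape shown in the diff.
    """
    for source in version_entry.get("sources", []):
        platform = source.get("platform", {})
        if platform.get("name") == name and platform.get("architecture") == architecture:
            return source
    return None


def sha256_matches(data: bytes, expected_hex: str) -> bool:
    """Compare the SHA-256 digest of the downloaded bytes with the registry value."""
    return hashlib.sha256(data).hexdigest() == expected_hex.strip().lower()


if __name__ == "__main__":
    # One entry copied from the 3.0.1 block above; the others follow the same shape.
    entry = {
        "sources": [
            {
                "url": "https://github.com/cristianadam/llama.qtcreator/releases/download/v3.0.1/LlamaCpp-3.0.1-Linux-arm64.7z",
                "sha256": "9562a39e3724ed8408fc323c5ec971611d5194ff013d1379a7708dcb1c393aa6",
                "platform": {"name": "Linux", "architecture": "arm64"},
            }
        ]
    }
    source = select_source(entry, "Linux", "arm64")
    if source is not None:
        print(source["url"])
```

Matching on both `name` and `architecture` matters here because the macOS universal archive is listed twice, once per architecture, with the same URL and hash.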