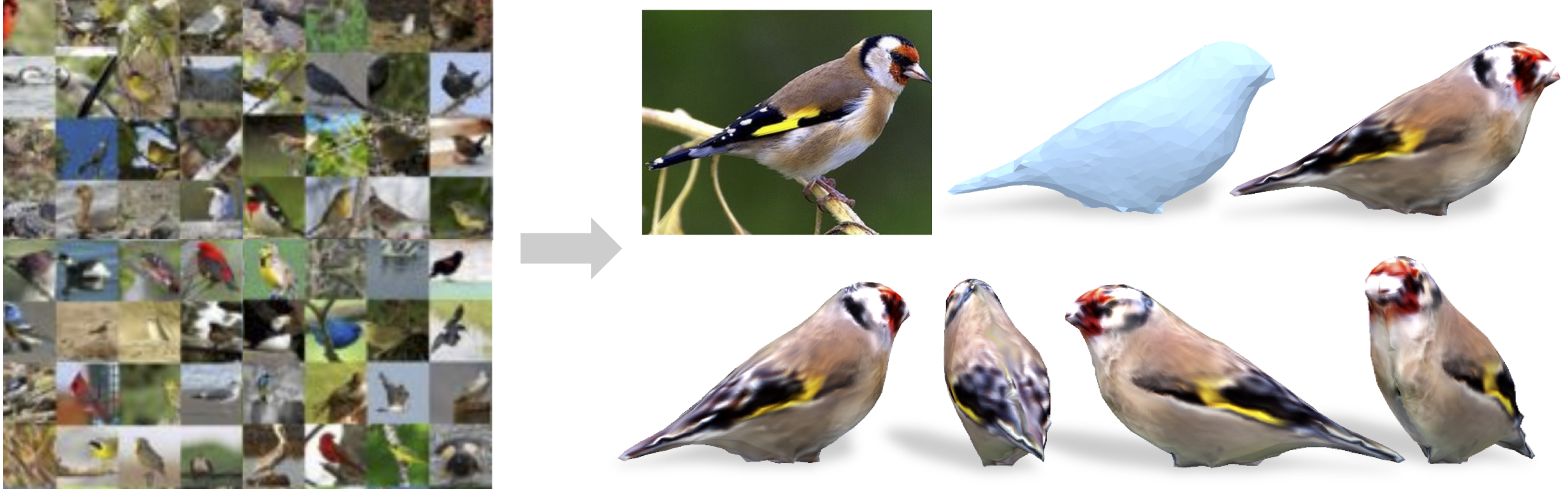

Shubham Goel, Angjoo Kanazawa, Jitendra Malik

University of California, Berkeley

In ECCV, 2020

- Python 3.7

- PyTorch 1.1.0

- PyMesh

- SoftRas

- NMR

Please use this Dockerfile to build your environment. For convenience, we provide a pre-built Docker image at shubhamgoel/birds. If you are interested in a non-Docker build, please follow docs/installation.md.
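For a non-Docker build, a quick check that the listed dependencies are importable can save a failed run later. The following is a minimal sketch, not part of the repository. The import names are assumptions (PyTorch as `torch`, PyMesh as `pymesh`, SoftRas as `soft_renderer`, NMR as `neural_renderer`), and the helper names `find_missing_modules` and `report` are hypothetical.

```python
import importlib.util
import sys

# Assumed import names for the requirements listed above; adjust if your
# installation exposes these packages under different module names.
REQUIRED_MODULES = ["torch", "pymesh", "soft_renderer", "neural_renderer"]


def find_missing_modules(names=REQUIRED_MODULES):
    """Return the top-level module names that cannot be located."""
    return [n for n in names if importlib.util.find_spec(n) is None]


def report():
    """Print the interpreter version and any modules that were not found."""
    version = ".".join(str(v) for v in sys.version_info[:3])
    print("Python", version, "(this README lists Python 3.7)")
    missing = find_missing_modules()
    if missing:
        print("Not found:", ", ".join(missing))
    else:
        print("All assumed modules were found.")
    return missing
```

Calling `report()` inside the environment you plan to use prints which of the assumed modules are absent. It only checks that a module can be located, not that its version matches the one listed above.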

For training, please see docs/training.md.

- From the ucmr directory, download the pretrained models (cub_train_cam4_withcam.tar.gz) from this gdrive folder and extract it:

tar -vzxf cub_train_cam4_withcam.tar.gz

You should see cachedir/snapshots/cam/e400_cub_train_cam4

- Run the demo:

python -m src.demo \
    --pred_pose \
    --pretrained_network_path=cachedir/snapshots/cam/e400_cub_train_cam4/pred_net_600.pth \
    --shape_path=cachedir/template_shape/bird_template.npy \
    --img_path demo_data/birdie1.png
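Before running the demo on several images, it can help to confirm that the extracted snapshot and the template shape are where the command expects them. The following is a minimal sketch under stated assumptions, not part of the repository. The helper names `missing_files`, `build_demo_cmd`, and `run_demo` are hypothetical. Only the module name, flags, and paths are taken from the demo command above.

```python
import subprocess
import sys
from pathlib import Path

# Paths copied from the demo command in this README.
NETWORK_PATH = "cachedir/snapshots/cam/e400_cub_train_cam4/pred_net_600.pth"
SHAPE_PATH = "cachedir/template_shape/bird_template.npy"


def missing_files(paths, root="."):
    """Return the entries of `paths` that are not regular files under `root`."""
    return [p for p in paths if not (Path(root) / p).is_file()]


def build_demo_cmd(img_path, python=sys.executable):
    """Build the argument list for one demo run (hypothetical helper)."""
    return [
        python, "-m", "src.demo",
        "--pred_pose",
        f"--pretrained_network_path={NETWORK_PATH}",
        f"--shape_path={SHAPE_PATH}",
        "--img_path", img_path,
    ]


def run_demo(image_paths):
    """Check inputs, then run the demo once per image; return an exit code."""
    missing = missing_files([NETWORK_PATH, SHAPE_PATH, *image_paths])
    if missing:
        print("Missing files:", ", ".join(missing))
        return 1
    for img in image_paths:
        # Must be run from the ucmr directory so that `src.demo` resolves.
        subprocess.run(build_demo_cmd(img), check=True)
    return 0
```

For example, `run_demo(["demo_data/birdie1.png"])` called from the ucmr directory would launch the same command as above. It reports any missing file first instead of failing inside the demo.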

To evaluate camera pose errors on the entire test dataset, first download the CUB dataset and annotation files as instructed in docs/training.md. Then run:

python -m src.experiments.benchmark \
    --pred_pose \
    --pretrained_network_path=cachedir/snapshots/cam/e400_cub_train_cam4/pred_net_600.pth \
    --shape_path=cachedir/template_shape/bird_template.npy \
    --nodataloader_computeMaskDt \
    --split=test

If you use this code for your research, please consider citing:

@inProceedings{ucmrGoel20,
  title={Shape and Viewpoints without Keypoints},
  author = {Shubham Goel and
  Angjoo Kanazawa and
  Jitendra Malik},
  booktitle={ECCV},
  year={2020}
}