Desktop: route STT through backend /v4/listen, remove DEEPGRAM_API_KEY #5395


Greptile Summary

This PR routes desktop speech-to-text through the backend

Key issues found:

Confidence Score: 2/5

Sequence Diagram

sequenceDiagram

participant UI as AppState

participant BTS as BackendTranscriptionService

participant WS as /v4/listen WebSocket

participant AM as AudioMixer

UI->>BTS: start(onTranscript, onConnected)

BTS->>WS: WebSocket upgrade request

Note over BTS: 0.5s timer, isConnected = true

BTS-->>UI: onConnected()

UI->>AM: start(outputMode: .mono)

loop Audio streaming

AM->>BTS: sendAudio(monoData)

BTS->>WS: binary PCM16 mono frame

WS-->>BTS: JSON segment array

BTS-->>UI: onTranscript(segment)

end

UI->>BTS: stop()

BTS->>WS: close connection

Note over UI: backendOwnsConversation=true

    private func startKeepalive() {
        keepaliveTask?.cancel()
        keepaliveTask = Task { [weak self] in
            while !Task.isCancelled {
                try? await Task.sleep(nanoseconds: UInt64(self?.keepaliveInterval ?? 8.0) * 1_000_000_000)
                guard !Task.isCancelled, let self = self, self.isConnected else { break }
                self.sendKeepalive()
            }
        }
    }

    private func sendKeepalive() {


Initial connection failures are silently swallowed — reconnect never fires

isConnected is set optimistically after a 0.5s timer checks webSocketTask?.state == .running. If the server rejects the upgrade (e.g., HTTP 401 Unauthorized, 403 Forbidden, or TLS error), URLSessionWebSocketTask delivers the failure through the receive completion handler — but that handler guards with:

    guard self.isConnected else { return }

If the error arrives within 0.5s (which is typical for a rejected HTTP upgrade), isConnected is still false, so the guard exits early, the error is discarded, and handleDisconnection() is never called. Because handleDisconnection() is the only place that schedules a reconnect, the service is permanently stuck: the error is silently logged but reconnect is never scheduled. The user sees no transcript and no error.

Recommended fix: Remove the isConnected guard from the receive failure path, or use a dedicated state variable (e.g., .connecting) that represents "a connection attempt is in progress and failures should trigger reconnect":

    case .failure(let error):
        // Guard on isConnected suppresses errors that arrive before the 0.5s timer fires.
        // Use shouldReconnect instead so rejected upgrades still trigger a retry.
        guard self.isConnected || self.shouldReconnect else { return }
        logError("BackendTranscriptionService: Receive error", error: error)
        if self.isConnected {
            self.handleDisconnection()
        } else {
            // Initial connection failed: schedule reconnect directly
            self.scheduleReconnect()
        }

The deepest fix is to eliminate the 0.5s timer entirely and instead set isConnected = true the first time a message is received from the backend.
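The timer-free approach can be sketched as a small state machine. This is only an illustration under stated assumptions: ConnectionState, ReceiveAction, and ConnectionTracker are hypothetical names that do not appear in the PR, and the real service would still have to wire these transitions into its URLSessionWebSocketTask receive loop.

```swift
import Foundation

/// Hypothetical connection states; the PR itself uses a plain `isConnected` Bool.
enum ConnectionState: Equatable {
    case idle
    case connecting
    case connected
}

/// What the receive handler should do next (illustrative only).
enum ReceiveAction: Equatable {
    case ignore
    case deliver
    case scheduleReconnect
}

struct ConnectionTracker {
    private(set) var state: ConnectionState = .idle

    mutating func beginConnecting() { state = .connecting }
    mutating func stop() { state = .idle }

    /// The first message from the backend proves the connection, so no timer is needed.
    mutating func didReceiveMessage() -> ReceiveAction {
        guard state != .idle else { return .ignore }
        state = .connected
        return .deliver
    }

    /// Failures while connecting or connected both lead to a retry;
    /// only failures that arrive after an explicit stop are ignored.
    mutating func didFail() -> ReceiveAction {
        guard state != .idle else { return .ignore }
        state = .connecting
        return .scheduleReconnect
    }
}
```

One caveat with this design: if the backend stays silent until it receives audio, sendAudio would also have to accept the connecting state, otherwise the first message never arrives.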

    private var isConnected = false
    private var shouldReconnect = false


Data race on isConnected

isConnected is a plain unsynchronized Bool that is read and written from multiple concurrent contexts:

- Written on the main queue (line 269, via DispatchQueue.main.asyncAfter)
- Written in handleDisconnection() (line 350), called from URLSession's internal queue and from Task bodies
- Written in disconnect() (line 334), called from wherever stop() is invoked
- Read in sendAudio() (line 145), called from the real-time audio capture callback thread
- Read inside keepalive and watchdog Task bodies

Swift's memory model does not guarantee atomic access to plain value types across threads. The recommended fix is to mark the class @MainActor (which is idiomatic for ObservableObject-style services in this codebase), or protect the flag with a dedicated lock alongside audioBufferLock.
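The lock-based option might look roughly like the sketch below. LockedFlag is a hypothetical helper, not a type from the PR; it assumes the flag is the only shared state that needs protection and that a short NSLock critical section is acceptable on the audio callback thread.

```swift
import Foundation

/// A Bool guarded by NSLock so it can be read and written from any thread.
/// Hypothetical helper for illustration; not part of the PR.
final class LockedFlag: @unchecked Sendable {
    private let lock = NSLock()
    private var value: Bool

    init(_ initial: Bool = false) {
        value = initial
    }

    /// Read the current value under the lock.
    func get() -> Bool {
        lock.lock()
        defer { lock.unlock() }
        return value
    }

    /// Write a new value under the lock.
    func set(_ newValue: Bool) {
        lock.lock()
        defer { lock.unlock() }
        value = newValue
    }
}
```

With this in place, `private var isConnected = false` would become `private let isConnected = LockedFlag()`, with reads going through `get()` and writes through `set(_:)`. A lock may suit sendAudio() better than @MainActor because the audio callback would not need to hop to the main actor for every buffer.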

    return
    }

    let isBatchMode = ShortcutSettings.shared.pttTranscriptionMode == .batch
    // Always use live streaming through the backend (no client-side batch mode)
    startMicCapture()

    if isBatchMode {
        // Batch mode: just capture audio into buffer, no streaming connection
        batchAudioLock.lock()
        batchAudioBuffer = Data()
        batchAudioLock.unlock()
        startMicCapture(batchMode: true)
        log("PushToTalkManager: started audio capture (batch mode)")
    } else {
    // Live mode: start mic capture and stream to Deepgram
    startMicCapture()
    let language = AssistantSettings.shared.effectiveTranscriptionLanguage
    let service = BackendTranscriptionService(language: language)
    transcriptionService = service

    do {
    let language = AssistantSettings.shared.effectiveTranscriptionLanguage
    let service = try TranscriptionService(language: language, channels: 1)
    transcriptionService = service

    service.start(
        onTranscript: { [weak self] segment in
            Task { @MainActor in
                self?.handleTranscript(segment)
            }
        },
        onError: { [weak self] error in
            Task { @MainActor in
                logError("PushToTalkManager: transcription error", error: error)
                self?.stopListening()
            }
        },
        onConnected: {
            Task { @MainActor in
                log("PushToTalkManager: DeepGram connected")
            }
        }
    )
    } catch {
    logError("PushToTalkManager: failed to create TranscriptionService", error: error)
    stopListening()
    service.start(
        onTranscript: { [weak self] segment in
            Task { @MainActor in
                self?.handleTranscript(segment)
            }
        },
        onError: { [weak self] error in
            Task { @MainActor in
                logError("PushToTalkManager: transcription error", error: error)
                self?.stopListening()
            }
        },
        onConnected: {
            Task { @MainActor in
                log("PushToTalkManager: backend connected")
            }
        }
    }
    )
    }

    private func startMicCapture(batchMode: Bool = false) {
    private func startMicCapture() {


First ~500ms+ of PTT audio silently dropped

startMicCapture() is called before service.start(), and audio callbacks immediately call self.transcriptionService?.sendAudio(audioData). However, sendAudio has guard isConnected else { return }, and isConnected won't become true until the 0.5s timer fires in connectWithToken — after the auth token fetch, TCP handshake, WebSocket upgrade, and the 500ms artificial delay all complete. On any connection with non-trivial latency this threshold can easily exceed 500ms.

The result is that all audio captured from the moment the PTT button is pressed until the WebSocket is established is silently discarded. For short PTT presses (e.g., a single short sentence) this could mean losing the first word or two.

Recommended fix: Delay startMicCapture() until onConnected fires:

    service.start(
        onTranscript: { [weak self] segment in
            Task { @MainActor in
                self?.handleTranscript(segment)
            }
        },
        onError: { [weak self] error in
            Task { @MainActor in
                logError("PushToTalkManager: transcription error", error: error)
                self?.stopListening()
            }
        },
        onConnected: { [weak self] in
            Task { @MainActor in
                log("PushToTalkManager: backend connected — starting mic capture")
                self?.startMicCapture()
            }
        }
    )

Remove the startMicCapture() call before service.start().

060ef49 to 39fea6b

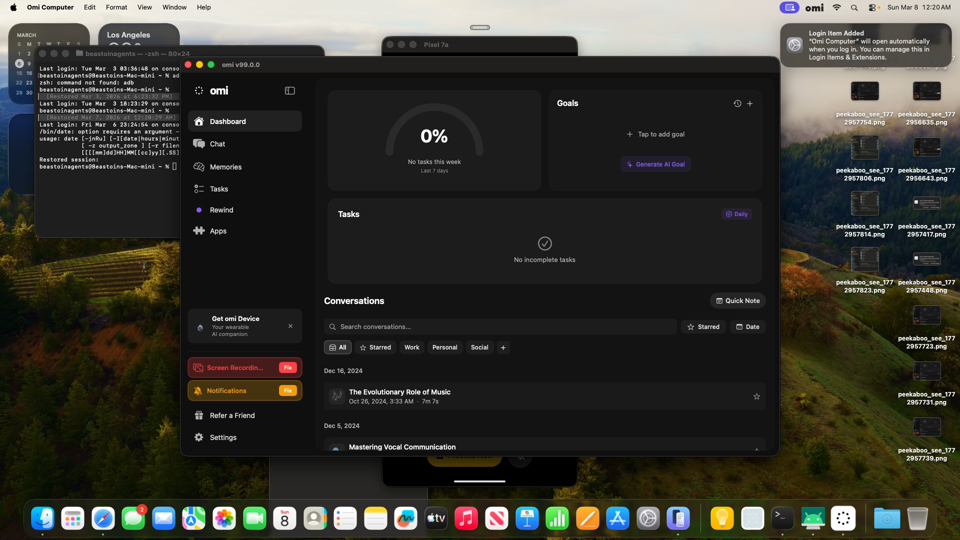

Mac Mini E2E Test: PR #5395 (Deepgram STT through /v4/listen)

Built from

1. App authenticated, dashboard loaded

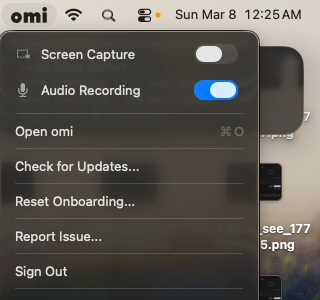

2. Audio Recording toggle ON (BackendTranscriptionService active)

3. Backend WebSocket log: live audio stream

Verified

Not verified (quiet room)

by AI for @beastoin

39fea6b to 71a20c0

Independent Verification: PR #5395

Verifier: kelvin

Test Results

Codex Audit

Cross-PR Interaction

Remote Sync

Verdict: PASS

Independent Verification: PR #5395

Verifier: noa (independent, did not author this code)

Test Results

Codex Audit

Commands Run

Remote Sync

Verdict: PASS

Combined UAT Summary: Desktop Migration PRs

Verifier: noa | Branch:

Combined: 1026 pass, 13 fail (pre-existing), 42 errors (env-only) | Cross-PR interference: none | Remote sync: verified

Overall Verdict: PASS, ready for merge in order #5374 → #5395 → #5413

New service replacing direct Deepgram connection. Connects to backend /v4/listen with Bearer auth header, streams mono PCM16 audio at 16kHz, parses backend response format (segment arrays, ping heartbeats, events). Configurable source parameter for BLE device type propagation. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Add OutputMode enum (.stereo/.mono) with mono averaging both channels. Fix processBuffers() to work when only one source has data (e.g. system audio disabled by default) — previously min(mic, 0) = 0 blocked all output. Existing silence-padding handles the gap. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace concrete TranscriptionService parameter with audioSink closure for decoupled audio routing. Callers provide destination closure instead of coupling to a specific transcription type. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace direct Deepgram with BackendTranscriptionService. Force streaming mode, set AudioMixer to mono. Add backendOwnsConversation flag to skip createConversationFromSegments() (backend creates conversations via lifecycle manager). Pass correct source for BLE devices. Remove DEEPGRAM_API_KEY check. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace direct Deepgram with backend service for live PTT. Remove batch transcription path entirely — backend handles STT server-side. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

No longer needed — STT now routes through backend /v4/listen. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

71a20c0 to e2a8857

Independent Verification: PR #5395 (rebased)

Verifier: noa | Branch:

Test Results

Architecture Review

Mac Mini E2E

Verdict: ✅ PASS

Deployment Steps Checklist

Deploy surfaces: Desktop only (no backend changes)

Pre-merge

Desktop deploy (automatic)

Post-deploy verification

Rollback plan

by AI for @beastoin

Independent Verification: PR #5395 (fix/desktop-stt-backend-5393)

Verifier: noa (independent)

Results

Key Evidence

Zero DEEPGRAM references in entire log. The old direct Deepgram path is fully replaced by BackendTranscriptionService.

Verdict: PASS

Independent Verification: PR #5395

Verifier: noa (independent)

Scope

Desktop STT backend migration: replace direct Deepgram with BackendTranscriptionService (wss://api.omi.me/v4/listen), switch audio to mono.

Results

Codex Warnings (non-blocking)

Verdict: PASS

Core fix verified: zero DEEPGRAM references in runtime log. BackendTranscriptionService connects and syncs data correctly. Mono audio pipeline works.

Closes #5393 (Phase 1). Routes desktop STT through backend /v4/listen WebSocket. Removes DEEPGRAM_API_KEY from client — API keys no longer bundled in the app.

What changed

- New: BackendTranscriptionService.swift: WebSocket client for /v4/listen with Bearer auth, mono PCM16 streaming, response parsing (segment arrays, ping heartbeats, events), keepalive, reconnection.
- AudioMixer.swift: Mono output mode + single-source fix (system audio disabled → 0 bytes was a pre-existing bug).
- BleAudioService.swift: Closure-based audioSink instead of concrete TranscriptionService type.
- AppState.swift: Wires BackendTranscriptionService, backendOwnsConversation flag (prevents duplicate conversations), correct BLE source propagation, forces streaming mode.
- PushToTalkManager.swift: Uses BackendTranscriptionService for live PTT.
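As a rough illustration of the mono output mode, the sketch below averages interleaved stereo PCM16 samples into mono. It assumes an interleaved L/R sample layout; downmixToMono is a hypothetical helper and is not taken from AudioMixer.swift, whose actual buffer handling is not shown in this PR page.

```swift
import Foundation

/// Downmix interleaved stereo PCM16 samples (L, R, L, R, ...) to mono
/// by averaging each left/right pair. Hypothetical helper for illustration.
func downmixToMono(_ interleaved: [Int16]) -> [Int16] {
    var mono: [Int16] = []
    mono.reserveCapacity(interleaved.count / 2)
    var i = 0
    // A trailing unpaired sample, if any, is dropped.
    while i + 1 < interleaved.count {
        // Widen to Int32 so the sum cannot overflow Int16.
        let sum = Int32(interleaved[i]) + Int32(interleaved[i + 1])
        mono.append(Int16(sum / 2))
        i += 2
    }
    return mono
}
```

Averaging keeps the result within the Int16 range without clipping, at the cost of halving the level when only one channel carries signal.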

Architecture

Backend owns conversation lifecycle. Desktop sends raw audio, receives transcript segments.
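On the receiving side, the client has to tell transcript segments apart from heartbeats and events. The sketch below shows one way to classify incoming text frames. The wire format is an assumption: the literal "ping" heartbeat, the "type" field on events, and the BackendMessage and classify names are all hypothetical and are not confirmed by this PR.

```swift
import Foundation

/// Hypothetical classification of text frames received from the backend.
enum BackendMessage: Equatable {
    case ping
    case segments(count: Int)
    case event(type: String)
    case unknown
}

func classify(_ text: String) -> BackendMessage {
    // Assumption: heartbeats arrive as the literal text "ping".
    if text == "ping" { return .ping }
    guard let data = text.data(using: .utf8),
          let json = try? JSONSerialization.jsonObject(with: data) else {
        return .unknown
    }
    // A top-level JSON array is treated as a batch of transcript segments.
    if let array = json as? [Any] {
        return .segments(count: array.count)
    }
    // Assumption: events are JSON objects carrying a string "type" field.
    if let object = json as? [String: Any], let type = object["type"] as? String {
        return .event(type: type)
    }
    return .unknown
}
```

Only the segment case would be forwarded to onTranscript; heartbeats and events can be handled or ignored without touching conversation state, since the backend owns that lifecycle.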

Verification

e2a88573

Driver verdict: PASS. No backend changes, desktop-only. STT works through /v4/listen.

Infra Prerequisites

/v4/listen already supports desktop auth and all required params on prod

Deployment Steps

desktop_auto_release.yml → Codemagic

./scripts/rollback_release.sh <tag>

Merge order

#5374 → this PR → #5413

by AI for @beastoin