OmNomNicient is designed to help users identify dishes based on photos and recommend restaurants selling that dish.

It allows users who are unfamiliar with dish names to easily identify dishes and find places around them selling the dish.

- Users upload an image of the dish to be identified.

- They then enter the address near which they would like to find restaurants selling that dish.

- Upon submission, OmNomNicient identifies the dish and recommends restaurants near the entered address.

How We Built This:

- Identify Dish:

When an image is uploaded, it is passed through a TensorFlow machine learning model, trained with supervised learning, which identifies the dish.

- Identify Address Coordinates:

The address entered by the user is then passed to Google Maps' Geocoding API, which returns the latitude and longitude coordinates of the address.

- Find Restaurants Near The Address, Selling The Dish:

The identified dish and the latitude and longitude coordinates are then passed to Google Maps' Places API, which returns restaurants near those coordinates that sell the dish.

- Find Restaurant Photos:

A third API call is made to Google Maps' Places API to retrieve the restaurant images.
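The three Google Maps calls described above can be sketched as plain request builders. This is a minimal illustration, not the project's actual code: it assumes the legacy Geocoding, Places Nearby Search, and Place Photos web service endpoints, and the function names, the `radius_m` default, and the `max_width` default are hypothetical. The README does not state which Places endpoint or search parameters were used.

```python
from typing import Tuple
from urllib.parse import urlencode

# Assumed endpoints (legacy Google Maps web services).
GEOCODE_URL = "https://maps.googleapis.com/maps/api/geocode/json"
NEARBY_URL = "https://maps.googleapis.com/maps/api/place/nearbysearch/json"
PHOTO_URL = "https://maps.googleapis.com/maps/api/place/photo"


def geocode_request(address: str, api_key: str) -> str:
    """Build the Geocoding request that turns an address into coordinates."""
    return f"{GEOCODE_URL}?{urlencode({'address': address, 'key': api_key})}"


def extract_coordinates(geocode_response: dict) -> Tuple[float, float]:
    """Read (lat, lng) from the first result of a Geocoding JSON response."""
    location = geocode_response["results"][0]["geometry"]["location"]
    return location["lat"], location["lng"]


def nearby_request(dish: str, lat: float, lng: float, api_key: str,
                   radius_m: int = 1500) -> str:
    """Build a Nearby Search request for restaurants matching the dish name."""
    params = {
        "location": f"{lat},{lng}",
        "radius": radius_m,      # hypothetical default search radius in metres
        "keyword": dish,         # the label produced by the dish classifier
        "type": "restaurant",
        "key": api_key,
    }
    return f"{NEARBY_URL}?{urlencode(params)}"


def photo_request(photo_reference: str, api_key: str, max_width: int = 400) -> str:
    """Build a Place Photos request for one restaurant image."""
    params = {"maxwidth": max_width, "photo_reference": photo_reference, "key": api_key}
    return f"{PHOTO_URL}?{urlencode(params)}"
```

Keeping the builders separate from the HTTP client makes each step easy to check without network access. A caller would fetch `geocode_request(...)`, pass the JSON to `extract_coordinates`, and feed the result into `nearby_request`.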

- Add restaurants to the favourites category to keep track of them.

- Remember the places you've tried before.

- Easily favourite a restaurant that you've eaten at and delete it from the past eats category.

- Look through your history of previous searches.

- View search results again.
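As an illustration of how the favourites and past eats features above might sit on a SQL database, here is a minimal sketch using Python's built-in `sqlite3`. The table name, columns, and helper functions are all hypothetical; the README does not describe the real schema or which SQL database is used.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    """Create one hypothetical table holding both categories."""
    conn.execute(
        """
        CREATE TABLE saved_restaurants (
            user_id  INTEGER NOT NULL,
            place_id TEXT    NOT NULL,
            name     TEXT    NOT NULL,
            category TEXT    NOT NULL
                CHECK (category IN ('favourites', 'past_eats')),
            PRIMARY KEY (user_id, place_id, category)
        )
        """
    )


def save(conn, user_id: int, place_id: str, name: str, category: str) -> None:
    """Add a restaurant to a category; saving it twice is a no-op."""
    with conn:
        conn.execute(
            "INSERT OR IGNORE INTO saved_restaurants VALUES (?, ?, ?, ?)",
            (user_id, place_id, name, category),
        )


def favourite_past_eat(conn, user_id: int, place_id: str) -> bool:
    """Move a restaurant from past eats to favourites in one transaction."""
    with conn:  # commits on success, rolls back if an exception is raised
        row = conn.execute(
            "SELECT name FROM saved_restaurants "
            "WHERE user_id = ? AND place_id = ? AND category = 'past_eats'",
            (user_id, place_id),
        ).fetchone()
        if row is None:
            return False
        conn.execute(
            "INSERT OR IGNORE INTO saved_restaurants VALUES (?, ?, ?, 'favourites')",
            (user_id, place_id, row[0]),
        )
        conn.execute(
            "DELETE FROM saved_restaurants "
            "WHERE user_id = ? AND place_id = ? AND category = 'past_eats'",
            (user_id, place_id),
        )
    return True


def list_category(conn, user_id: int, category: str) -> list:
    """Return the restaurant names a user has saved in one category."""
    rows = conn.execute(
        "SELECT name FROM saved_restaurants "
        "WHERE user_id = ? AND category = ? ORDER BY name",
        (user_id, category),
    )
    return [r[0] for r in rows]
```

Doing the insert and the delete inside a single transaction means a restaurant cannot end up missing from both categories if one statement fails.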

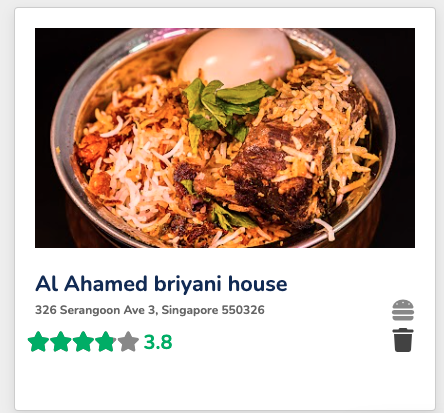

- Restaurant name

- Restaurant rating

- Restaurant address

- Restaurant image
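The four fields listed above could be pulled out of a single Places result along these lines. This is a sketch that assumes the legacy Places JSON shape (`name`, `rating`, `vicinity` or `formatted_address`, and `photos[].photo_reference`); the `RestaurantCard` class and `to_card` helper are hypothetical names, not part of the project.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RestaurantCard:
    name: str
    rating: Optional[float]          # missing when a place has no reviews
    address: str
    photo_reference: Optional[str]   # token for a later Place Photos request


def to_card(place: dict) -> RestaurantCard:
    """Map one Places result dict onto the fields shown in the search results."""
    photos = place.get("photos") or []
    return RestaurantCard(
        name=place["name"],
        rating=place.get("rating"),
        # Nearby Search returns "vicinity"; Text Search returns "formatted_address".
        address=place.get("vicinity") or place.get("formatted_address", ""),
        photo_reference=photos[0]["photo_reference"] if photos else None,
    )
```

Treating the rating and the photo as optional keeps the result list from breaking on places that have neither.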

User Interface:

Component Routing:

Server:

Database:

Authentication:

Image Detection:

Restaurant Search:

Reason for Choosing a SQL Database:

- Given the small size and low complexity of our app, we did not have much data to store, and the data had few relationships with other data. Hence, we did not need the ability to embed data that a NoSQL database provides.

- We wanted users to be able to type in their address and use it to search for restaurants nearby.

- Google Maps offers a variety of APIs, which allowed us to convert the user's address into latitude and longitude coordinates and use these to search for restaurants serving the identified dish.

- Additionally, the Google Places API allowed us to customize our search parameters to get more accurate location results.

- Our desire to use a machine learning model to identify dishes in an image led us to TensorFlow.

- TensorFlow's ability to train a machine learning model to identify objects in an image suited this need.

- We chose JSON Web Tokens as our method of authentication because each token is digitally signed, which lets the server detect a token that has been tampered with.

Dominique Yeo | LinkedIn

Shannon Suresh | LinkedIn