Blog post: https://araffin.github.io/post/rl102/

Website: https://rlsummerschool.com/

Slides: https://araffin.github.io/slides/dqn-tutorial/

Stable-Baselines3 repo: https://github.com/DLR-RM/stable-baselines3

RL Virtual School 2021: https://github.com/araffin/rl-handson-rlvs21

RL Summer School 2023: https://rlsummerschool.com/2023/

RL Summer School 2026: https://2026.rlsummerschool.com/

- Fitted Q-Iteration (FQI) Colab Notebook

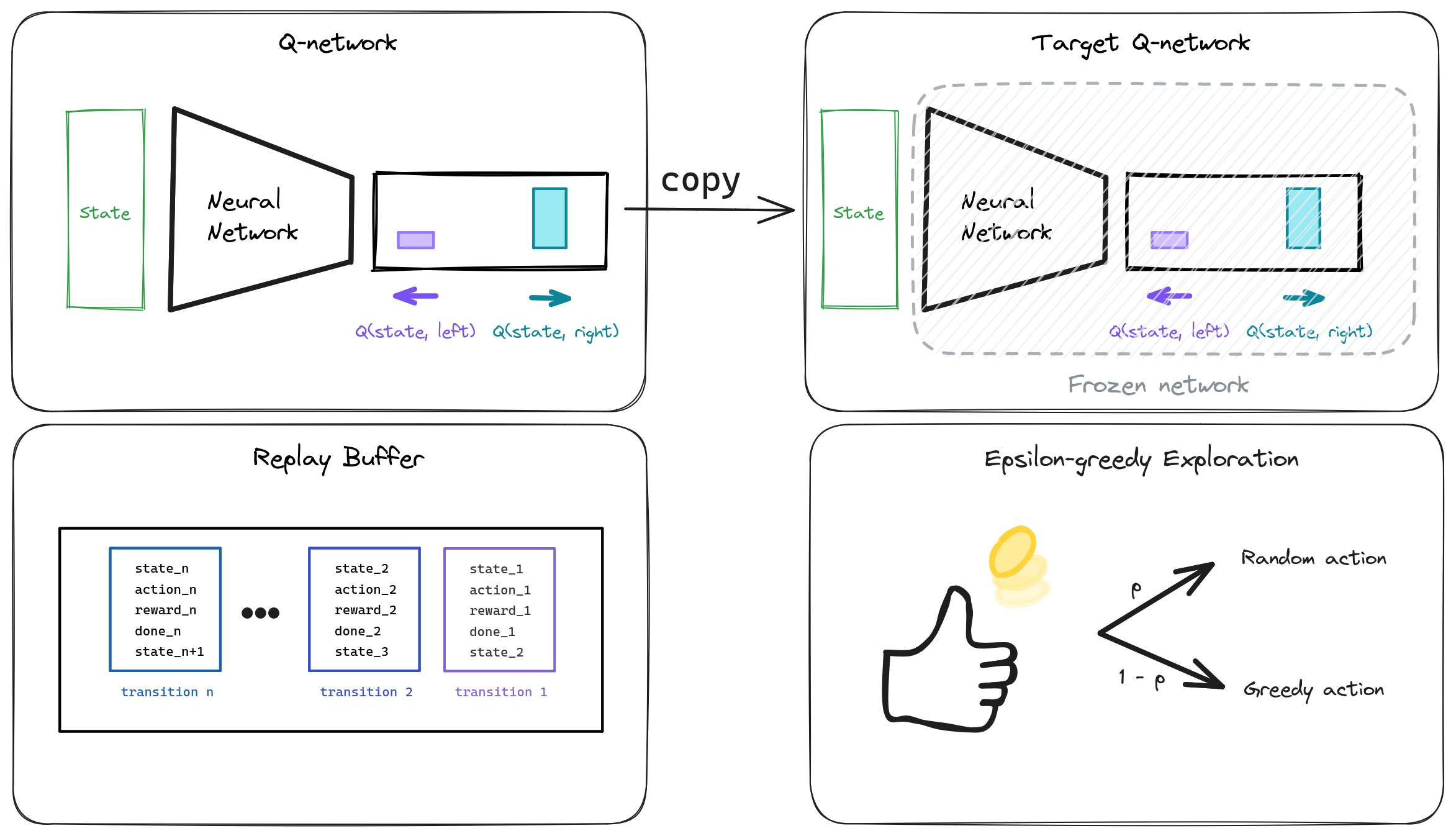

- Deep Q-Network (DQN) Part I: DQN Components: Replay Buffer, Q-Network, ... Colab Notebook

- Deep Q-Network (DQN) Part II: DQN Update and Training Loop Colab Notebook

- Install uv

- Run `uv run jupyter lab notebooks`

Solutions can be found in the `notebooks/solutions/` folder.

The code in the `dqn_tutorial` package can also be used to bypass some exercises.