Building state-of-the-art transformer models from scratch with modern MLOps practices

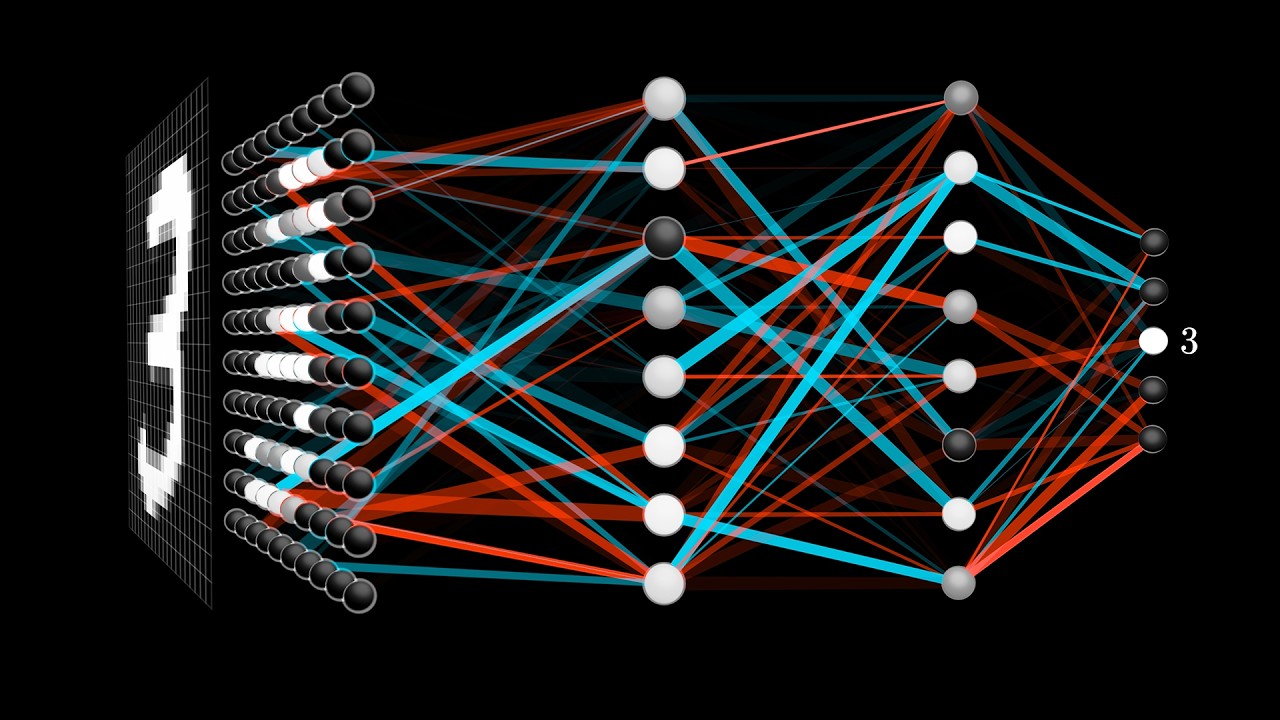

🧠 Neural Networks Fundamentals Playlist by 3Blue1Brown

Click the image above to watch the complete series 📹

🎯 Core Concepts | 🔬 Visual Understanding | 🚀 Foundation for Transformers

| Episode | Topic | Duration | Key Concepts |
|---|---|---|---|
| 1 | But what is a neural network? | 19 min | Neurons, layers, MNIST |
| 2 | Gradient descent, how neural networks learn | 21 min | Cost functions, optimization |
| 3 | What is backpropagation really doing? | 14 min | Chain rule, derivatives |
| 4 | Backpropagation calculus | 10 min | Mathematical details |

📋 What you'll learn in this video:

- 🔧 Transformer Architecture Deep Dive: Understanding attention mechanisms, positional encoding, and layer normalization

- 🚀 Implementation from Scratch: Building transformers with PyTorch, including multi-head attention and feed-forward networks

- 📊 Training Strategies: Advanced techniques for training large transformer models efficiently

- 🎯 Fine-tuning & Transfer Learning: Adapting pre-trained models for specific tasks

- 🛠️ MLOps Integration: Using modern tools like UV for dependency management and reproducible environments

- 📈 Performance Optimization: Memory management, gradient checkpointing, and distributed training
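To make the attention mechanism from the first bullet concrete, here is a minimal sketch of scaled dot-product attention. It is written in dependency-free Python rather than PyTorch so it runs anywhere; the function names `softmax` and `scaled_dot_product_attention` are illustrative and are not taken from this repository.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (numerically stable form)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors.

    queries: list of vectors of length d_k
    keys:    list of vectors of length d_k
    values:  list of vectors (one per key)
    """
    d_k = len(keys[0])
    scale = math.sqrt(d_k)
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Two identical keys receive equal weight, so the output is the mean of the values.
result = scaled_dot_product_attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [1.0, 0.0]],
    values=[[1.0, 2.0], [3.0, 4.0]],
)
print(result)
```

Multi-head attention applies this same operation several times in parallel on different learned projections of the inputs and concatenates the results.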

Prerequisites:

- Python 3.12+ (3.13 for GPU environments)

- CUDA 12.06+ (for GPU training)

- UV Package Manager

- Recommended: Watch the 3Blue1Brown series first! 🎥
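Before installing, it can help to confirm the basics are in place. The following is a small sketch, not a script shipped with this repository: it checks the running Python version against the 3.12 minimum listed above and looks for a `uv` executable on `PATH`. The function name `check_prerequisites` and its parameters are hypothetical, and it does not verify the CUDA installation.

```python
import shutil
import sys

# Minimum Python version taken from the list above.
MIN_PYTHON = (3, 12)


def check_prerequisites(version_info=sys.version_info, which=shutil.which):
    """Return a list of human-readable problems; an empty list means all good.

    `version_info` and `which` are injectable so the check is easy to test.
    """
    problems = []
    major_minor = tuple(version_info[:2])
    if major_minor < MIN_PYTHON:
        problems.append(
            f"Python {major_minor[0]}.{major_minor[1]} found, "
            f"but {MIN_PYTHON[0]}.{MIN_PYTHON[1]}+ is required"
        )
    if which("uv") is None:
        problems.append("uv was not found on PATH")
    return problems


for problem in check_prerequisites():
    print("Missing prerequisite:", problem)
```

If the loop prints nothing, both checks passed.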

```bash
# 1. Clone the repository
git clone https://github.com/your-username/MLX8-W3-Transformers.git
cd MLX8-W3-Transformers

# 2. Install UV (if not already installed)
curl -LsSf https://astral.sh/uv/install.sh | sh

# 3. Setup environment (auto-detects your platform)
uv sync

# 4. Run your first transformer!
uv run python examples/basic_transformer.py
```

🪟 Windows 11 Development

```bash
echo "3.12" > .python-version
uv sync --extra dev
uv run python examples/cpu_training.py
```

🍎 macOS (Intel & Apple Silicon)

```bash
echo "3.12" > .python-version
uv sync --extra dev
uv run python examples/cpu_training.py
```

🐧 Ubuntu 22.04 + CUDA 12.06

```bash
echo "3.13" > .python-version
export UV_EXTRA_INDEX_URL="https://download.pytorch.org/whl/cu121"
uv sync --extra gpu-dev
uv run python examples/gpu_training.py
```

🐧 Ubuntu 24.04 + CUDA 12.8

```bash
echo "3.13" > .python-version
export UV_EXTRA_INDEX_URL="https://download.pytorch.org/whl/cu128"
uv sync --extra gpu-dev
uv run python examples/gpu_training.py
```
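The four platform recipes above differ only in the Python version, the optional PyTorch wheel index, the `uv` extra, and the example script. As an illustration, that pattern can be captured in a small lookup table. This is a sketch only: the platform keys, the `PlatformSetup` class, and `setup_commands` are hypothetical names and not part of this repository. The values are copied from the commands above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PlatformSetup:
    python_version: str
    extra: str                       # value passed to `uv sync --extra`
    example: str                     # script to run once the environment is ready
    index_url: Optional[str] = None  # extra PyTorch wheel index (GPU setups only)


# Hypothetical keys; the values mirror the per-platform commands in this README.
PLATFORM_SETUPS = {
    "windows-11": PlatformSetup("3.12", "dev", "examples/cpu_training.py"),
    "macos": PlatformSetup("3.12", "dev", "examples/cpu_training.py"),
    "ubuntu-22.04": PlatformSetup(
        "3.13", "gpu-dev", "examples/gpu_training.py",
        "https://download.pytorch.org/whl/cu121",
    ),
    "ubuntu-24.04": PlatformSetup(
        "3.13", "gpu-dev", "examples/gpu_training.py",
        "https://download.pytorch.org/whl/cu128",
    ),
}


def setup_commands(platform_key):
    """Return the shell commands for one platform, in the order they should run."""
    setup = PLATFORM_SETUPS[platform_key]
    commands = [f'echo "{setup.python_version}" > .python-version']
    if setup.index_url is not None:
        commands.append(f'export UV_EXTRA_INDEX_URL="{setup.index_url}"')
    commands.append(f"uv sync --extra {setup.extra}")
    commands.append(f"uv run python {setup.example}")
    return commands


for line in setup_commands("ubuntu-24.04"):
    print(line)
```

Keeping the differences in one table makes it easier to see that the CPU platforms skip the `UV_EXTRA_INDEX_URL` step entirely, while the GPU platforms differ only in the wheel index.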