

9 Skipped Deployments


No actionable comments were generated in the recent review. 🎉

ℹ️ Recent review info

Configuration used: defaults

Review profile: CHILL

Plan: Pro

📒 Files selected for processing (2)

📝 Walkthrough

Adds a new Lincolnshire case study markdown, extends the case study layout to support external long-read CTAs via frontMatter (…

Estimated code review effort: 🎯 3 (Moderate) | ⏱️ ~22 minutes


🚥 Pre-merge checks | ✅ 2 | ❌ 3

❌ Failed checks (2 warnings, 1 inconclusive)

✅ Passed checks (2 passed)



Actionable comments posted: 9

🧹 Nitpick comments (2)

site/public/rss.xml (1)

1-1: Consider making feed generation deterministic to reduce PR noise.

`lastBuildDate`/channel `pubDate` updates on each regeneration create high-churn diffs in a tracked artifact file. If possible, generate these XML files at deploy time (or avoid committing unchanged feed artifacts from unrelated content edits). This will keep PRs focused and reduce merge conflicts.
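A minimal sketch of the "fixed value derived from published item dates" option, assuming the feed generator can hand over each item's publication date as a timezone-aware `datetime`. `channel_build_date` is a hypothetical helper, not part of the site's current feed code; it takes the newest item date instead of the wall clock, so regenerating with no content changes yields the same string.

```python
from datetime import datetime, timezone
from email.utils import format_datetime

# Fixed fallback so an empty feed is also stable across builds (an assumption).
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def channel_build_date(item_dates: list[datetime]) -> str:
    """Return an RFC 822 date string for the channel's <lastBuildDate>/<pubDate>.

    Derived from the newest item date instead of "now", so the value only
    changes when an item's date changes.
    """
    newest = max(item_dates, default=EPOCH)
    # usegmt=True requires a UTC datetime, so normalize first.
    return format_datetime(newest.astimezone(timezone.utc), usegmt=True)


dates = [
    datetime(2026, 1, 9, tzinfo=timezone.utc),
    datetime(2026, 1, 22, tzinfo=timezone.utc),
]
print(channel_build_date(dates))  # Thu, 22 Jan 2026 00:00:00 GMT
```

Because the result depends only on item dates, a content edit that does not add or re-date an item leaves the channel metadata untouched.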

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@site/public/rss.xml` at line 1, The RSS feed file rss.xml is updating channel-level timestamps (lastBuildDate and pubDate) on every regeneration, which causes high-churn diffs; change the generation process so these fields are deterministic or only set at deploy time (or exclude rss.xml from commits when only timestamps change). Update the feed generation logic that writes lastBuildDate and pubDate to either keep a fixed value (e.g., derived from published item dates), omit them when unchanged, or move stamp-setting to your deployment pipeline so the channel metadata in rss.xml remains stable across content edits.

site/layouts/casestudy.tsx (1)

561-571: Consider adding a visual or textual indication that the link opens in a new tab.

The external link correctly uses `rel="noopener noreferrer"` for security, but users (especially screen reader users) may not know it opens in a new tab. Consider adding a visual indicator or screen-reader-only text.

♿ Suggested accessibility improvement

<a
  href={longReadLink}
  target="_blank"
  rel="noopener noreferrer"
  className="inline-flex items-center gap-2 bg-slate-900 dark:bg-white text-white dark:text-[#111] text-sm font-semibold px-6 py-3.5 rounded-lg hover:bg-slate-700 dark:hover:bg-slate-100 transition-colors shrink-0"
>
  Read the full story
+ <span className="sr-only">(opens in new tab)</span>
  <svg viewBox="0 0 16 16" fill="none" stroke="currentColor" strokeWidth="2" strokeLinecap="round" strokeLinejoin="round" className="w-4 h-4">
    <path d="M3 8h10M9 4l4 4-4 4"/>
  </svg>
</a>

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@site/layouts/casestudy.tsx` around lines 561 - 571, The external anchor using longReadLink opens in a new tab but lacks an accessible indication; update the <a> that renders "Read the full story" to include a visible or screen-reader-only cue (e.g., append a visually-hidden span with text like "(opens in new tab)" and/or add an accessible label via aria-label or aria-describedby) and ensure the decorative external-tab SVG has aria-hidden="true" so screen readers only announce the explicit hint; keep target="_blank" and rel="noopener noreferrer" intact.
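To keep this from regressing, a check could run over the built HTML, where JSX's `className` has already become `class`. A sketch using Python's standard-library `html.parser`; `NewTabLinkChecker` and its pass rule (an `sr-only` child element or an `aria-label` that mentions "new tab") are assumptions for illustration, not an existing check in this repo.

```python
from html.parser import HTMLParser


class NewTabLinkChecker(HTMLParser):
    """Collect hrefs of <a target="_blank"> links that give no "new tab" hint.

    A link passes if its aria-label mentions "new tab" or it contains an
    element with the "sr-only" class.
    """

    def __init__(self) -> None:
        super().__init__()
        self.problems: list[str] = []
        self._in_link = False
        self._has_hint = False
        self._href = ""

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "a" and attr.get("target") == "_blank":
            self._in_link = True
            self._href = attr.get("href") or ""
            self._has_hint = "new tab" in (attr.get("aria-label") or "").lower()
        elif self._in_link and "sr-only" in (attr.get("class") or "").split():
            self._has_hint = True

    def handle_endtag(self, tag):
        if tag == "a" and self._in_link:
            if not self._has_hint:
                self.problems.append(self._href)
            self._in_link = False


checker = NewTabLinkChecker()
checker.feed(
    '<a href="/bad" target="_blank" rel="noopener noreferrer">Read the full story</a>'
    '<a href="/good" target="_blank" rel="noopener noreferrer">Read the full story'
    '<span class="sr-only">(opens in new tab)</span></a>'
)
checker.close()
print(checker.problems)  # ['/bad']
```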

🤖 Prompt for all review comments with AI agents

Verify each finding against the current code and only fix it if needed.

Inline comments:

In `@site/content/case-studies/modernizing-lincolnshire-county-council39s-open-data-portal.md`:

- Around line 26-31: Fix two copy typos in the case study content: replace the double period after "admin dashboard.." with a single period, and replace the doubled single quotes in "Council''s" with a proper apostrophe ("Council's") so the user-visible text reads correctly in the paragraph that describes PortalJS Cloud delivering a managed service and the modern branded interface.

- Around line 10-12: The three keystat strings ('Seamless/n catalogue migration', 'Zero/n infrastructure overhead', '100%/n Budget-friendly solution') contain the literal token "/n" which renders incorrectly; replace each "/n" with a proper line-break representation (either an actual line break, a Markdown hard break, or an HTML <br/> as appropriate for this content) so the phrases render on two lines rather than showing "/n" literally.

- Around line 6-7: The frontmatter keys image and images contain misspelled filenames (e.g., "linconshire0.jpg" and "lincon" variants) that will 404; update the values so they consistently use the correct "lincolnshire" spelling (e.g., rename "linconshire0.jpg" to "lincolnshire0.jpg" and ensure all entries in image and images arrays match the actual asset filenames used in the repo: "lincolnshire1.png", "lincolnshire2.png", etc.), and verify the referenced assets exist in the images/casestudies directory.

In `@site/public/atom.xml`:

- Line 5504: Replace the user-facing placeholder "Newsletter (insert link)" in the quoted atom feed string "There will be more automated datasets on :datahub: so join our [community chat on :discord: Discord](https://discord.gg/KrRzMKU) and our Newsletter (insert link) to receive the latest news!" by either removing the newsletter mention entirely or replacing "Newsletter (insert link)" with the actual newsletter URL/text; ensure the final copy uses a valid Markdown/HTML link (e.g., "Newsletter (https://...)" or "[Newsletter](https://...)") so no broken/stale placeholder remains.

- Line 441: Fix the typo in the Atom feed summary: update the text inside the <summary type="html"> element (the summary string "programatically create datasets, ...") to use the correct spelling "programmatically" instead of "programatically" so the summary reads "...to programmatically create datasets, add resources, upload and replace data files, and update metadata—showing how to build repeatable data update pipelines with code examples."

- Around line 15-1014: The PR accidentally bundles broad RSS/Atom feed updates in site/public/atom.xml (multiple <entry> items such as the entries titled "Keep Your Portal Data Fresh: A Hands-On Guide to the PortalJS Cloud API", "Turning OpenMetadata into a User-Friendly Data Portal with PortalJS", etc.) that are unrelated to the Lincolnshire case-study; revert or remove any <entry> elements not part of the case-study, then regenerate atom.xml from only the intended case-study content (or run the canonical feed generation script used by the site build), verify the regenerated feed contains only the targeted entry/changes, and commit those feed changes in a separate PR so this PR remains scoped to the case-study.
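Before splitting the feed out, it helps to see which entries a regeneration actually touched. A sketch using the standard-library `xml.etree.ElementTree`, assuming `atom.xml` declares the standard Atom namespace, every `<entry>` carries an `<id>`, and the file is well-formed XML (if raw markup like the JSX noted for rss.xml is also unescaped here, parsing will fail, which is itself a useful signal). Feed it the base-branch and PR versions of the file; `entries_by_id` and `changed_entries` are hypothetical names.

```python
import xml.etree.ElementTree as ET

ATOM = "{http://www.w3.org/2005/Atom}"


def entries_by_id(feed_xml: str) -> dict[str, str]:
    """Map each Atom <entry>'s <id> text to its serialized XML."""
    root = ET.fromstring(feed_xml)
    entries = {}
    for entry in root.iter(f"{ATOM}entry"):
        entry.tail = None  # ignore whitespace after </entry> so position doesn't matter
        entries[entry.findtext(f"{ATOM}id", default="")] = ET.tostring(entry, encoding="unicode")
    return entries


def changed_entries(old_xml: str, new_xml: str) -> dict[str, list[str]]:
    """Classify entry ids as added, removed, or modified between two feed versions."""
    old, new = entries_by_id(old_xml), entries_by_id(new_xml)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


OLD = ('<feed xmlns="http://www.w3.org/2005/Atom">'
       "<entry><id>a</id><title>A</title></entry>"
       "<entry><id>b</id><title>B</title></entry></feed>")
NEW = ('<feed xmlns="http://www.w3.org/2005/Atom">'
       "<entry><id>a</id><title>A (edited)</title></entry>"
       "<entry><id>c</id><title>C</title></entry></feed>")
print(changed_entries(OLD, NEW))  # {'added': ['c'], 'removed': ['b'], 'modified': ['a']}
```

If the only ids reported are the ones this PR is meant to introduce, the feed change is scoped correctly.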

In `@site/public/rss.xml`:

- Around line 1-417: The RSS feed currently embeds full article bodies in the channel's <item><description> fields (see channel/item/description in rss.xml), causing excessive payloads; change generation so <description> contains a short summary/excerpt (e.g., first 200–400 chars or a generated teaser) with no full code blocks or inline images, and remove large embedded content from description for each item; if you need to publish full HTML keep it in <content:encoded> only when size-controlled (or behind an explicit flag) and ensure you strip large assets and code blocks before inserting into either tag.

- Around line 432-440: The RSS feed is embedding raw JSX/MDX (e.g., <img style={{"marginBottom": 0}}> and <div style={{textAlign: "center"}}>) into <description> for posts; update the RSS generation flow (the routine that produces <description> content) to render/serialize post bodies to plain HTML before writing the <description> element so attributes become valid HTML (e.g., style="margin-bottom: 0") and MDX/JSX fragments are converted/removed; ensure the serializer used in the RSS generator (where post bodies are passed into <description>) runs through the MDX/HTML renderer or a simple transform that normalizes style objects and outputs standard HTML markup for <img>, <div>, <iframe>, etc.
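If rendering through the MDX pipeline isn't practical right away, the "simple transform" option could look like this Python sketch. It is a regex stopgap, not an MDX renderer: it only handles flat object literals with string or numeric values, and `jsx_styles_to_html`, `PAIR` and the deliberately partial `UNITLESS` set are hypothetical. Numeric handling follows React's rule of appending `px` to non-zero numbers except for unitless properties.

```python
import re

# style={{ ... }} props as they appear in the feed, e.g. style={{"marginBottom": 0}}
JSX_STYLE = re.compile(r"style=\{\{(.*?)\}\}")
# One key: value pair; quoted values are tried first so commas inside them survive.
PAIR = re.compile(r"""["']?([A-Za-z]+)["']?\s*:\s*("[^"]*"|'[^']*'|[^,]+)""")
# A few properties React leaves unitless (not the full list).
UNITLESS = {"opacity", "zIndex", "fontWeight", "lineHeight", "flex", "flexGrow", "flexShrink", "order"}


def _kebab(prop: str) -> str:
    """camelCase -> kebab-case, e.g. marginBottom -> margin-bottom."""
    return re.sub(r"([A-Z])", r"-\1", prop).lower()


def _css_value(prop: str, raw: str) -> str:
    raw = raw.strip()
    if raw and raw[0] in "\"'":
        return raw[1:-1]  # quoted string: drop the quotes
    if re.fullmatch(r"-?\d+(\.\d+)?", raw) and float(raw) != 0 and prop not in UNITLESS:
        return raw + "px"  # React appends px to non-zero numbers for most properties
    return raw


def jsx_styles_to_html(markup: str) -> str:
    """Rewrite flat JSX style objects into HTML style="..." attributes."""

    def convert(match):
        decls = [
            f"{_kebab(prop)}: {_css_value(prop, value)}"
            for prop, value in PAIR.findall(match.group(1))
        ]
        return 'style="' + "; ".join(decls) + '"'

    return JSX_STYLE.sub(convert, markup)


print(jsx_styles_to_html('<img style={{"marginBottom": 0}} src="/a.png" />'))
# <img style="margin-bottom: 0" src="/a.png" />
```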

In `@site/public/sitemap-0.xml`:

- Line 40: The sitemap contains a malformed slug "modernizing-lincolnshire-county-council39s-open-data-portal"; locate the case-study content file that defines this slug (check frontmatter keys like slug/permalink or the filename containing "council39s"), change it to a clean slug (e.g. "modernizing-lincolnshire-county-councils-open-data-portal" or remove the apostrophe entirely), save the frontmatter, then regenerate the sitemap so the corrected URL replaces the bad entry; also search the repo for "council39s" to fix any other occurrences.
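The `council39s` fragment looks like what a slugifier produces when the title's apostrophe arrives as the HTML entity `&#39;` and only the `&`, `#` and `;` are stripped. That origin is a guess, but a slug function that decodes entities and drops apostrophes first avoids the problem either way. A Python sketch; `slugify` here is hypothetical, not the site's actual slug code.

```python
import html
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a post title into a URL slug.

    HTML entities are decoded and apostrophes removed before the
    non-alphanumeric cleanup, so "Council&#39;s" becomes "councils".
    """
    text = html.unescape(title)                     # "&#39;" -> "'"
    text = re.sub(r"['\u2019]", "", text)           # drop straight and curly apostrophes
    text = unicodedata.normalize("NFKD", text)      # split accents off letters ("ã" -> "a" + mark)
    text = text.encode("ascii", "ignore").decode()  # discard the marks and other non-ASCII
    text = re.sub(r"[^A-Za-z0-9]+", "-", text).strip("-")
    return text.lower()


print(slugify("Modernizing Lincolnshire County Council&#39;s Open Data Portal"))
# modernizing-lincolnshire-county-councils-open-data-portal
```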

---

Nitpick comments:

In `@site/layouts/casestudy.tsx`:

- Around line 561-571: The external anchor using longReadLink opens in a new tab but lacks an accessible indication; update the <a> that renders "Read the full story" to include a visible or screen-reader-only cue (e.g., append a visually-hidden span with text like "(opens in new tab)" and/or add an accessible label via aria-label or aria-describedby) and ensure the decorative external-tab SVG has aria-hidden="true" so screen readers only announce the explicit hint; keep target="_blank" and rel="noopener noreferrer" intact.

In `@site/public/rss.xml`:

- Line 1: The RSS feed file rss.xml is updating channel-level timestamps (lastBuildDate and pubDate) on every regeneration, which causes high-churn diffs; change the generation process so these fields are deterministic or only set at deploy time (or exclude rss.xml from commits when only timestamps change). Update the feed generation logic that writes lastBuildDate and pubDate to either keep a fixed value (e.g., derived from published item dates), omit them when unchanged, or move stamp-setting to your deployment pipeline so the channel metadata in rss.xml remains stable across content edits.

ℹ️ Review info

Configuration used: defaults

Review profile: CHILL

Plan: Pro

⛔ Files ignored due to path filters (6)

- site/public/images/casestudies/lincolnshire1.png is excluded by `!**/*.png`
- site/public/images/casestudies/lincolnshire2.png is excluded by `!**/*.png`
- site/public/images/casestudies/lincolnshire3.png is excluded by `!**/*.png`
- site/public/images/casestudies/lincolnshire4.png is excluded by `!**/*.png`
- site/public/images/casestudies/lincon.svg is excluded by `!**/*.svg`
- site/public/images/casestudies/linconshire0.jpg is excluded by `!**/*.jpg`

📒 Files selected for processing (5)

- site/content/case-studies/modernizing-lincolnshire-county-council39s-open-data-portal.md
- site/layouts/casestudy.tsx
- site/public/atom.xml
- site/public/rss.xml
- site/public/sitemap-0.xml

image: /images/casestudies/linconshire0.jpg
images: ['/images/casestudies/lincolnshire1.png', '/images/casestudies/lincolnshire2.png', '/images/casestudies/lincolnshire3.png', '/images/casestudies/lincolnshire4.png']


Fix likely broken media asset paths (linconshire / lincon).

These filenames look misspelled relative to “Lincolnshire” and may 404 in production.

Suggested fix

-image: /images/casestudies/linconshire0.jpg
+image: /images/casestudies/lincolnshire0.jpg
...
- '/images/casestudies/lincon.svg',
+ '/images/casestudies/lincoln.svg',

Also applies to: 45-45

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/content/case-studies/modernizing-lincolnshire-county-council39s-open-data-portal.md` around lines 6 - 7, The frontmatter keys image and images contain misspelled filenames (e.g., "linconshire0.jpg" and "lincon" variants) that will 404; update the values so they consistently use the correct "lincolnshire" spelling (e.g., rename "linconshire0.jpg" to "lincolnshire0.jpg" and ensure all entries in image and images arrays match the actual asset filenames used in the repo: "lincolnshire1.png", "lincolnshire2.png", etc.), and verify the referenced assets exist in the images/casestudies directory.
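A quick way to settle this is to check every referenced path against the files on disk. One thing to note first: the path-filter list in this review names `linconshire0.jpg` and `lincon.svg` as the committed filenames, so correcting only the frontmatter spelling would likely create a 404 rather than fix one, unless the files are renamed in the same change. A Python sketch, assuming images are served from `site/public` and referenced as root-relative `/images/...` paths; `missing_assets` is a hypothetical helper.

```python
import re
import tempfile
from pathlib import Path

# Root-relative image paths such as /images/casestudies/lincolnshire1.png
IMAGE_PATH = re.compile(r"/images/[\w./-]+\.(?:png|jpe?g|svg|webp|gif)")


def missing_assets(markdown: str, public_dir: Path) -> list[str]:
    """Return image paths referenced in `markdown` with no matching file under `public_dir`."""
    return [
        path
        for path in IMAGE_PATH.findall(markdown)
        if not (public_dir / path.lstrip("/")).is_file()
    ]


# Usage against a throwaway directory that mimics site/public.
with tempfile.TemporaryDirectory() as tmp:
    public = Path(tmp)
    (public / "images" / "casestudies").mkdir(parents=True)
    (public / "images" / "casestudies" / "linconshire0.jpg").touch()
    front = (
        "image: /images/casestudies/lincolnshire0.jpg\n"
        "images: ['/images/casestudies/linconshire0.jpg']\n"
    )
    missing = missing_assets(front, public)

print(missing)  # ['/images/casestudies/lincolnshire0.jpg']
```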

'Seamless/n catalogue migration',
'Zero/n infrastructure overhead',
'100%/n Budget-friendly solution',


Normalize keystat line-break token to avoid literal /n rendering.

/n is typically a typo and may render as plain text in UI.

Suggested fix

- 'Seamless/n catalogue migration',
- 'Zero/n infrastructure overhead',
- '100%/n Budget-friendly solution',
+ 'Seamless\ncatalogue migration',
+ 'Zero\ninfrastructure overhead',
+ '100%\nBudget-friendly solution',

📝 Committable suggestion

‼️ IMPORTANT

Carefully review the code before committing. Ensure that it accurately replaces the highlighted code, contains no missing lines, and has no issues with indentation. Thoroughly test & benchmark the code to ensure it meets the requirements.

- 'Seamless/n catalogue migration',
- 'Zero/n infrastructure overhead',
- '100%/n Budget-friendly solution',
+ 'Seamless\ncatalogue migration',
+ 'Zero\ninfrastructure overhead',
+ '100%\nBudget-friendly solution',

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/content/case-studies/modernizing-lincolnshire-county-council39s-open-data-portal.md` around lines 10 - 12, The three keystat strings ('Seamless/n catalogue migration', 'Zero/n infrastructure overhead', '100%/n Budget-friendly solution') contain the literal token "/n" which renders incorrectly; replace each "/n" with a proper line-break representation (either an actual line break, a Markdown hard break, or an HTML <br/> as appropriate for this content) so the phrases render on two lines rather than showing "/n" literally.
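One caveat on the suggested fix: if this frontmatter is parsed as YAML (it appears to be), single-quoted scalars have no escape sequences, so `'Seamless\ncatalogue migration'` yields a literal backslash followed by `n`, not a line break. A double-quoted string (`"Seamless\ncatalogue migration"`) would be needed for `\n` to become a newline. How casestudy.tsx splits keystats isn't shown here, so as a hedge the layout could tolerate all three forms; a hypothetical Python sketch of that splitting logic (the real layout is TSX, and `split_keystat` is an illustrative name):

```python
def split_keystat(stat: str) -> tuple[str, str]:
    """Split a keystat into (headline, caption) at the first line break.

    Accepts a real newline, a literal backslash-n (what a single-quoted YAML
    string yields for '\\n'), and the stray '/n' token, so existing content
    keeps rendering while the frontmatter is being fixed.
    """
    normalized = stat.replace("\\n", "\n").replace("/n", "\n")
    headline, _, caption = normalized.partition("\n")
    return headline.strip(), caption.strip()


for stat in ["Seamless/n catalogue migration", "Zero\\ninfrastructure overhead", "100%\nBudget-friendly solution"]:
    print(split_keystat(stat))
# ('Seamless', 'catalogue migration')
# ('Zero', 'infrastructure overhead')
# ('100%', 'Budget-friendly solution')
```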

PortalJS Cloud delivered exactly what Lincolnshire needed: a managed service that eliminated infrastructure costs while providing a modern, customizable frontend. The migration preserved their entire CKAN catalogue while giving council staff direct control over day-to-day operations through an intuitive admin dashboard..

- **Complete Data Migration**: Every dataset, resource, organization, and metadata element transferred seamlessly from CKAN to PortalJS Cloud.

- **Modern, Branded Interface**: Fully customised frontend reflecting Lincolnshire County Council''s visual identity and accessibility requirements.


Clean up copy typos in solution text.

There are two user-visible text issues: dashboard.. and Council''s.

Suggested fix

-PortalJS Cloud delivered exactly what Lincolnshire needed: a managed service that eliminated infrastructure costs while providing a modern, customizable frontend. The migration preserved their entire CKAN catalogue while giving council staff direct control over day-to-day operations through an intuitive admin dashboard..
+PortalJS Cloud delivered exactly what Lincolnshire needed: a managed service that eliminated infrastructure costs while providing a modern, customizable frontend. The migration preserved their entire CKAN catalogue while giving council staff direct control over day-to-day operations through an intuitive admin dashboard.
...
-- **Modern, Branded Interface**: Fully customised frontend reflecting Lincolnshire County Council''s visual identity and accessibility requirements.
+- **Modern, Branded Interface**: Fully customised frontend reflecting Lincolnshire County Council's visual identity and accessibility requirements.

📝 Committable suggestion


-PortalJS Cloud delivered exactly what Lincolnshire needed: a managed service that eliminated infrastructure costs while providing a modern, customizable frontend. The migration preserved their entire CKAN catalogue while giving council staff direct control over day-to-day operations through an intuitive admin dashboard..
-- **Complete Data Migration**: Every dataset, resource, organization, and metadata element transferred seamlessly from CKAN to PortalJS Cloud.
-- **Modern, Branded Interface**: Fully customised frontend reflecting Lincolnshire County Council''s visual identity and accessibility requirements.
+PortalJS Cloud delivered exactly what Lincolnshire needed: a managed service that eliminated infrastructure costs while providing a modern, customizable frontend. The migration preserved their entire CKAN catalogue while giving council staff direct control over day-to-day operations through an intuitive admin dashboard.
+- **Complete Data Migration**: Every dataset, resource, organization, and metadata element transferred seamlessly from CKAN to PortalJS Cloud.
+- **Modern, Branded Interface**: Fully customised frontend reflecting Lincolnshire County Council's visual identity and accessibility requirements.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/content/case-studies/modernizing-lincolnshire-county-council39s-open-data-portal.md` around lines 26 - 31, Fix two copy typos in the case study content: replace the double period after "admin dashboard.." with a single period, and replace the doubled single quotes in "Council''s" with a proper apostrophe ("Council's") so the user-visible text reads correctly in the paragraph that describes PortalJS Cloud delivering a managed service and the modern branded interface.
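Two small regex checks could keep these from slipping into future case studies. One plausible origin for `Council''s`: doubling the quote is how YAML single-quoted strings escape an apostrophe, so text copied out of frontmatter or a YAML export into the Markdown body keeps both quotes. A Python sketch; the patterns and the `copy_typos` name are hypothetical, and the `(?![./])` lookahead exists only to skip ellipses and `../` paths.

```python
import re

# Exactly two periods, not part of an ellipsis or a "../" relative path.
DOUBLE_PERIOD = re.compile(r"(?<!\.)\.\.(?![./])")
# Two single quotes between word characters, e.g. "Council''s".
DOUBLED_QUOTE = re.compile(r"(?<=\w)''(?=\w)")


def copy_typos(text: str) -> list[tuple[int, str]]:
    """Return (line number, line) for every line matching either pattern."""
    return [
        (number, line)
        for number, line in enumerate(text.splitlines(), start=1)
        if DOUBLE_PERIOD.search(line) or DOUBLED_QUOTE.search(line)
    ]


sample = (
    "through an intuitive admin dashboard..\n"
    "An ellipsis... is fine\n"
    "See ../images for assets\n"
    "reflecting Lincolnshire County Council''s visual identity"
)
for hit in copy_typos(sample):
    print(hit)
# (1, 'through an intuitive admin dashboard..')
# (4, "reflecting Lincolnshire County Council''s visual identity")
```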

<entry>
<title>Keep Your Portal Data Fresh: A Hands-On Guide to the PortalJS Cloud API</title>
<id>https://portaljs.com/blog/keep-your-portal-data-fresh-a-hands-on-guide-to-the-portaljs-cloud-api</id>
<link href="https://portaljs.com/blog/keep-your-portal-data-fresh-a-hands-on-guide-to-the-portaljs-cloud-api" />
<updated>2026-01-22T00:00:00.000Z</updated>
<published>2026-01-22T00:00:00.000Z</published>
<author>
<name>João Demenech</name>
</author>
<content type="html">
Keeping data portals up to date is harder than it looks. Files change, metadata drifts, and manual uploads don’t scale once updates become frequent or automated.

PortalJS Cloud solves this by exposing a powerful API that lets you manage datasets, resources, and data files programmatically. In this guide, we’ll walk through how to use the **PortalJS Cloud API** to automatically create datasets, upload data, and keep both data and metadata in sync—using real Python code.

By the end, you’ll have all the pieces needed to build a repeatable, automated data publishing pipeline.

## The Problem with Manual Data Updates

Many portals start with a simple workflow:

- Upload a CSV through the UI
- Update the description
- Repeat next week

Over time, this approach breaks down:

- Files are updated, but metadata isn’t
- Uploads become repetitive and error-prone
- Data refreshes depend on someone remembering to do them

What we want instead is:

- A fully automated flow
- Consistent dataset and resource metadata
- The ability to update data on a schedule

That’s exactly what the PortalJS Cloud API enables.

## Finding Your PortalJS Cloud API

Every PortalJS Cloud portal comes with its **own API**.

You can access your portal’s API documentation using the following pattern:

```
https://api.cloud.portaljs.com/{your-portal}/api/3/docs
```

For example, for the Datopian portal:

```
https://api.cloud.portaljs.com/@datopian/api/3/docs
```

This interactive documentation lets you:

- Explore all available endpoints
- See required parameters and response schemas
- Test requests directly from the browser

> **Tip:** Bookmark this page—you’ll use it constantly when building and debugging integrations.

## Authentication and API Keys

### Public access (no API key)

Without an API key, anyone can:

- Read public datasets
- Access public resources and data files

For example, a simple public read request:

```python
import requests

response = requests.get(
    "https://api.cloud.portaljs.com/@datopian/api/3/action/package_search"
)

result = response.json()
print(result)
```

### Authenticated access (API key required)

To create or update data, you’ll need an API key. Authenticated actions include:

- Creating datasets
- Creating resources
- Uploading or replacing data files
- Updating dataset and resource metadata

#### Generating an API key

To generate an API key:

1. Log in to the [**PortalJS Cloud dashboard**](https://cloud.portaljs.com/auth/signin)
2. Navigate to [**your user profile**](https://cloud.portaljs.com/profile/api-keys)
3. Create a new API key

Treat API keys like passwords and store them securely.

### Using the API key in requests

Include the API key in the `Authorization` header.

#### Common Python setup

We’ll use Python 3.10+ and the `requests` package. Set your portal slug and API key once and reuse them everywhere.

```python
import requests

PORTAL = "datopian"
API_KEY = "your-api-key"
API_BASE = f"https://api.cloud.portaljs.com/@{PORTAL}/api/3/action"

headers = {
    "Content-Type": "application/json",
    "Authorization": API_KEY,
}
```

## Creating a Dataset via the API

In PortalJS, a **dataset** is the top-level container for related data and resources.

To create one programmatically, use the `package_create` endpoint.

```python
response = requests.post(
    f"{API_BASE}/package_create",
    headers=headers,
    json={
        "name": "automated-dataset",
        "title": "Automated Dataset",
        "notes": "This dataset is created and updated via the PortalJS Cloud API",
        "owner_org": PORTAL,
    },
)

result = response.json()
print(result)

dataset_id = result["result"]["id"]
```

> The `owner_org` field should be set to the organization that owns the dataset.
> You can find the organization identifier in the PortalJS Cloud dashboard.

---

## Creating a Resource for the Dataset

A **resource** represents a specific data file (CSV, JSON, etc.) attached to a dataset.

Create a resource using `resource_create`:

```python
response = requests.post(
    f"{API_BASE}/resource_create",
    headers=headers,
    json={
        "package_id": "automated-dataset",
        "name": "latest-data",
        "description": "Latest version of the dataset",
        "format": "CSV",
    },
)

result = response.json()
print(result)

resource_id = result["result"]["id"]
```

Resources are typically created once and updated repeatedly.

## Uploading Data Using Pre-Signed URLs

PortalJS Cloud uses **pre-signed URLs** for uploads. This allows large files to be uploaded directly to storage without passing through the API server.

The upload flow is:

1. Request an upload URL
2. Upload the file using `PUT`
3. Finalize the upload so the resource metadata is updated

### Step 1: Request an upload URL

```python
upload_response = requests.post(
    f"{API_BASE}/resource_upload",
    headers=headers,
    json={
        "id": resource_id,
        "filename": "data.csv",
    },
)

upload_result = upload_response.json()
upload_url = upload_result["result"]["presigned_url"]
print(upload_url)
```

### Step 2: Upload the file

Create a minimal CSV file locally first, for example `data.csv`:

```csv
id,name
1,Example row
```

Then upload the file:

```python
with open("./data.csv", "rb") as file_handle:
    requests.put(upload_url, data=file_handle)
```

### Step 3: Finalize the upload

```python
requests.post(
    f"{API_BASE}/resource_upload_finalize",
    headers=headers,
    json={
        "id": resource_id,
    },
)
```

At this point, the resource is updated and consumers will see the new data.

## Updating Resource Data Automatically

This same upload flow can be reused every time your data changes:
- Daily refreshes
- Weekly exports
- Data generated from upstream systems

You do **not** need to create a new resource each time. Updating the existing resource ensures:
- Stable URLs
- Consistent metadata
- A clean dataset structure

## Putting It All Together: End-to-End Automation

A typical automation flow looks like this:

**One-time**
- Create dataset
- Create resource

**On every run**
- Generate or fetch new data
- Request upload URL
- Upload file
- Finalize the upload

## Common Dataset and Resource Operations

Here are a few additional calls you’ll use often once your pipeline is in place.

### Search datasets

```python
search_response = requests.get(
    f"{API_BASE}/package_search",
    params={"q": "climate", "rows": 5},
)

search_result = search_response.json()
print(search_result)
```

### Patch a dataset

```python
dataset_patch_response = requests.post(
    f"{API_BASE}/package_patch",
    headers=headers,
    json={
        "id": dataset_id,
        "notes": "Updated description from automation.",
    },
)

dataset_patch_result = dataset_patch_response.json()
print(dataset_patch_result)
```

### Delete a dataset

```python
dataset_delete_response = requests.post(
    f"{API_BASE}/package_delete",
    headers=headers,
    json={"id": dataset_id},
)

dataset_delete_result = dataset_delete_response.json()
print(dataset_delete_result)
```

### Patch a resource

```python
resource_patch_response = requests.post(
    f"{API_BASE}/resource_patch",
    headers=headers,
    json={
        "id": resource_id,
        "description": "Updated resource description.",
    },
)

resource_patch_result = resource_patch_response.json()
print(resource_patch_result)
```

### Delete a resource

```python
resource_delete_response = requests.post(
    f"{API_BASE}/resource_delete",
    headers=headers,
    json={"id": resource_id},
)

resource_delete_result = resource_delete_response.json()
print(resource_delete_result)
```

## Full End-to-End Script

If you want a single copy-paste file with all the steps (create dataset, create resource, upload, finalize), use this:

```python
import requests

PORTAL = "datopian"
API_KEY = "your-api-key"
API_BASE = f"https://api.cloud.portaljs.com/@{PORTAL}/api/3/action"

headers = {
    "Content-Type": "application/json",
    "Authorization": API_KEY,
}

dataset_response = requests.post(
    f"{API_BASE}/package_create",
    headers=headers,
    json={
        "name": "automated-dataset",
        "title": "Automated Dataset",
        "notes": "This dataset is created and updated via the PortalJS Cloud API",
        "owner_org": PORTAL,
    },
)

dataset_result = dataset_response.json()
dataset_id = dataset_result["result"]["id"]

resource_response = requests.post(
    f"{API_BASE}/resource_create",
    headers=headers,
    json={
        "package_id": dataset_id,
        "name": "latest-data",
        "description": "Latest version of the dataset",
        "format": "CSV",
    },
)

resource_result = resource_response.json()
resource_id = resource_result["result"]["id"]

filename = "data.csv"
with open(filename, "w", encoding="utf-8") as file_handle:
    file_handle.write("id,name\n1,Example row\n")

upload_response = requests.post(
    f"{API_BASE}/resource_upload",
    headers=headers,
    json={
        "id": resource_id,
        "filename": filename,
    },
)

upload_result = upload_response.json()
upload_url = upload_result["result"]["presigned_url"]

with open(filename, "rb") as file_handle:
    requests.put(upload_url, data=file_handle)

requests.post(
    f"{API_BASE}/resource_upload_finalize",
    headers=headers,
    json={
        "id": resource_id,
    },
)
```

## Conclusion and Next Steps

Using the PortalJS Cloud API, you can move from manual uploads to a fully automated, reliable data publishing workflow.

You’ve seen how to:

- Discover your portal’s API
- Authenticate with API keys
- Create datasets and resources
- Upload and update data programmatically
- Search, update, and delete datasets and resources

Explore your portal’s API documentation to go further and tailor automation to your data workflows.
</content>
<summary type="html">This guide walks through using the PortalJS Cloud API to programatically create datasets, add resources, upload and replace data files, and update metadata—showing how to build repeatable data update pipelines with code examples.</summary>
</entry>

<entry>
<title>Turning OpenMetadata into a User-Friendly Data Portal with PortalJS</title>
<id>https://portaljs.com/blog/turning-openmetadata-into-a-user-friendly-data-portal-with-portaljs</id>
<link href="https://portaljs.com/blog/turning-openmetadata-into-a-user-friendly-data-portal-with-portaljs" />
<updated>2026-01-09T00:00:00.000Z</updated>
<published>2026-01-09T00:00:00.000Z</published>
<author>
<name>João Demenech</name>
</author>
<content type="html">
OpenMetadata is a strong foundation for modern data governance. It excels at managing metadata, lineage, ownership, and data quality, and it is clearly designed for data engineers, platform teams, and governance practitioners.

Its user interface reflects that focus. Concepts such as database services, schemas, and assets are exposed directly, assuming users understand how data infrastructure works. This is effective for power users, but it creates friction when OpenMetadata is used by a broader audience.

Many organizations want researchers, analysts, partners, or other non-technical users to explore their data. For them, how data is stored matters far less than what the data represents and how it can be used.

## Making OpenMetadata easier to explore with PortalJS

PortalJS helps solve this by turning OpenMetadata into a data portal that is easier to browse and understand. It keeps all the existing metadata, but presents it in a simpler way, so people can focus on datasets and their contents instead of technical details.

A ready-to-use, open-source template powered by Next.js and Tailwind CSS is available to get started quickly:

👉 https://github.com/datopian/portaljs-frontend-starter-omd

<img style={{"marginBottom": 0}} src="/static/img/blog/2026-01-09-turning-openmetadata-into-a-user-friendly-data-portal-with-portaljs/from.png" />
<div style={{textAlign: "center"}}>
*OpenMetadata explore page, where users navigate metadata through infrastructure concepts*
</div>

<img style={{"marginBottom": 0}} src="/static/img/blog/2026-01-09-turning-openmetadata-into-a-user-friendly-data-portal-with-portaljs/to.png" />

| <div style={{textAlign: "center"}}> | ||

| *PortalJS dataset search page, focused on helping users quickly find datasets* | ||

| </div> | ||

|

|

||

| ## Why discovery is hard for non-technical users | ||

|

|

||

| In OpenMetadata, navigation mirrors how data is stored: | ||

|

|

||

| - database services | ||

| - databases | ||

| - schemas | ||

| - tables and assets | ||

|

|

||

| This structure makes sense from an engineering point of view, but it forces users to understand internal architecture before they can answer a simpler question: *what data exists that is relevant to me?* | ||

|

|

||

| Most data consumers think in terms of datasets, domains, topics, and documentation. When finding data requires understanding storage layers, many users struggle to get value from the catalog. | ||

|

|

||

| This is not a limitation of OpenMetadata’s metadata model. It is a mismatch between a governance-focused interface and a discovery-focused use case. | ||

|

|

||

| ## Access and sharing add another layer of friction | ||

|

|

||

| OpenMetadata is designed as an authenticated system. Requiring users to sign in is often the right choice for governance workflows, but it limits how metadata can be shared. | ||

|

|

||

| This makes it harder to: | ||

| - Share data with external collaborators | ||

| - Build lightweight data portals | ||

| - Expose selected metadata to broader audiences | ||

|

|

||

| OpenMetadata is not intended to be a flexible, audience-facing data portal, which is why many teams look for an additional interface focused on exploration and reading. | ||

|

|

||

| ## PortalJS as a data portal for OpenMetadata | ||

|

|

||

| PortalJS provides that interface. | ||

|

|

||

| It is an open-source framework for building data portals on top of systems like OpenMetadata. OpenMetadata continues to manage metadata, ownership, and lineage, while PortalJS focuses on helping people find and understand data more easily. | ||

|

|

||

| This separation allows teams to keep the full power of OpenMetadata, while offering a much simpler experience to data consumers. | ||

|

|

||

|  | ||

|

|

||

| ## A simpler mental model for data discovery | ||

|

|

||

| The PortalJS OpenMetadata template reshapes how metadata is presented, using concepts that are easier for most users to understand: | ||

|

|

||

| - **Data Product → Dataset** | ||

| - **Domain → Organization** | ||

| - **Asset → Resource** | ||

|

|

||

| Nothing is removed or simplified in the metadata itself. The difference is how that information is organized and displayed, making it easier to browse, search, and explore. | ||
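As a rough illustration of that relabeling, the sketch below maps an OpenMetadata-style data product record onto a dataset-shaped dictionary. The input field names (`name`, `displayName`, `description`, `domain`, `assets`) are modeled loosely on OpenMetadata's data product entity, and the output keys are hypothetical; neither should be read as the template's actual data model. Values pass through unchanged; only the labels differ.

```python
from typing import Any


def data_product_to_dataset(product: dict[str, Any]) -> dict[str, Any]:
    """Relabel an OpenMetadata-style data product as a dataset-shaped dict.

    All field names here are illustrative assumptions.
    """
    domain = product.get("domain") or {}
    return {
        # Data Product -> Dataset
        "name": product["name"],
        "title": product.get("displayName") or product["name"],
        "description": product.get("description", ""),
        # Domain -> Organization
        "organization": domain.get("name"),
        # Asset -> Resource
        "resources": [
            {"name": asset.get("name"), "type": asset.get("type")}
            for asset in product.get("assets", [])
        ],
    }


example = {
    "name": "air-quality",
    "displayName": "Air Quality",
    "description": "Hourly sensor readings.",
    "domain": {"name": "environment", "type": "domain"},
    "assets": [{"name": "readings_2024", "type": "table"}],
}
print(data_product_to_dataset(example)["organization"])  # environment
```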

|

|

||

| ## What the template provides out of the box | ||

|

|

||

| The open-source PortalJS OpenMetadata template includes: | ||

|

|

||

| - A dataset search page | ||

| - Domain (organization) browsing | ||

| - A glossary page | ||

| - A dataset details page where users can understand the dataset metadata and browse available resources | ||

| - Resource detail pages | ||

|

|

||

| All pages are designed for read-only, exploration-first use. Metadata is fetched directly from OpenMetadata, with no duplication or manual syncing. | ||

|

|

||

|  | ||

|

|

||

| ## Open source, flexible by design | ||

|

|

||

| The template is built with **Next.js** and **Tailwind CSS**, making it easy to customize, extend, and brand. | ||

|

|

||

| Because it is fully open source, teams retain control over: | ||

| - The codebase | ||

| - How and where it is deployed | ||

| - Who can access which data | ||

|

|

||

| PortalJS can be adapted to different audiences and access requirements. | ||

|

|

||

| ## Not just for open data | ||

|

|

||

| While PortalJS can power open data portals, the template is not limited to public use cases. | ||

|

|

||

| It can be customized to: | ||

| - Add authentication | ||

| - Restrict access to specific datasets or domains | ||

| - Expose data conditionally based on users, roles, or custom properties | ||

|

|

||

| This makes it suitable for internal catalogs, research portals, partner-facing experiences, and fully public portals alike. | ||
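One way to picture conditional exposure is a filter applied before datasets reach a page. This minimal sketch assumes each dataset carries a hypothetical `visibility` custom property and an `allowed_roles` set; neither is a documented field of the template, and real deployments would take roles from their own authentication layer.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    name: str
    domain: str
    # Hypothetical custom properties used to drive access decisions.
    visibility: str = "public"  # "public" or "restricted"
    allowed_roles: set[str] = field(default_factory=set)


def visible_datasets(datasets: list[Dataset], user_roles: set[str]) -> list[Dataset]:
    """Return the datasets a user holding `user_roles` may see.

    Public datasets are always shown; restricted ones require at least
    one role shared with the dataset's allowed_roles.
    """
    return [
        ds
        for ds in datasets
        if ds.visibility == "public" or ds.allowed_roles & user_roles
    ]


catalog = [
    Dataset("census-summary", "demographics"),
    Dataset("patient-records", "health", "restricted", {"researcher"}),
]
# An anonymous visitor has no roles, so only public datasets remain.
print([d.name for d in visible_datasets(catalog, set())])  # ['census-summary']
```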

|

|

||

| ## From governance to exploration | ||

|

|

||

| OpenMetadata is built for managing metadata. PortalJS is built for helping people explore and understand data. | ||

|

|

||

| Together, they allow organizations to turn existing metadata into a user-friendly data portal that serves more people, without replacing governance tooling. | ||

|

|

||

| **We’ve used this approach and the PortalJS OpenMetadata template with many different clients, across a range of data platforms and use cases. In practice, it has proven to be a flexible and reliable way to make OpenMetadata easier to explore, without changing how metadata is managed underneath.** | ||

|

|

||

| For a concrete example of how this approach has worked in practice, check out our case study: | ||

|

|

||

| 👉 [Helping Researchers Find The Right Data Faster — With A Simple Frontend For OpenMetadata](https://www.datopian.com/showcase/case-studies/simple-frontend-for-openmetadata-with-portaljs). | ||

|

|

||

| If you are already using OpenMetadata and want to improve data discovery, the open-source PortalJS OpenMetadata template is a practical place to start. | ||

|

|

||

| 👉 https://github.com/datopian/portaljs-frontend-starter-omd | ||

|

|

||

| </content> | ||

| <summary type="html">OpenMetadata is excellent for governance and power users, but difficult for broader audiences. Learn how PortalJS turns OpenMetadata into a user-friendly data portal focused on discovery and navigation.</summary> | ||

| </entry> | ||

| <entry> | ||

| <title>Supercharging Data Portals with the PortalJS MCP Server</title> | ||

| <id>https://portaljs.com/blog/supercharging-data-portals-with-the-portaljs-mcp-server</id> | ||

| <link href="https://portaljs.com/blog/supercharging-data-portals-with-the-portaljs-mcp-server" /> | ||

| <updated>2025-11-25T00:00:00.000Z</updated> | ||

| <published>2025-11-25T00:00:00.000Z</published> | ||

| <author> | ||

| <name>anuveyatsu</name> | ||

| </author> | ||

| <content type="html"> | ||

| Back in September this year, we published [our first look at using MCP (Model Context Protocol) servers](/blog/mcp-server-ai-assistants-to-improve-data-portals) to give AI assistants structured access to data portals. | ||

|

|

||

| Now the implementation is live and fully open source. | ||

|

|

||

| PortalJS MCP runs in production on Cloudflare’s MCP SDK, which gives us a fast, global, edge-native runtime. It comes with low latency, high reliability, and no “AI integration infra tax” for you to pay. | ||

|

|

||

| The PortalJS MCP server is publicly available at: | ||

|

|

||

| ``` | ||

| mcp.portaljs.com | ||

| ``` | ||

|

|

||

| If your data portal runs on PortalJS Cloud, connecting it is dead simple. Your MCP endpoint is: | ||

|

|

||

| ``` | ||

| mcp.portaljs.com/@org-name/sse | ||

| ``` | ||

|

|

||

| Paste that into ChatGPT, Claude, or any MCP-capable client, and your AI assistant immediately gains structured access to your datasets, metadata, and previews. | ||

|

|

||

| And because we think this should be a standard building block for modern data portals, we’ve open sourced the whole implementation here: | ||

|

|

||

| https://github.com/datopian/portaljs-mcp-server | ||

|

|

||

| Use it, fork it, deploy your own version, or just read through it to understand how MCP can sit cleanly on top of a data portal. | ||

|

|

||

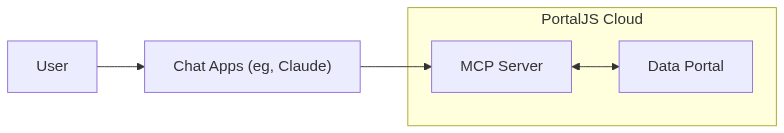

| [](https://mermaid.live/edit#pako:eNpNjl9PgzAUxb9Kc580YYQxKLQxJhs8GU0WF19c91Dt3VgClJTWqITvbhm6eJ_un_M75w7wrhUCh5ORXUUen0VLfK33Lz2aA1ks7slmL6CopCXrruvJDZ4CUtTSKbwVcJjlm4uw2D8VW7JD8-HR-dC7t9l4q42V9cPOo9qp-ThV8a8ld5NL6eNKaeUvcs3AVkHg3zwr4NY4DKBB08hphGGSCLAVNiiA-1bhUbraChDt6LFOtq9aN3-k0e5UAT_KuveT65S0WJ6lf7W5bo0PRFNo11rgaXbxAD7AJ3DGQrqiGY3yPMnSnAXwBXxJozBKknQV53FMo4iNAXxfMqOQspwtaZzSJM4ymrDxBwREbd4) | ||

| _Figure 1: Architecture diagram._ | ||

|

|

||

| ## Why MCP Is a Game-Changer for Data Portals | ||

|

|

||

| AI chats are powerful, but without structured access they’re basically guessing. MCP fixes that by giving models secure, predictable tools to interact with real systems — including your data portal. | ||

|

|

||

| In practice, this unlocks: | ||

|

|

||

| * **Reliable dataset discovery** backed by actual portal data search | ||

| * **Accurate metadata exploration** without hallucination risk | ||

| * **On-demand previews** (rows, schema, field types) | ||

| * **One clean integration** that works across multiple AI clients | ||

|

|

||

| This effectively turns your AI assistant into a precision data navigator — not just a polite autocomplete engine. | ||

|

|

||

| ## What’s Available in the MCP Server Today | ||

|

|

||

| The initial toolset focuses on high-value workflows for discovery and exploration: | ||

|

|

||

| ### Search tool for data discovery | ||

|

|

||

| * List datasets | ||

| * Keyword search | ||

| * Metadata filtering | ||

| * Dataset summaries | ||

|

|

||

| ### Get tool for metadata exploration | ||

|

|

||

| * Resource lists | ||

| * Field definitions | ||

| * Schema inspection | ||

| * Full metadata extraction | ||

|

|

||

| ### Table preview | ||

|

|

||

| * First N rows | ||

| * Column summaries | ||

| * Type inference | ||

| * Lightweight profiling | ||

|

|

||

| These tools are designed to be **fast, bounded, and safe**. The model doesn’t pull full datasets — it gets structured previews that are ideal for reasoning and analysis. | ||
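To make "bounded" concrete, here is a small Python sketch of a table preview that parses at most `limit` rows of a CSV and infers a coarse type per column. It is not the PortalJS MCP server's code (that server runs on Cloudflare); the function names, the `limit` default, and the three-way integer/number/string rule are assumptions for illustration.

```python
import csv
import io
from collections.abc import Iterable
from itertools import islice


def infer_type(values: list[str]) -> str:
    """Classify sample values as 'integer', 'number', or 'string'."""
    non_empty = [v for v in values if v.strip()]
    if not non_empty:
        return "string"
    for caster, label in ((int, "integer"), (float, "number")):
        try:
            for value in non_empty:
                caster(value)
            return label
        except ValueError:
            continue
    return "string"


def preview_csv(lines: Iterable[str], limit: int = 5) -> dict:
    """Return the header, at most `limit` data rows, and per-column types.

    csv.reader consumes `lines` lazily, so only the header and `limit`
    rows are parsed even when `lines` is a handle to a very large file.
    """
    reader = csv.reader(lines)
    header = next(reader, [])
    rows = list(islice(reader, limit))
    # Transpose rows into columns; zip stops at the shortest row.
    columns = list(zip(*rows)) if rows else [()] * len(header)
    types = {name: infer_type(list(col)) for name, col in zip(header, columns)}
    return {"fields": header, "rows": rows, "types": types}


sample = io.StringIO("id,name,score\n1,Ada,9.5\n2,Grace,8\n3,Linus,7.25\n")
result = preview_csv(sample, limit=2)
print(result["rows"])   # [['1', 'Ada', '9.5'], ['2', 'Grace', '8']]
print(result["types"])  # {'id': 'integer', 'name': 'string', 'score': 'number'}
```

Returning a fixed-size slice plus a type summary keeps the payload small and predictable, which is the property the paragraph above describes.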

|

|

||

| ## Works with ChatGPT, Claude, VS Code, and More | ||

|

|

||

| Our MCP server is model-agnostic by default: | ||

|

|

||

| * Claude — native MCP support | ||

| * ChatGPT Desktop — native MCP support | ||

| * VS Code MCP clients — plug-and-play | ||

| * Future MCP-enabled tools — automatically compatible | ||

|

|

||

| Wherever your team uses AI, your portal can now show up *as a first-class, tool-based data source*. | ||

|

|

||

| ## Why Cloudflare’s MCP SDK? | ||

|

|

||

| We chose Cloudflare’s SDK because MCP should feel like infrastructure you **never have to think about**. | ||

|

|

||

| Using Cloudflare gives us: | ||

|

|

||

| * **Edge deployment by default** → fast globally, no region bottlenecks | ||

| * **Battle-tested SSE support** → stable streaming tool calls | ||

| * **Simple scaling model** → no infra babysitting as usage grows | ||

|

|

||

| This matters because AI tooling isn’t forgiving. If your MCP endpoint is slow or flaky, your user’s trust evaporates instantly. Cloudflare’s runtime lets us keep it sharp. | ||

|

|

||

| ## What’s Coming Next | ||

|

|

||

| This is only the first layer. We’re already expanding the MCP toolbox, including: | ||

|

|

||

| * Write-back tools (tags, notes, curation workflows) | ||

| * Automated metadata enrichment | ||

| * Data quality checks | ||

| * Permission-aware exploration | ||

| * Semantic search | ||

| * Lineage and observability integration | ||

|

|

||

| The direction is clear: your data portal becomes an intelligent interface, not a static catalog. | ||

|

|

||

| ## Try It Today | ||

|

|

||

| If your portal runs on PortalJS Cloud, your MCP endpoint is: | ||

|

|

||

| ``` | ||

| https://mcp.portaljs.com/@org-name/sse | ||

| ``` | ||

|

|

||

| Plug it into your AI assistant and start exploring your data conversationally — with real structure, real metadata, and real previews. | ||

|

|

||

| Want help rolling this out to your team or customers? Reach out. We’re building this to make data portals genuinely useful in an AI-first world. | ||

| </content> | ||

| <summary type="html">Explore how the PortalJS MCP server unlocks AI-native discovery, metadata exploration, and data previews for modern portals — now open sourced and easy to integrate.</summary> | ||

| </entry> | ||

| <entry> | ||

| <title>Introducing Visualizations in PortalJS Cloud: Publish and Share Insights Alongside Your Datasets</title> | ||

| <id>https://portaljs.com/blog/introducing-visualizations-in-portaljs-cloud</id> | ||

| <link href="https://portaljs.com/blog/introducing-visualizations-in-portaljs-cloud" /> | ||

| <updated>2025-10-13T00:00:00.000Z</updated> | ||

| <published>2025-10-13T00:00:00.000Z</published> | ||

| <author> | ||

| <name>João Demenech</name> | ||

| </author> | ||

| <content type="html"> | ||

| ## Introduction | ||

|

|

||

| PortalJS Cloud now treats visualizations as first-class citizens in your data portal — discoverable and searchable, just like your datasets. | ||

|

|

||

| This feature allows users to publish external dashboards, reports, and data stories alongside their datasets, regardless of which tool they use — from Power BI to Tableau or custom-built data apps. | ||

|

|

||

| ## Why Visualizations Matter | ||

|

|

||

| Data portals are great for publishing and discovering datasets, but raw data rarely tells the full story. | ||

|

|

||

| Visualizations bridge that gap by helping users: | ||

|

|

||

| - Quickly grasp insights and trends | ||

| - Lower the barrier for non-technical audiences | ||

| - Combine narrative and analysis into data-driven storytelling | ||

| - Share interactive dashboards directly with stakeholders | ||

|

|

||

|  | ||

|

|

||

| ## Tool-Agnostic by Design | ||

|

|

||

| A core design goal for this feature was flexibility. | ||

|

|

||

| PortalJS doesn’t restrict you to one visualization framework. Instead, you can publish and catalog visualizations created with any external tool, such as: | ||

|

|

||

| - Power BI | ||

| - Observable | ||

| - Superset | ||

| - Tableau | ||

| - Custom-built dashboards or apps | ||

|

|

||

| When you publish a visualization in PortalJS Cloud, it becomes: | ||

|

|

||

| - A dedicated entry in your PortalJS instance (with metadata, tags, and ownership) | ||

| - Linked to the relevant groups and organizations | ||

| - Browsable and searchable, just like datasets | ||

|

|

||

| This means your portal can now serve as both a catalog of datasets and a catalog of insights built on top of them. | ||

|

|

||

| ## Optional Add-On: Managed Data App Powered By Observable Framework | ||

|

|

||

| For users who prefer a code-driven workflow, PortalJS offers an optional Observable Framework Data App Add-on integration. | ||

|

|

||

|  | ||

|

|

||

| [Observable Framework](https://observablehq.com/framework/) is an open-source JavaScript framework for building interactive data applications, dashboards, and reports. It was created by the team behind ObservableHQ, with the goal of making it easier for developers and analysts to turn data into live, shareable, and interactive visualizations using standard web technologies. | ||

|

|

||

| Data apps powered by the Observable Framework are static sites that can be built, deployed, and hosted anywhere — including platforms like Vercel. This makes them fast, portable, and easy to integrate into modern web stacks such as PortalJS. | ||

|

|

||

| The data app add-on works similarly to the main data portal: once enabled on PortalJS Cloud, a dedicated GitHub repository and deployment will be created for your data app. | ||

|

|

||

| <iframe src="https://drive.google.com/file/d/1Y6g89nMq3YjVGLXkaUP5VFQDxls6TZe-/preview" width="569" height="308" allow="autoplay; fullscreen"></iframe> | ||

|

|

||

| The GitHub repository is created based on the [PortalJS Data App Starter template](https://github.com/datopian/portaljs-data-app-starter). You can then clone it locally for development and raise PRs to deploy changes to your data app, such as the creation of new dashboards or branding. | ||

|

|

||

| ## Publishing Visualizations | ||

|

|

||

| Once your dashboards and reports are deployed to the data app (or any other tool), you can then publish them in PortalJS Cloud by creating corresponding visualizations. | ||

|

|

||

| <iframe src="https://drive.google.com/file/d/170GaJd8WK6_wEna2gR-dbgR-Z0krTVSt/preview" width="569" height="308" allow="autoplay; fullscreen"></iframe> | ||

|

|

||

| ## Browsable and Searchable Like Datasets | ||

|

|

||

| Finally, users will be able to explore the visualizations published to your portal and access the dashboards and reports created on the data app. | ||

|

|

||

| Just like with datasets, users can search visualizations by organization, groups, and tags. | ||

|

|

||

| <iframe src="https://drive.google.com/file/d/1zYJaz2HOCinVRFokrxooAWqQbvOpoPJ-/preview" width="569" height="308" allow="autoplay; fullscreen"></iframe> | ||

|

|

||

| On the visualization details page, the full metadata for the visualization can be found, along with a link to access it. | ||

|

|

||

| ## What’s Next | ||

|

|

||

| This release is just the beginning of a series of improvements focused on data visualizations. | ||

|

|

||

| In the next iterations, we aim to make creating and publishing visualizations as intuitive as exploring them, introducing simple, guided tools that allow non-technical users to build and customize visualizations directly within PortalJS Cloud, without needing to write code or manage external apps. | ||

|

|

||

| With these enhancements, we aim to make PortalJS Cloud not just a catalog of datasets and dashboards, but a platform where any user — technical or not — can explore, create, and share meaningful insights. | ||

|

|

||

| </content> | ||

| <summary type="html">Empower your data portal with an insights catalog — a new way to explore, share, and communicate findings alongside your datasets.</summary> | ||

| </entry> | ||

| <entry> | ||

| <title>MCP Server: A better way to connect AI assistants to data portals</title> | ||

| <id>https://portaljs.com/blog/mcp-server-ai-assistants-to-improve-data-portals</id> | ||

| <link href="https://portaljs.com/blog/mcp-server-ai-assistants-to-improve-data-portals" /> | ||

| <updated>2025-09-17T00:00:00.000Z</updated> | ||

| <published>2025-09-17T00:00:00.000Z</published> | ||

| <author> | ||

| <name>Theo Bertol</name> | ||

| </author> | ||

| <content type="html"> | ||

| ## Introduction | ||

|

|

||

| The world of AI assistants is rapidly evolving, but there's been a persistent challenge: how do we connect these powerful tools to the vast repositories of data that organizations maintain? Enter the **Model Context Protocol (MCP)** - Anthropic's groundbreaking open standard that's revolutionizing how AI systems interact with data sources. | ||

|

|

||

| ## What is the Model Context Protocol? | ||

|

|

||

|  | ||

|

|

||

| Before diving into our MCP Data Portal implementation, let's understand what makes MCP so revolutionary. Announced by Anthropic in November 2024, the Model Context Protocol is quickly becoming the universal standard for connecting AI assistants to data systems. | ||

|

|

||

| ### The Problem MCP Solves | ||

|

|

||

| Traditional AI assistants are often overwhelmed by unnecessary, copy-pasted context (JSON, CSV, etc.). We end up pasting large amounts of information before the conversation even gets started, burning tokens, and still not getting a useful answer because the signal is buried in noise. | ||

|

|

||

| - How can I make this conversation more straight to the point? | ||

| - Why does my AI chat keep storing useless information? | ||

| - When did I ask for this? | ||

| - Where is this chat's context going? | ||

|

|

||

| All of these questions point to the core issue: managing context. How do we fix it? | ||

|

|

||

| ### The MCP Solution | ||

|

|

||

| MCP replaces these copy-paste workflows and one-off, fragmented integrations with a single, open protocol. Think of it as the "USB standard" for AI-data connections: any MCP-compliant data source can serve context to any MCP-enabled AI client, and vice versa. | ||

|

|

||

| Put simply: instead of copy/pasting, contextualizing, and rephrasing, an MCP server provides direct, permissioned access to the right sources (APIs, databases, datasets, connectors). | ||

|

|

||

| With an MCP server, the assistant knows which tools to call to answer your question. No more pasting 10,000-line CSVs. | ||

|

|

||

| ### Why Connect Data Portals to AI Assistants? | ||

|

|

||

| Imagine being able to: | ||

|

|

||

| - **Ask natural language questions** about datasets: "Show me environmental datasets from 2020" | ||

| - **Get instant summaries** of complex data without browsing through catalogs | ||

| - **Discover relationships** between datasets across different organizations | ||

| - **Access metadata** and resources through conversational interfaces | ||

| - **Integrate data discovery** into your existing AI workflows | ||

|

|

||

| This is exactly what the MCP Server enables for data portals. | ||

|

|

||

| ## How It Works | ||

|

|

||

| The MCP Server acts as a bridge between MCP-compatible AI clients and data portal APIs. Here's the flow: | ||

|

|

||

| 1. **AI Assistant** sends a request over MCP | ||

| 2. **MCP Server** translates the request to data portal API calls | ||

| 3. **Data Portal** returns data and metadata | ||

| 4. **Server processes and formats** the response for the AI | ||

| 5. **AI Assistant** receives structured data to provide intelligent responses | ||
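Step 2 of this flow, translating a request into portal API calls, can be sketched in a few lines. The message envelope follows MCP's JSON-RPC `tools/call` request (`method`, plus `params` with `name` and `arguments`); the tool names `search` and `get`, their argument names, the 50-row cap, and the assumption that the portal exposes a CKAN-style Action API are all illustrative, not a description of the actual MCP Server.

```python
import json
from urllib.parse import urlencode


def tool_call_to_api_url(message: str, portal_url: str) -> str:
    """Translate an MCP `tools/call` request into a CKAN Action API URL.

    Tool and argument names are hypothetical; only the JSON-RPC envelope
    follows the MCP specification.
    """
    request = json.loads(message)
    if request.get("method") != "tools/call":
        raise ValueError("expected a tools/call request")
    name = request["params"]["name"]
    args = request["params"].get("arguments", {})
    base = portal_url.rstrip("/") + "/api/3/action/"
    if name == "search":
        # Cap the page size so the assistant only ever receives bounded results.
        limit = min(int(args.get("limit", 10)), 50)
        return base + "package_search?" + urlencode({"q": args.get("query", ""), "rows": limit})
    if name == "get":
        return base + "package_show?" + urlencode({"id": args["dataset_id"]})
    raise ValueError(f"unknown tool: {name}")


msg = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "search", "arguments": {"query": "air quality", "limit": 5}},
})
print(tool_call_to_api_url(msg, "https://demo.example.org"))
# https://demo.example.org/api/3/action/package_search?q=air+quality&rows=5
```

Steps 3 and 4 would then fetch that URL and trim the JSON response before handing it back to the assistant.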

|

|

||

| ### Government Open Data | ||

| Government agencies can enable citizens and researchers to interact with public datasets using natural language. Instead of navigating complex data portals, users can simply ask: "What environmental data is available for my city?" | ||

|

|

||

| ### Research Institutions | ||

| Researchers can quickly discover relevant datasets across multiple repositories, understand data provenance, and identify potential collaborations through AI-powered data exploration. | ||

|

|

||

| ### Enterprise Data Discovery | ||

| Organizations can connect their internal data portals to AI assistants, enabling employees to find and understand corporate data assets without specialized knowledge of data catalogs. | ||

|

|

||

| ### Data Journalism | ||

| Journalists can rapidly identify story-relevant datasets, understand their context, and explore connections between different data sources through conversational interfaces. | ||

|

|

||

| ## The Future of AI-Powered Data Discovery | ||

|

|

||

| The MCP Server for data portals represents more than just a technical integration - it's a glimpse into the future of how we'll interact with data. As MCP becomes the standard protocol for AI-data connections, we're moving toward a world where: | ||

|

|

||

| - **Data discovery is conversational**, not navigational | ||

| - **AI assistants understand context** from your organization's data | ||

| - **Complex data relationships** are explained in natural language | ||

| - **Data democratization** happens through familiar AI interfaces | ||

|

|

||

| ## Conclusion | ||

|

|

||

| The Model Context Protocol is transforming how AI systems access and interact with data. The MCP Server for data portals makes this connection seamless, secure, and scalable. | ||

|

|

||

| By bridging data portals with AI assistants, we're not just enabling new technical capabilities - we're fundamentally changing how people discover, understand, and work with data. The barriers between human curiosity and data insights are dissolving, replaced by natural, conversational interfaces that make data accessible to everyone.</content> | ||

| <summary type="html">How an MCP server bridges AI assistants and data portals, enabling seamless, efficient data discovery for ChatGPT, Claude, and other AI tools.</summary> | ||

| </entry> | ||

| <entry> | ||

| <title>How We Rebuilt a Legacy CKAN Portal into a Static, Read-Only Site with PortalJS</title> | ||

| <id>https://portaljs.com/blog/how-we-rebuilt-a-legacy-ckan-portal-into-a-static-read-only-site-with-portaljs</id> | ||

| <link href="https://portaljs.com/blog/how-we-rebuilt-a-legacy-ckan-portal-into-a-static-read-only-site-with-portaljs" /> | ||

| <updated>2025-07-29T00:00:00.000Z</updated> | ||

| <published>2025-07-29T00:00:00.000Z</published> | ||

| <author> | ||

| <name>baglanadaskhan</name> | ||

| </author> | ||

| <content type="html"> | ||

| ## Background | ||

|

|

||

| [DataHub v1](https://old.datahub.io/) was originally built as a CKAN 2.6-based data portal, hosting thousands of open datasets from organizations across the world. For many years, it served as a reliable place to discover, download, and share data. But like many long-running platforms, it started to show its age. | ||

|

|

||

| Over time, the maintenance burden grew increasingly difficult to justify: | ||

|

|

||

| * CKAN 2.6 was outdated and lacked long-term support | ||

| * The portal depended on numerous legacy plugins, some of which were custom and unmaintained | ||

| * Upgrades became risky and time-consuming | ||

| * Day-to-day stability relied on manual patching and workarounds | ||

|

|

||

| At the same time, the **value of the data remained high** — historical records, research outputs, and public datasets that people still searched for and used. We didn’t want to lose that. But we also didn’t want to keep investing in heavy infrastructure just to preserve read-only access. | ||

|

|

||

| So the idea emerged: **what if we turned the portal into a fully static site — no backend, no databases, just fast, reliable, and simple?** | ||

|

|

||

| ## The Goal | ||

|

|

||

| We wanted to preserve: | ||

|

|

||

| * Access to all datasets | ||

| * Dataset metadata (title, description, tags, license, resources) | ||

| * Basic search and navigation | ||

| * A clean and consistent UI | ||

|

|

||

| And we wanted to remove: | ||

|

|

||

| * The need for CKAN backend services (PostgreSQL, Solr, extensions) | ||

| * Admin/user accounts and dynamic features | ||

| * Any part of the system that required manual ops or upgrades | ||

|

|

||

| Our target was a **read-only static portal**, built on modern tooling and served entirely over CDN. | ||

|

|

||

| ## From Legacy to Lightweight | ||

|

|

||

| ### Stabilizing the CKAN Instance | ||

|

|

||

| Before migrating, we had to ensure the old CKAN site was stable enough to extract data from. We: | ||

|

|

||

| * Disabled login and registration | ||

| * Made the instance read-only | ||

| * Removed unused and broken plugins like `disqus`, `datapub`, and `validation` | ||

|

|

||

| This left us with a clean, static snapshot of the portal’s content that could be safely extracted. | ||

|

|

||

| ### Extracting Metadata | ||

|

|

||

| We needed a format that was both machine-readable and flexible. We chose the [Frictionless Data Package](https://specs.frictionlessdata.io/data-package/) spec — a widely used standard in the open data world. | ||

|

|

||

| Each dataset was exported as a datapackage.json file. For better structure and clarity, we organized them semantically by publisher: | ||

|

|

||

| ```text | ||

| /datasets/ | ||

| └── organization-name/ | ||

|     └── dataset-name/ | ||

|         ├── datapackage.json | ||

|         └── organization.json | ||

| ``` | ||

|

|

||

| This simple hierarchy helped mirror how CKAN groups datasets by organization, and allowed for clear URL routing and static page generation. | ||
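A minimal sketch of how that hierarchy can drive static routing: walk the tree and turn each `<organization>/<dataset>/datapackage.json` into one route. The `/{organization}/{dataset}` URL pattern is an assumption for illustration; the post does not say which pattern the site actually uses.

```python
from pathlib import Path


def dataset_routes(root: Path) -> list[str]:
    """Return one URL path per dataset folder under `root`.

    Expects root/<organization>/<dataset>/datapackage.json and skips
    folders that have no datapackage.json.
    """
    routes = []
    for descriptor in sorted(root.glob("*/*/datapackage.json")):
        dataset_dir = descriptor.parent
        organization = dataset_dir.parent.name
        routes.append(f"/{organization}/{dataset_dir.name}")
    return routes
```

A static site generator can feed a list like this to its page-generation step, so every dataset folder yields exactly one pre-rendered page.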

|

|

||

| All metadata files and downloadable resources were uploaded to Cloudflare R2 — an S3-compatible object storage with global CDN support. | ||

|

|

||

| ### Building the Frontend | ||

|

|

||

| We chose [PortalJS](https://portaljs.com/) — an open-source, React/Next.js-based framework designed for data portals. It allowed us to build: | ||

|

|

||

| * A homepage with basic intro and quick search | ||

| * A dataset listing page | ||

| * A dataset detail page rendered directly from datapackage.json | ||

|

|

||

| Everything is statically rendered at build time, including SEO metadata, resource tables, and file links. | ||
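Assuming the exported descriptors use the Data Package spec's standard properties (`name`, `title`, `description`, `keywords`, `licenses`, `resources`), a build-time step that turns one descriptor into the fields a detail page renders might look like this. Treating `keywords` as the home of the old CKAN tags is an assumption, as are the output key names.

```python
import json
from pathlib import Path


def page_props(descriptor_path: Path) -> dict:
    """Read a datapackage.json and return the fields a detail page renders."""
    package = json.loads(descriptor_path.read_text(encoding="utf-8"))
    licenses = package.get("licenses") or []
    first_license = licenses[0] if licenses else {}
    return {
        "title": package.get("title") or package.get("name", ""),
        "description": package.get("description", ""),
        # Assumption: CKAN tags were exported to the spec's `keywords` list.
        "tags": package.get("keywords", []),
        # The spec allows several licenses; this sketch shows only the first.
        "license": first_license.get("title") or first_license.get("name"),
        "resources": [
            {
                "name": resource.get("name"),
                "format": resource.get("format"),
                # `path` may be a URL or a path relative to the descriptor.
                "url": resource.get("path"),
            }
            for resource in package.get("resources", [])
        ],
    }
```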

|

|

||

| We also customized layout components using Tailwind CSS and React, giving the new portal a clean and responsive interface. | ||

|

|

||

| ### Implementing Search Without a Backend | ||

|

|

||

| CKAN uses Solr for powerful search, but it’s a server-side dependency. We replaced it with [Lunr.js](https://lunrjs.com/), a client-side search engine that indexes documents in the browser. | ||

|

|

||

| We wrote a script that scans all `datapackage.json` files and builds a Lunr index at deploy time. The result is a fast, compact index (\~1MB) bundled with the frontend and loaded entirely in-browser. | ||
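Lunr builds its index in JavaScript, so the following is only a language-neutral picture of what such an index holds. This Python sketch collects a few metadata fields per dataset and builds a plain inverted index (term to dataset ids) with AND-style lookup. It is a simplified stand-in, not Lunr: there is no stemming, field boosting, or relevance scoring, and the field choice is an assumption.

```python
import re
from collections import defaultdict

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return TOKEN.findall(text.lower())


def build_index(packages: dict[str, dict]) -> dict[str, set[str]]:
    """Map each term to the ids of datasets whose metadata contains it."""
    index: dict[str, set[str]] = defaultdict(set)
    for dataset_id, package in packages.items():
        text = " ".join([
            package.get("title", ""),
            package.get("description", ""),
            " ".join(package.get("keywords", [])),
        ])
        for term in tokenize(text):
            index[term].add(dataset_id)
    return dict(index)


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return ids of datasets that contain every term in the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    return set.intersection(*(index.get(term, set()) for term in terms))


packages = {
    "acme/sales": {"title": "Quarterly Sales", "keywords": ["finance"]},
    "gov/census": {
        "title": "Census 2020",
        "description": "Population counts by region",
        "keywords": ["population"],
    },
}
index = build_index(packages)
print(sorted(search(index, "population census")))  # ['gov/census']
```

Serializing an index like this to JSON at deploy time and loading it in the browser is the same trade-off the post describes: no search server, at the cost of shipping the index with the frontend.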

|

|

||

| For our use case — static data and a finite number of datasets — Lunr was the perfect fit. | ||

|

|

||

| ### CI/CD and Deployment | ||

|

|

||

| We automated everything with GitHub Actions: | ||

|

|

||

| * Build the PortalJS frontend | ||

| * Pull the latest metadata and generate the search index | ||

| * Deploy to Vercel | ||

|

|

||

| There’s no server, no database, and nothing to monitor. The site is regenerated automatically when content changes. | ||

|

|

||

| ## What We Removed — By Design | ||

|

|

||

| This wasn’t a downgrade — it was a conscious shift toward minimalism. We removed: | ||

|

|

||

| * CKAN’s web UI and admin panel | ||

| * Solr search engine | ||

| * Login, registration, and permissions | ||

|

|

||

| What remained was what mattered most: **the data itself**, presented clearly and accessibly. | ||

|

|

||

| ## Results | ||

* Over **1,000 datasets** preserved and discoverable
* Site loads in milliseconds, with no waiting on backend queries
* Infrastructure costs nearly eliminated
* Maintenance reduced to a few GitHub workflows

The new [old.datahub.io](https://old.datahub.io/) is not just faster; it's also cleaner, safer, and easier to evolve.

---

Thanks for reading! Want to explore more? Check out [PortalJS](https://portaljs.com/), or reach out if you’re thinking of giving your legacy data portal a second life: static, searchable, and serverless.

</content>
<summary type="html">Migrating from a heavy CKAN 2.6 portal to a fast, fully static frontend, and the technical journey behind it.</summary>
</entry>


This PR appears to include broad feed changes unrelated to the case-study objective

The diff includes many editorial/feed-entry updates beyond the Lincolnshire case-study scope, which increases merge risk and makes intent hard to validate. Please isolate these feed/content refreshes into a dedicated PR (or regenerate atom.xml from only the intended content changes) to keep this PR scoped and reviewable.

Also applies to: 3803-3804, 5504-5504

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/public/atom.xml` around lines 15 - 1014, The PR accidentally bundles broad RSS/Atom feed updates in site/public/atom.xml (multiple <entry> items such as the entries titled "Keep Your Portal Data Fresh: A Hands-On Guide to the PortalJS Cloud API", "Turning OpenMetadata into a User-Friendly Data Portal with PortalJS", etc.) that are unrelated to the Lincolnshire case-study; revert or remove any <entry> elements not part of the case-study, then regenerate atom.xml from only the intended case-study content (or run the canonical feed generation script used by the site build), verify the regenerated feed contains only the targeted entry/changes, and commit those feed changes in a separate PR so this PR remains scoped to the case-study.
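For regeneration to produce only the intended diff, the generator can derive every timestamp and ordering from content rather than from build time. Below is a minimal TypeScript sketch. It assumes the generator knows each post's slug, title, and an ISO 8601 `updated` value. The `FeedEntry` shape, `feedUpdated`, and `sortEntries` are hypothetical, not the site's actual feed script.

```typescript
// Hypothetical view of a post as a feed generator might see it.
interface FeedEntry {
  slug: string;
  title: string;
  updated: string; // ISO 8601 timestamp taken from the post's front matter
}

// Feed-level <updated>: the newest entry timestamp rather than the build time,
// so regenerating without content changes reproduces the same value.
function feedUpdated(entries: FeedEntry[]): string {
  if (entries.length === 0) throw new Error("feed has no entries");
  const newest = Math.max(...entries.map((e) => Date.parse(e.updated)));
  if (Number.isNaN(newest)) throw new Error("unparseable entry timestamp");
  return new Date(newest).toISOString();
}

// Stable entry order: newest first, ties broken by slug, independent of the
// order in which files were read from disk.
function sortEntries(entries: FeedEntry[]): FeedEntry[] {
  return [...entries].sort((a, b) => {
    const byDate = Date.parse(b.updated) - Date.parse(a.updated);
    if (byDate !== 0) return byDate;
    return a.slug < b.slug ? -1 : a.slug > b.slug ? 1 : 0;
  });
}
```

Atom (RFC 4287) requires exactly one feed-level `<updated>` element, so it cannot simply be omitted. Deriving it from entry content stops it from churning on every build.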

Explore your portal’s API documentation to go further and tailor automation to your data workflows.
</content>
<summary type="html">This guide walks through using the PortalJS Cloud API to programatically create datasets, add resources, upload and replace data files, and update metadata—showing how to build repeatable data update pipelines with code examples.</summary>


Fix typo in summary text

At Line 441, “programatically” should be “programmatically”.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/public/atom.xml` at line 441, Fix the typo in the Atom feed summary: update the text inside the <summary type="html"> element (the summary string "programatically create datasets, ...") to use the correct spelling "programmatically" instead of "programatically" so the summary reads "...to programmatically create datasets, add resources, upload and replace data files, and update metadata—showing how to build repeatable data update pipelines with code examples."

* [Population growth estimates and projections](/core/population-growth-estimates-and-projections) :clock1: updated annually

There will be more automated datasets on :datahub: so join our [community chat on :discord: Discord](https://discord.gg/krmj5HM6He) and our Newsletter (insert link) to receive the latest news!
There will be more automated datasets on :datahub: so join our [community chat on :discord: Discord](https://discord.gg/KrRzMKU) and our Newsletter (insert link) to receive the latest news!


Remove placeholder text from user-facing copy

At Line 5504, “Newsletter (insert link)” is still a placeholder and ships as broken/stale copy. Replace with a real URL or remove the mention.

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed.

In `@site/public/atom.xml` at line 5504, Replace the user-facing placeholder "Newsletter (insert link)" in the quoted atom feed string "There will be more automated datasets on :datahub: so join our [community chat on :discord: Discord](https://discord.gg/KrRzMKU) and our Newsletter (insert link) to receive the latest news!" by either removing the newsletter mention entirely or replacing "Newsletter (insert link)" with the actual newsletter URL/text; ensure the final copy uses a valid Markdown/HTML link (e.g., "Newsletter (https://...)" or "[Newsletter](https://...)") so no broken/stale placeholder remains.
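To keep a placeholder like this from shipping again, a build step could scan the generated feed text before publishing. Below is a minimal TypeScript sketch. The pattern list and `findPlaceholders` are hypothetical and not part of the site's tooling. The sketch only flags lines; the fix itself (a real URL or removing the mention) stays a human decision.

```typescript
// Markers that must not reach published copy. Assumption: bracketed
// "(insert ...)" notes are the only placeholder convention in use.
const PLACEHOLDER_PATTERNS: RegExp[] = [/\(insert [^)]*\)/i];

interface PlaceholderHit {
  line: number; // 1-based line number in the scanned text
  text: string; // the offending line, trimmed
}

// Return every line of generated feed or Markdown text that still holds a placeholder.
function findPlaceholders(source: string): PlaceholderHit[] {
  const hits: PlaceholderHit[] = [];
  source.split(/\r?\n/).forEach((lineText, index) => {
    // Patterns have no /g flag, so RegExp.test keeps no state between lines.
    if (PLACEHOLDER_PATTERNS.some((re) => re.test(lineText))) {
      hits.push({ line: index + 1, text: lineText.trim() });
    }
  });
  return hits;
}
```

A script could read the generated `site/public/atom.xml` and call `findPlaceholders` on its contents. If the result is non-empty, the script exits with a non-zero status, which turns this review finding into a CI check.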